Compare commits

91 Commits

v4.9.0-alp

...

v4.9.2-alp

| Author | SHA1 | Date | |
|---|---|---|---|
| | e6efd3318d | | |
| | 95ffd710aa | | |
| | 097bb97417 | | |
| | 4faea8d2b8 | | |
| | 7ecadb33d1 | | |
| | ce61bda223 | | |
| | 8dba01da73 | | |
| | dcdad6fa39 | | |
| | 11d080d521 | | |
| | 2f954d2f3f | | |
| | a956fbca73 | | |
| | db7510c5eb | | |
| | b87cc353da | | |
| | ff85121546 | | |
| | 79f9d83349 | | |
| | 159bf17369 | | |
| | 4512b23d4d | | |
| | 5300ddf654 | | |
| | f1f0dfc691 | | |
| | e5acec8dc7 | | |
| | cb832b6305 | | |
| | ae9b8a2b8e | | |
| | d209255015 | | |
| | 6eae841e4a | | |
| | 75c1631670 | | |
| | 97a182c7fd | | |
| | a0ad450032 | | |
| | 74b36219e1 | | |
| | fc23db745c | | |
| | 8a68de6471 | | |
| | 1c4e0c66d5 | | |
| | 6dcdd540b9 | | |
| | 48233c7d55 | | |
| | f3ef56998d | | |
| | 7e7269b2ba | | |
| | 606e9505c0 | | |
| | 1db39e8907 | | |
| | 7f13eb4642 | | |
| | 9a1fff74fd | | |
| | de87639fce | | |
| | f9cecfd49a | | |
| | 70563d2bcb | | |
| | 4ca99a6361 | | |
| | 8f70e436cf | | |
| | e75d81d05a | | |
| | 56793114d8 | | |
| | a7b09461be | | |
| | cd2cb3f6ea | | |
| | 56f77b58c9 | | |
| | 1d697f97d7 | | |
| | bb30ca4859 | | |
| | 139e934345 | | |
| | bf69aa6e3d | | |
| | 3a730a23cb | | |
| | 75cb46796a | | |
| | effdb5884b | | |
| | 9523ba92f3 | | |
| | 2f522aff90 | | |
| | 0dccfd176d | | |
| | 867e8acf27 | | |
| | 36da8c862f | | |
| | b50cf49cc7 | | |
| | 2270e149eb | | |
| | 4957bdcba1 | | |
| | bca5cf738a | | |
| | c35bb5841c | | |
| | 6e045093b1 | | |
| | a1b114e426 | | |
| | 54fde7630c | | |
| | 467c408ad7 | | |
| | c005a94454 | | |
| | c8a35822d6 | | |
| | d05259dedd | | |
| | 8980664b8a | | |
| | 43f30b3790 | | |
| | 3ddbb37612 | | |
| | 7c419a26b3 | | |
| | e131465d25 | | |
| | a345e56508 | | |
| | 32ce032995 | | |
| | 0bc075aa4e | | |
| | 3e3f2165db | | |
| | e1aa068858 | | |
| | e98d6f1d30 | | |
| | 54eb5c0547 | | |
| | adf5377ebe | | |
| | 08b6f594df | | |
| | 90d13ee3df | | |
| | 5c718abd50 | | |
| | 2d351c3654 | | |
| | 662a4a4671 | | |

2

.github/workflows/docs-deploy-kubeconfig.yml

vendored

@@ -6,8 +6,6 @@ on:

|

|||||||

- 'docSite/**'

|

- 'docSite/**'

|

||||||

branches:

|

branches:

|

||||||

- 'main'

|

- 'main'

|

||||||

tags:

|

|

||||||

- 'v*.*.*'

|

|

||||||

|

|

||||||

jobs:

|

jobs:

|

||||||

build-fastgpt-docs-images:

|

build-fastgpt-docs-images:

|

||||||

|

|||||||

2

.github/workflows/docs-deploy-vercel.yml

vendored

@@ -7,8 +7,6 @@ on:

|

|||||||

- 'docSite/**'

|

- 'docSite/**'

|

||||||

branches:

|

branches:

|

||||||

- 'main'

|

- 'main'

|

||||||

tags:

|

|

||||||

- 'v*.*.*'

|

|

||||||

|

|

||||||

# A workflow run is made up of one or more jobs that can run sequentially or in parallel

|

# A workflow run is made up of one or more jobs that can run sequentially or in parallel

|

||||||

jobs:

|

jobs:

|

||||||

|

|||||||

2

.github/workflows/docs-preview.yml

vendored

@@ -4,8 +4,6 @@ on:

|

|||||||

pull_request_target:

|

pull_request_target:

|

||||||

paths:

|

paths:

|

||||||

- 'docSite/**'

|

- 'docSite/**'

|

||||||

branches:

|

|

||||||

- 'main'

|

|

||||||

workflow_dispatch:

|

workflow_dispatch:

|

||||||

|

|

||||||

# A workflow run is made up of one or more jobs that can run sequentially or in parallel

|

# A workflow run is made up of one or more jobs that can run sequentially or in parallel

|

||||||

|

|||||||

3

.github/workflows/fastgpt-preview-image.yml

vendored

@@ -1,9 +1,6 @@

|

|||||||

name: Preview FastGPT images

|

name: Preview FastGPT images

|

||||||

on:

|

on:

|

||||||

pull_request_target:

|

pull_request_target:

|

||||||

paths:

|

|

||||||

- 'projects/app/**'

|

|

||||||

- 'packages/**'

|

|

||||||

workflow_dispatch:

|

workflow_dispatch:

|

||||||

|

|

||||||

jobs:

|

jobs:

|

||||||

|

|||||||

29

.github/workflows/fastgpt-test.yaml

vendored

Normal file

@@ -0,0 +1,29 @@

|

|||||||

|

name: 'FastGPT-Test'

|

||||||

|

on:

|

||||||

|

pull_request:

|

||||||

|

workflow_dispatch:

|

||||||

|

|

||||||

|

jobs:

|

||||||

|

test:

|

||||||

|

runs-on: ubuntu-latest

|

||||||

|

|

||||||

|

permissions:

|

||||||

|

# Required to checkout the code

|

||||||

|

contents: read

|

||||||

|

# Required to put a comment into the pull-request

|

||||||

|

pull-requests: write

|

||||||

|

|

||||||

|

steps:

|

||||||

|

- uses: actions/checkout@v4

|

||||||

|

- uses: pnpm/action-setup@v4

|

||||||

|

with:

|

||||||

|

version: 10

|

||||||

|

- name: 'Install Deps'

|

||||||

|

run: pnpm install

|

||||||

|

- name: 'Test'

|

||||||

|

run: pnpm run test

|

||||||

|

- name: 'Report Coverage'

|

||||||

|

# Set if: always() to also generate the report if tests are failing

|

||||||

|

# Only works if you set `reportOnFailure: true` in your vite config as specified above

|

||||||

|

if: always()

|

||||||

|

uses: davelosert/vitest-coverage-report-action@v2

|

||||||

1

.gitignore

vendored

@@ -44,3 +44,4 @@ files/helm/fastgpt/fastgpt-0.1.0.tgz

|

|||||||

files/helm/fastgpt/charts/*.tgz

|

files/helm/fastgpt/charts/*.tgz

|

||||||

|

|

||||||

tmp/

|

tmp/

|

||||||

|

coverage

|

||||||

|

|||||||

@@ -5,4 +5,6 @@ node_modules

|

|||||||

docSite/

|

docSite/

|

||||||

*.md

|

*.md

|

||||||

|

|

||||||

cl100l_base.ts

|

pnpm-lock.yaml

|

||||||

|

cl100l_base.ts

|

||||||

|

dict.json

|

||||||

7

.vscode/i18n-ally-custom-framework.yml

vendored

@@ -17,15 +17,8 @@ usageMatchRegex:

|

|||||||

# you can ignore it and use your own matching rules as well

|

# you can ignore it and use your own matching rules as well

|

||||||

- "[^\\w\\d]t\\(['\"`]({key})['\"`]"

|

- "[^\\w\\d]t\\(['\"`]({key})['\"`]"

|

||||||

- "[^\\w\\d]commonT\\(['\"`]({key})['\"`]"

|

- "[^\\w\\d]commonT\\(['\"`]({key})['\"`]"

|

||||||

# 支持 appT("your.i18n.keys")

|

|

||||||

- "[^\\w\\d]appT\\(['\"`]({key})['\"`]"

|

|

||||||

# 支持 datasetT("your.i18n.keys")

|

|

||||||

- "[^\\w\\d]datasetT\\(['\"`]({key})['\"`]"

|

|

||||||

- "[^\\w\\d]fileT\\(['\"`]({key})['\"`]"

|

- "[^\\w\\d]fileT\\(['\"`]({key})['\"`]"

|

||||||

- "[^\\w\\d]publishT\\(['\"`]({key})['\"`]"

|

|

||||||

- "[^\\w\\d]workflowT\\(['\"`]({key})['\"`]"

|

- "[^\\w\\d]workflowT\\(['\"`]({key})['\"`]"

|

||||||

- "[^\\w\\d]userT\\(['\"`]({key})['\"`]"

|

|

||||||

- "[^\\w\\d]chatT\\(['\"`]({key})['\"`]"

|

|

||||||

- "[^\\w\\d]i18nT\\(['\"`]({key})['\"`]"

|

- "[^\\w\\d]i18nT\\(['\"`]({key})['\"`]"

|

||||||

|

|

||||||

# A RegEx to set a custom scope range. This scope will be used as a prefix when detecting keys

|

# A RegEx to set a custom scope range. This scope will be used as a prefix when detecting keys

|

||||||

|

|||||||

@@ -129,7 +129,8 @@ https://github.com/labring/FastGPT/assets/15308462/7d3a38df-eb0e-4388-9250-2409b

|

|||||||

</a>

|

</a>

|

||||||

|

|

||||||

## 🌿 第三方生态

|

## 🌿 第三方生态

|

||||||

|

- [PPIO 派欧云:一键调用高性价比的开源模型 API 和 GPU 容器](https://ppinfra.com/user/register?invited_by=VITYVU&utm_source=github_fastgpt)

|

||||||

|

- [AI Proxy:国内模型聚合服务](https://sealos.run/aiproxy/?k=fastgpt-github/)

|

||||||

- [SiliconCloud (硅基流动) —— 开源模型在线体验平台](https://cloud.siliconflow.cn/i/TR9Ym0c4)

|

- [SiliconCloud (硅基流动) —— 开源模型在线体验平台](https://cloud.siliconflow.cn/i/TR9Ym0c4)

|

||||||

- [COW 个人微信/企微机器人](https://doc.tryfastgpt.ai/docs/use-cases/external-integration/onwechat/)

|

- [COW 个人微信/企微机器人](https://doc.tryfastgpt.ai/docs/use-cases/external-integration/onwechat/)

|

||||||

|

|

||||||

|

|||||||

@@ -69,7 +69,7 @@ Project tech stack: NextJs + TS + ChakraUI + MongoDB + PostgreSQL (PG Vector plu

|

|||||||

|

|

||||||

> When using [Sealos](https://sealos.io) services, there is no need to purchase servers or domain names. It supports high concurrency and dynamic scaling, and the database application uses the kubeblocks database, which far exceeds the simple Docker container deployment in terms of IO performance.

|

> When using [Sealos](https://sealos.io) services, there is no need to purchase servers or domain names. It supports high concurrency and dynamic scaling, and the database application uses the kubeblocks database, which far exceeds the simple Docker container deployment in terms of IO performance.

|

||||||

<div align="center">

|

<div align="center">

|

||||||

[](https://cloud.sealos.io/?openapp=system-fastdeploy%3FtemplateName%3Dfastgpt)

|

[](https://cloud.sealos.io/?openapp=system-fastdeploy%3FtemplateName%3Dfastgpt&uid=fnWRt09fZP)

|

||||||

</div>

|

</div>

|

||||||

|

|

||||||

Wait 2-4 minutes after deployment while the database is set up. It may be a bit slow at first because the basic settings are used.

|

Wait 2-4 minutes after deployment while the database is set up. It may be a bit slow at first because the basic settings are used.

|

||||||

|

|||||||

@@ -94,7 +94,7 @@ https://github.com/labring/FastGPT/assets/15308462/7d3a38df-eb0e-4388-9250-2409b

|

|||||||

|

|

||||||

- **⚡ デプロイ**

|

- **⚡ デプロイ**

|

||||||

|

|

||||||

[](https://cloud.sealos.io/?openapp=system-fastdeploy%3FtemplateName%3Dfastgpt)

|

[](https://cloud.sealos.io/?openapp=system-fastdeploy%3FtemplateName%3Dfastgpt&uid=fnWRt09fZP)

|

||||||

|

|

||||||

デプロイ 後、データベースをセットアップするので、2~4分待 ってください。基本設定 を 使 っているので、最初 は 少 し 遅 いかもしれません。

|

デプロイ 後、データベースをセットアップするので、2~4分待 ってください。基本設定 を 使 っているので、最初 は 少 し 遅 いかもしれません。

|

||||||

|

|

||||||

|

|||||||

@@ -100,7 +100,7 @@ services:

|

|||||||

exec docker-entrypoint.sh "$$@" &

|

exec docker-entrypoint.sh "$$@" &

|

||||||

|

|

||||||

# 等待MongoDB服务启动

|

# 等待MongoDB服务启动

|

||||||

until mongo -u myusername -p mypassword --authenticationDatabase admin --eval "print('waited for connection')" > /dev/null 2>&1; do

|

until mongo -u myusername -p mypassword --authenticationDatabase admin --eval "print('waited for connection')"; do

|

||||||

echo "Waiting for MongoDB to start..."

|

echo "Waiting for MongoDB to start..."

|

||||||

sleep 2

|

sleep 2

|

||||||

done

|

done

|

||||||

@@ -114,15 +114,15 @@ services:

|

|||||||

# fastgpt

|

# fastgpt

|

||||||

sandbox:

|

sandbox:

|

||||||

container_name: sandbox

|

container_name: sandbox

|

||||||

image: ghcr.io/labring/fastgpt-sandbox:v4.8.23-fix # git

|

image: ghcr.io/labring/fastgpt-sandbox:v4.9.1-fix2 # git

|

||||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt-sandbox:v4.8.23-fix # 阿里云

|

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt-sandbox:v4.9.1-fix2 # 阿里云

|

||||||

networks:

|

networks:

|

||||||

- fastgpt

|

- fastgpt

|

||||||

restart: always

|

restart: always

|

||||||

fastgpt:

|

fastgpt:

|

||||||

container_name: fastgpt

|

container_name: fastgpt

|

||||||

image: ghcr.io/labring/fastgpt:v4.8.23-fix # git

|

image: ghcr.io/labring/fastgpt:v4.9.1-fix2 # git

|

||||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt:v4.8.23-fix # 阿里云

|

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt:v4.9.1-fix2 # 阿里云

|

||||||

ports:

|

ports:

|

||||||

- 3000:3000

|

- 3000:3000

|

||||||

networks:

|

networks:

|

||||||

@@ -175,14 +175,13 @@ services:

|

|||||||

|

|

||||||

# AI Proxy

|

# AI Proxy

|

||||||

aiproxy:

|

aiproxy:

|

||||||

image: 'ghcr.io/labring/sealos-aiproxy-service:latest'

|

image: ghcr.io/labring/aiproxy:v0.1.3

|

||||||

|

# image: registry.cn-hangzhou.aliyuncs.com/labring/aiproxy:v0.1.3 # 阿里云

|

||||||

container_name: aiproxy

|

container_name: aiproxy

|

||||||

restart: unless-stopped

|

restart: unless-stopped

|

||||||

depends_on:

|

depends_on:

|

||||||

aiproxy_pg:

|

aiproxy_pg:

|

||||||

condition: service_healthy

|

condition: service_healthy

|

||||||

ports:

|

|

||||||

- '3002:3000'

|

|

||||||

networks:

|

networks:

|

||||||

- fastgpt

|

- fastgpt

|

||||||

environment:

|

environment:

|

||||||

@@ -193,7 +192,7 @@ services:

|

|||||||

# 数据库连接地址

|

# 数据库连接地址

|

||||||

- SQL_DSN=postgres://postgres:aiproxy@aiproxy_pg:5432/aiproxy

|

- SQL_DSN=postgres://postgres:aiproxy@aiproxy_pg:5432/aiproxy

|

||||||

# 最大重试次数

|

# 最大重试次数

|

||||||

- RetryTimes=3

|

- RETRY_TIMES=3

|

||||||

# 不需要计费

|

# 不需要计费

|

||||||

- BILLING_ENABLED=false

|

- BILLING_ENABLED=false

|

||||||

# 不需要严格检测模型

|

# 不需要严格检测模型

|

||||||

@@ -204,8 +203,8 @@ services:

|

|||||||

timeout: 5s

|

timeout: 5s

|

||||||

retries: 10

|

retries: 10

|

||||||

aiproxy_pg:

|

aiproxy_pg:

|

||||||

# image: pgvector/pgvector:0.8.0-pg15 # docker hub

|

image: pgvector/pgvector:0.8.0-pg15 # docker hub

|

||||||

image: registry.cn-hangzhou.aliyuncs.com/fastgpt/pgvector:v0.8.0-pg15 # 阿里云

|

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/pgvector:v0.8.0-pg15 # 阿里云

|

||||||

restart: unless-stopped

|

restart: unless-stopped

|

||||||

container_name: aiproxy_pg

|

container_name: aiproxy_pg

|

||||||

volumes:

|

volumes:

|

||||||

|

|||||||

@@ -28,8 +28,8 @@ services:

|

|||||||

# image: mongo:4.4.29 # cpu不支持AVX时候使用

|

# image: mongo:4.4.29 # cpu不支持AVX时候使用

|

||||||

container_name: mongo

|

container_name: mongo

|

||||||

restart: always

|

restart: always

|

||||||

ports:

|

# ports:

|

||||||

- 27017:27017

|

# - 27017:27017

|

||||||

networks:

|

networks:

|

||||||

- fastgpt

|

- fastgpt

|

||||||

command: mongod --keyFile /data/mongodb.key --replSet rs0

|

command: mongod --keyFile /data/mongodb.key --replSet rs0

|

||||||

@@ -58,7 +58,7 @@ services:

|

|||||||

exec docker-entrypoint.sh "$$@" &

|

exec docker-entrypoint.sh "$$@" &

|

||||||

|

|

||||||

# 等待MongoDB服务启动

|

# 等待MongoDB服务启动

|

||||||

until mongo -u myusername -p mypassword --authenticationDatabase admin --eval "print('waited for connection')" > /dev/null 2>&1; do

|

until mongo -u myusername -p mypassword --authenticationDatabase admin --eval "print('waited for connection')"; do

|

||||||

echo "Waiting for MongoDB to start..."

|

echo "Waiting for MongoDB to start..."

|

||||||

sleep 2

|

sleep 2

|

||||||

done

|

done

|

||||||

@@ -72,15 +72,15 @@ services:

|

|||||||

# fastgpt

|

# fastgpt

|

||||||

sandbox:

|

sandbox:

|

||||||

container_name: sandbox

|

container_name: sandbox

|

||||||

image: ghcr.io/labring/fastgpt-sandbox:v4.8.23-fix # git

|

image: ghcr.io/labring/fastgpt-sandbox:v4.9.1-fix2 # git

|

||||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt-sandbox:v4.8.23-fix # 阿里云

|

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt-sandbox:v4.9.1-fix2 # 阿里云

|

||||||

networks:

|

networks:

|

||||||

- fastgpt

|

- fastgpt

|

||||||

restart: always

|

restart: always

|

||||||

fastgpt:

|

fastgpt:

|

||||||

container_name: fastgpt

|

container_name: fastgpt

|

||||||

image: ghcr.io/labring/fastgpt:v4.8.23-fix # git

|

image: ghcr.io/labring/fastgpt:v4.9.1-fix2 # git

|

||||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt:v4.8.23-fix # 阿里云

|

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt:v4.9.1-fix2 # 阿里云

|

||||||

ports:

|

ports:

|

||||||

- 3000:3000

|

- 3000:3000

|

||||||

networks:

|

networks:

|

||||||

@@ -132,14 +132,13 @@ services:

|

|||||||

|

|

||||||

# AI Proxy

|

# AI Proxy

|

||||||

aiproxy:

|

aiproxy:

|

||||||

image: 'ghcr.io/labring/sealos-aiproxy-service:latest'

|

image: ghcr.io/labring/aiproxy:v0.1.3

|

||||||

|

# image: registry.cn-hangzhou.aliyuncs.com/labring/aiproxy:v0.1.3 # 阿里云

|

||||||

container_name: aiproxy

|

container_name: aiproxy

|

||||||

restart: unless-stopped

|

restart: unless-stopped

|

||||||

depends_on:

|

depends_on:

|

||||||

aiproxy_pg:

|

aiproxy_pg:

|

||||||

condition: service_healthy

|

condition: service_healthy

|

||||||

ports:

|

|

||||||

- '3002:3000'

|

|

||||||

networks:

|

networks:

|

||||||

- fastgpt

|

- fastgpt

|

||||||

environment:

|

environment:

|

||||||

@@ -150,7 +149,7 @@ services:

|

|||||||

# 数据库连接地址

|

# 数据库连接地址

|

||||||

- SQL_DSN=postgres://postgres:aiproxy@aiproxy_pg:5432/aiproxy

|

- SQL_DSN=postgres://postgres:aiproxy@aiproxy_pg:5432/aiproxy

|

||||||

# 最大重试次数

|

# 最大重试次数

|

||||||

- RetryTimes=3

|

- RETRY_TIMES=3

|

||||||

# 不需要计费

|

# 不需要计费

|

||||||

- BILLING_ENABLED=false

|

- BILLING_ENABLED=false

|

||||||

# 不需要严格检测模型

|

# 不需要严格检测模型

|

||||||

@@ -161,8 +160,8 @@ services:

|

|||||||

timeout: 5s

|

timeout: 5s

|

||||||

retries: 10

|

retries: 10

|

||||||

aiproxy_pg:

|

aiproxy_pg:

|

||||||

# image: pgvector/pgvector:0.8.0-pg15 # docker hub

|

image: pgvector/pgvector:0.8.0-pg15 # docker hub

|

||||||

image: registry.cn-hangzhou.aliyuncs.com/fastgpt/pgvector:v0.8.0-pg15 # 阿里云

|

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/pgvector:v0.8.0-pg15 # 阿里云

|

||||||

restart: unless-stopped

|

restart: unless-stopped

|

||||||

container_name: aiproxy_pg

|

container_name: aiproxy_pg

|

||||||

volumes:

|

volumes:

|

||||||

|

|||||||

@@ -41,7 +41,7 @@ services:

|

|||||||

exec docker-entrypoint.sh "$$@" &

|

exec docker-entrypoint.sh "$$@" &

|

||||||

|

|

||||||

# 等待MongoDB服务启动

|

# 等待MongoDB服务启动

|

||||||

until mongo -u myusername -p mypassword --authenticationDatabase admin --eval "print('waited for connection')" > /dev/null 2>&1; do

|

until mongo -u myusername -p mypassword --authenticationDatabase admin --eval "print('waited for connection')"; do

|

||||||

echo "Waiting for MongoDB to start..."

|

echo "Waiting for MongoDB to start..."

|

||||||

sleep 2

|

sleep 2

|

||||||

done

|

done

|

||||||

@@ -53,15 +53,15 @@ services:

|

|||||||

wait $$!

|

wait $$!

|

||||||

sandbox:

|

sandbox:

|

||||||

container_name: sandbox

|

container_name: sandbox

|

||||||

image: ghcr.io/labring/fastgpt-sandbox:v4.8.23-fix # git

|

image: ghcr.io/labring/fastgpt-sandbox:v4.9.1-fix2 # git

|

||||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt-sandbox:v4.8.23-fix # 阿里云

|

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt-sandbox:v4.9.1-fix2 # 阿里云

|

||||||

networks:

|

networks:

|

||||||

- fastgpt

|

- fastgpt

|

||||||

restart: always

|

restart: always

|

||||||

fastgpt:

|

fastgpt:

|

||||||

container_name: fastgpt

|

container_name: fastgpt

|

||||||

image: ghcr.io/labring/fastgpt:v4.8.23-fix # git

|

image: ghcr.io/labring/fastgpt:v4.9.1-fix2 # git

|

||||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt:v4.8.23-fix # 阿里云

|

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt:v4.9.1-fix2 # 阿里云

|

||||||

ports:

|

ports:

|

||||||

- 3000:3000

|

- 3000:3000

|

||||||

networks:

|

networks:

|

||||||

@@ -113,14 +113,13 @@ services:

|

|||||||

|

|

||||||

# AI Proxy

|

# AI Proxy

|

||||||

aiproxy:

|

aiproxy:

|

||||||

image: 'ghcr.io/labring/sealos-aiproxy-service:latest'

|

image: ghcr.io/labring/aiproxy:v0.1.3

|

||||||

|

# image: registry.cn-hangzhou.aliyuncs.com/labring/aiproxy:v0.1.3 # 阿里云

|

||||||

container_name: aiproxy

|

container_name: aiproxy

|

||||||

restart: unless-stopped

|

restart: unless-stopped

|

||||||

depends_on:

|

depends_on:

|

||||||

aiproxy_pg:

|

aiproxy_pg:

|

||||||

condition: service_healthy

|

condition: service_healthy

|

||||||

ports:

|

|

||||||

- '3002:3000'

|

|

||||||

networks:

|

networks:

|

||||||

- fastgpt

|

- fastgpt

|

||||||

environment:

|

environment:

|

||||||

@@ -131,7 +130,7 @@ services:

|

|||||||

# 数据库连接地址

|

# 数据库连接地址

|

||||||

- SQL_DSN=postgres://postgres:aiproxy@aiproxy_pg:5432/aiproxy

|

- SQL_DSN=postgres://postgres:aiproxy@aiproxy_pg:5432/aiproxy

|

||||||

# 最大重试次数

|

# 最大重试次数

|

||||||

- RetryTimes=3

|

- RETRY_TIMES=3

|

||||||

# 不需要计费

|

# 不需要计费

|

||||||

- BILLING_ENABLED=false

|

- BILLING_ENABLED=false

|

||||||

# 不需要严格检测模型

|

# 不需要严格检测模型

|

||||||

@@ -142,8 +141,8 @@ services:

|

|||||||

timeout: 5s

|

timeout: 5s

|

||||||

retries: 10

|

retries: 10

|

||||||

aiproxy_pg:

|

aiproxy_pg:

|

||||||

# image: pgvector/pgvector:0.8.0-pg15 # docker hub

|

image: pgvector/pgvector:0.8.0-pg15 # docker hub

|

||||||

image: registry.cn-hangzhou.aliyuncs.com/fastgpt/pgvector:v0.8.0-pg15 # 阿里云

|

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/pgvector:v0.8.0-pg15 # 阿里云

|

||||||

restart: unless-stopped

|

restart: unless-stopped

|

||||||

container_name: aiproxy_pg

|

container_name: aiproxy_pg

|

||||||

volumes:

|

volumes:

|

||||||

|

|||||||

BIN

docSite/assets/imgs/Ollama-aiproxy1.png

Normal file

|

After Width: | Height: | Size: 68 KiB |

BIN

docSite/assets/imgs/Ollama-aiproxy2.png

Normal file

|

After Width: | Height: | Size: 9.0 KiB |

BIN

docSite/assets/imgs/Ollama-aiproxy3.png

Normal file

|

After Width: | Height: | Size: 179 KiB |

BIN

docSite/assets/imgs/Ollama-direct1.png

Normal file

|

After Width: | Height: | Size: 72 KiB |

BIN

docSite/assets/imgs/Ollama-models1.png

Normal file

|

After Width: | Height: | Size: 20 KiB |

BIN

docSite/assets/imgs/Ollama-models2.png

Normal file

|

After Width: | Height: | Size: 138 KiB |

BIN

docSite/assets/imgs/Ollama-models3.png

Normal file

|

After Width: | Height: | Size: 122 KiB |

BIN

docSite/assets/imgs/Ollama-models4.png

Normal file

|

After Width: | Height: | Size: 124 KiB |

BIN

docSite/assets/imgs/Ollama-oneapi1.png

Normal file

|

After Width: | Height: | Size: 94 KiB |

BIN

docSite/assets/imgs/Ollama-oneapi2.png

Normal file

|

After Width: | Height: | Size: 57 KiB |

BIN

docSite/assets/imgs/Ollama-oneapi3 .png

Normal file

|

After Width: | Height: | Size: 76 KiB |

BIN

docSite/assets/imgs/Ollama-pull.png

Normal file

|

After Width: | Height: | Size: 26 KiB |

|

After Width: | Height: | Size: 170 KiB |

|

After Width: | Height: | Size: 102 KiB |

|

After Width: | Height: | Size: 70 KiB |

|

After Width: | Height: | Size: 89 KiB |

|

After Width: | Height: | Size: 87 KiB |

@@ -44,7 +44,7 @@ weight: 707

|

|||||||

|

|

||||||

#### 1. 申请 Sealos AI proxy API Key

|

#### 1. 申请 Sealos AI proxy API Key

|

||||||

|

|

||||||

[点击打开 Sealos Pdf parser 官网](https://cloud.sealos.run/?uid=fnWRt09fZP&openapp=system-aiproxy),并进行对应 API Key 的申请。

|

[点击打开 Sealos Pdf parser 官网](https://hzh.sealos.run/?uid=fnWRt09fZP&openapp=system-aiproxy),并进行对应 API Key 的申请。

|

||||||

|

|

||||||

#### 2. 修改 FastGPT 配置文件

|

#### 2. 修改 FastGPT 配置文件

|

||||||

|

|

||||||

|

|||||||

@@ -24,10 +24,9 @@ PDF 是一个相对复杂的文件格式,在 FastGPT 内置的 pdf 解析器

|

|||||||

这里介绍快速 Docker 安装的方法:

|

这里介绍快速 Docker 安装的方法:

|

||||||

|

|

||||||

```dockerfile

|

```dockerfile

|

||||||

docker pull crpi-h3snc261q1dosroc.cn-hangzhou.personal.cr.aliyuncs.com/marker11/marker_images:latest

|

docker pull crpi-h3snc261q1dosroc.cn-hangzhou.personal.cr.aliyuncs.com/marker11/marker_images:v0.2

|

||||||

docker run --gpus all -itd -p 7231:7231 --name model_pdf_v1 crpi-h3snc261q1dosroc.cn-hangzhou.personal.cr.aliyuncs.com/marker11/marker_images:latest

|

docker run --gpus all -itd -p 7231:7232 --name model_pdf_v2 -e PROCESSES_PER_GPU="2" crpi-h3snc261q1dosroc.cn-hangzhou.personal.cr.aliyuncs.com/marker11/marker_images:v0.2

|

||||||

```

|

```

|

||||||

|

|

||||||

### 2. 添加 FastGPT 文件配置

|

### 2. 添加 FastGPT 文件配置

|

||||||

|

|

||||||

```json

|

```json

|

||||||

@@ -36,7 +35,7 @@ docker run --gpus all -itd -p 7231:7231 --name model_pdf_v1 crpi-h3snc261q1dosro

|

|||||||

"systemEnv": {

|

"systemEnv": {

|

||||||

xxx

|

xxx

|

||||||

"customPdfParse": {

|

"customPdfParse": {

|

||||||

"url": "http://xxxx.com/v1/parse/file", // 自定义 PDF 解析服务地址

|

"url": "http://xxxx.com/v2/parse/file", // 自定义 PDF 解析服务地址 marker v0.2

|

||||||

"key": "", // 自定义 PDF 解析服务密钥

|

"key": "", // 自定义 PDF 解析服务密钥

|

||||||

"doc2xKey": "", // doc2x 服务密钥

|

"doc2xKey": "", // doc2x 服务密钥

|

||||||

"price": 0 // PDF 解析服务价格

|

"price": 0 // PDF 解析服务价格

|

||||||

@@ -80,4 +79,25 @@ docker run --gpus all -itd -p 7231:7231 --name model_pdf_v1 crpi-h3snc261q1dosro

|

|||||||

|

|

||||||

上图是分块后的结果,下图是 pdf 原文。整体图片、公式、表格都可以提取出来,效果还是杠杠的。

|

上图是分块后的结果,下图是 pdf 原文。整体图片、公式、表格都可以提取出来,效果还是杠杠的。

|

||||||

|

|

||||||

不过要注意的是,[Marker](https://github.com/VikParuchuri/marker) 的协议是`GPL-3.0 license`,请在遵守协议的前提下使用。

|

不过要注意的是,[Marker](https://github.com/VikParuchuri/marker) 的协议是`GPL-3.0 license`,请在遵守协议的前提下使用。

|

||||||

|

|

||||||

|

## Using the legacy Marker version

|

||||||

|

|

||||||

|

For FastGPT versions before V4.9.0, you can try the Marker parsing service as follows.

|

||||||

|

|

||||||

|

Install and run the Marker service:

|

||||||

|

|

||||||

|

```dockerfile

|

||||||

|

docker pull crpi-h3snc261q1dosroc.cn-hangzhou.personal.cr.aliyuncs.com/marker11/marker_images:v0.1

|

||||||

|

docker run --gpus all -itd -p 7231:7231 --name model_pdf_v1 -e PROCESSES_PER_GPU="2" crpi-h3snc261q1dosroc.cn-hangzhou.personal.cr.aliyuncs.com/marker11/marker_images:v0.1

|

||||||

|

```

|

||||||

|

|

||||||

|

Then update the FastGPT environment variables:

|

||||||

|

|

||||||

|

```

|

||||||

|

CUSTOM_READ_FILE_URL=http://xxxx.com/v1/parse/file

|

||||||

|

CUSTOM_READ_FILE_EXTENSION=pdf

|

||||||

|

```

|

||||||

|

|

||||||

|

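* CUSTOM_READ_FILE_URL - address of the custom parsing service. Change the host to the address where the parsing service is reachable; the path must stay unchanged.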

|

||||||

|

* CUSTOM_READ_FILE_EXTENSION - supported file extensions; separate multiple file types with commas.

|

||||||

184

docSite/content/zh-cn/docs/development/custom-models/ollama.md

Normal file

@@ -0,0 +1,184 @@

|

|||||||

|

---

|

||||||

|

title: 'Connect local models with Ollama'

|

||||||

|

description: 'Deploy your own models with Ollama'

|

||||||

|

icon: 'api'

|

||||||

|

draft: false

|

||||||

|

toc: true

|

||||||

|

weight: 950

|

||||||

|

---

|

||||||

|

|

||||||

|

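[Ollama](https://ollama.com/) is an open-source tool for deploying large AI models. It focuses on simplifying the deployment and use of large language models and supports downloading and running a wide range of models with a single command.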

|

||||||

|

|

||||||

|

## 安装 Ollama

|

||||||

|

|

||||||

|

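Ollama supports several installation methods, but deploying it from the Docker image is recommended. If you install Ollama directly on a personal machine, you then have to solve how the FastGPT container in Docker reaches Ollama on the host, which is more troublesome.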

|

||||||

|

|

||||||

|

### Docker installation (recommended)

|

||||||

|

|

||||||

|

You can use the official Ollama Docker image to install and start the Ollama service in one step (make sure Docker is already installed on your machine):

|

||||||

|

|

||||||

|

```bash

|

||||||

|

docker pull ollama/ollama

|

||||||

|

docker run --rm -d --name ollama -p 11434:11434 ollama/ollama

|

||||||

|

```

|

||||||

|

|

||||||

|

If FastGPT is deployed in Docker, start the Ollama container on the same network as the FastGPT container; otherwise FastGPT may be unable to reach it:

|

||||||

|

|

||||||

|

```bash

|

||||||

|

docker run --rm -d --name ollama --network <your FastGPT container's network> -p 11434:11434 ollama/ollama

|

||||||

|

```
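The network placeholder in the command above has to be filled in with a real Docker network name. A minimal sketch, assuming the FastGPT container is named `fastgpt` (as in the compose files in this diff); `ollama_run_cmd` is a hypothetical helper that only prints the command so it can be reviewed before running:

```bash
# Hypothetical helper: print the docker run command for a given network name.
ollama_run_cmd() {
  network="$1"
  if [ -z "$network" ]; then
    echo "usage: ollama_run_cmd <network>" >&2
    return 1
  fi
  printf 'docker run --rm -d --name ollama --network %s -p 11434:11434 ollama/ollama\n' "$network"
}

# List the network(s) the FastGPT container is attached to (container name assumed):
#   docker inspect -f '{{range $k, $v := .NetworkSettings.Networks}}{{$k}} {{end}}' fastgpt
# Then print the command for that network and run it once it looks right:
#   ollama_run_cmd <network-name>
```

Printing rather than executing keeps the sketch safe to try on a machine where an `ollama` container already exists.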

|

||||||

|

|

||||||

|

### Host installation

|

||||||

|

|

||||||

|

If you would rather not use Docker, you can install Ollama directly on the host. Some options are listed below.

|

||||||

|

|

||||||

|

#### macOS

|

||||||

|

|

||||||

|

If you are on macOS and already have the Homebrew package manager installed, install Ollama with:

|

||||||

|

|

||||||

|

```bash

|

||||||

|

brew install ollama

|

||||||

|

ollama serve # start the service once installation finishes

|

||||||

|

```

|

||||||

|

|

||||||

|

#### Linux

|

||||||

|

|

||||||

|

On Linux you can install Ollama with the official install script. On Ubuntu, for example, run the following in a terminal:

|

||||||

|

|

||||||

|

```bash

|

||||||

|

curl -fsSL https://ollama.com/install.sh | sh # downloads the install script from the official site and runs it

|

||||||

|

ollama serve # after installation, start the service the same way

|

||||||

|

```

|

||||||

|

|

||||||

|

#### Windows

|

||||||

|

|

||||||

|

On Windows, download the Windows installer from the [Ollama website](https://ollama.com/), run it, and follow the setup wizard. Once installed, start the service from Command Prompt or PowerShell:

|

||||||

|

|

||||||

|

```bash

|

||||||

|

ollama serve # once the service is running, open http://localhost:11434 in a browser to verify that Ollama was installed successfully

|

||||||

|

```

|

||||||

|

|

||||||

|

#### Additional notes

|

||||||

|

|

||||||

|

If you run Ollama as a host application rather than from the image, make sure it listens on 0.0.0.0.

|

||||||

|

|

||||||

|

##### 1. Linux

|

||||||

|

|

||||||

|

If Ollama runs as a systemd service, open a terminal and edit its service file with `sudo systemctl edit ollama.service`, adding `Environment="OLLAMA_HOST=0.0.0.0"` under the `[Service]` section. Save and exit the editor, then run `sudo systemctl daemon-reload` and `sudo systemctl restart ollama` to apply the change.
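For reference, `systemctl edit` stores the change as a drop-in file next to the unit. The sketch below writes an equivalent drop-in by hand; `write_ollama_override` is a hypothetical helper, and the directory is a parameter so it can be tried in a scratch directory before touching `/etc` (the default path assumes the unit is named `ollama.service`):

```bash
# Hypothetical helper: write a systemd drop-in that makes Ollama listen on all interfaces.
# $1 is the drop-in directory; the default assumes the unit is ollama.service.
write_ollama_override() {
  dropin_dir="${1:-/etc/systemd/system/ollama.service.d}"
  mkdir -p "$dropin_dir" || return 1
  cat > "$dropin_dir/override.conf" <<'EOF'
[Service]
Environment="OLLAMA_HOST=0.0.0.0"
EOF
}

# On a real host (needs root), reload and restart afterwards:
#   sudo systemctl daemon-reload
#   sudo systemctl restart ollama
```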

|

||||||

|

|

||||||

|

##### 2. macOS

|

||||||

|

|

||||||

|

Open a terminal, set the environment variable with `launchctl setenv OLLAMA_HOST "0.0.0.0"`, then restart the Ollama application for the change to take effect.

|

||||||

|

|

||||||

|

##### 3. Windows

|

||||||

|

|

||||||

|

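Open "Edit the system environment variables" from the Start menu or the search bar. In the "System Properties" window click "Environment Variables", then under "System variables" click "New" and create a variable named `OLLAMA_HOST` with the value `0.0.0.0`. Click "OK" to save, then restart the Ollama application from the Start menu.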

|

||||||

|

|

||||||

|

### Pulling models with Ollama

|

||||||

|

|

||||||

|

A fresh Ollama install has no models locally, so you need to pull the ones you want yourself:

|

||||||

|

|

||||||

|

```bash

|

||||||

|

# For a Docker deployment, enter the container first: docker exec -it <Ollama container name> /bin/sh

|

||||||

|

ollama pull <model name>

|

||||||

|

```

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

### Testing connectivity

|

||||||

|

|

||||||

|

After installation, test the connection. Enter the FastGPT container and try to reach your Ollama service:

|

||||||

|

|

||||||

|

```bash

|

||||||

|

docker exec -it <FastGPT container name> /bin/sh

|

||||||

|

curl http://XXX.XXX.XXX.XXX:11434 # container deployment: "http://<container name>:<port>"; host install: "http://<host IP>:<port>" (the host IP must not be localhost)

|

||||||

|

```

|

||||||

|

|

||||||

|

If the response shows that your Ollama service is running, the two can communicate.
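The address rule and the success check can be sketched as two small shell functions. Both names are hypothetical, and the check assumes the Ollama root endpoint answers with the text "Ollama is running":

```bash
# Hypothetical helper: build the address FastGPT should use to reach Ollama.
# localhost-style hosts are rejected because, inside a container, they point
# at the container itself rather than at the Ollama service.
ollama_address() {
  host="$1"; port="${2:-11434}"
  case "$host" in
    ""|localhost|127.0.0.1)
      echo "host must be a container name or a host IP" >&2
      return 1 ;;
  esac
  printf 'http://%s:%s\n' "$host" "$port"
}

# Hypothetical helper: succeed if a response body looks like Ollama's root page.
is_ollama_response() {
  case "$1" in
    *"Ollama is running"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Usage inside the FastGPT container ("ollama" is the assumed container name):
#   body="$(curl -s "$(ollama_address ollama)")" && is_ollama_response "$body" && echo reachable
```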

|

||||||

|

|

||||||

|

## Connecting Ollama to FastGPT

|

||||||

|

|

||||||

|

### 1. List the models Ollama has

|

||||||

|

|

||||||

|

First, list the models available in Ollama:

|

||||||

|

|

||||||

|

```bash

|

||||||

|

# For a Docker deployment, enter the container first: docker exec -it <Ollama container name> /bin/sh

|

||||||

|

ollama ls

|

||||||

|

```
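Because the model ID entered in FastGPT later has to match the Ollama model name exactly, it can help to extract just the names. A minimal sketch, assuming `ollama ls` prints a header row followed by one model per line with the name in the first column; `ollama_model_names` is a hypothetical helper:

```bash
# Hypothetical helper: print only the first (NAME) column of `ollama ls`
# output read from stdin, skipping the header row and blank lines.
ollama_model_names() {
  awk 'NR > 1 && NF > 0 { print $1 }'
}

# Usage:
#   ollama ls | ollama_model_names
```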

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

### 2. Connecting through AI Proxy

|

||||||

|

|

||||||

|

If you deployed FastGPT with its default configuration files (see [here](/docs/development/docker.md)), it starts with AI Proxy by default.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

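Make sure FastGPT can reach the Ollama container directly. If it cannot, go back to the installation steps above ([jump there](#安装-ollama)) and check whether the host install is not listening on 0.0.0.0 or the containers are not on the same network.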

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

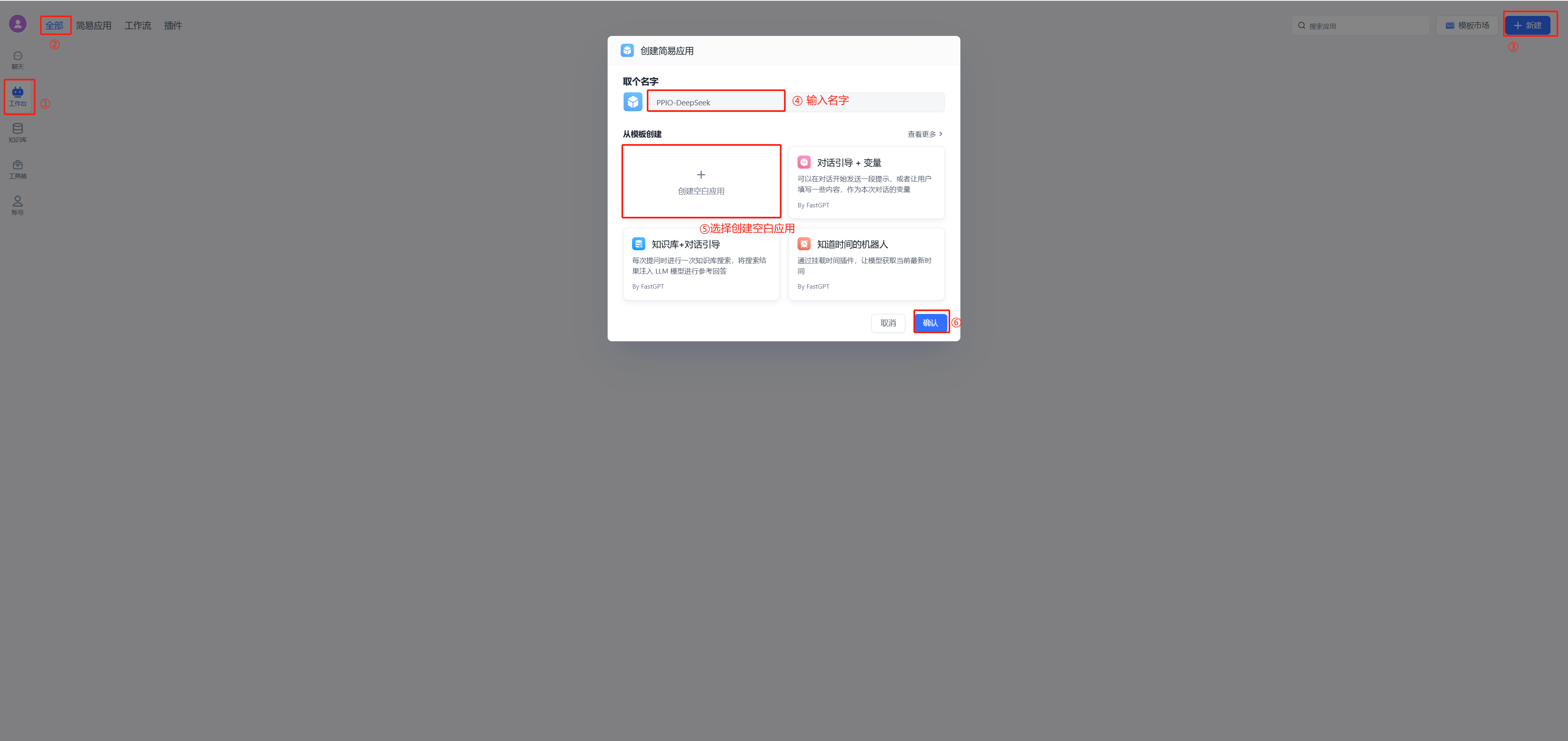

In FastGPT, go to Account -> Model Providers -> Model Configuration -> Add Model and add your model. The model ID must match the model name in OneAPI. See [here](/docs/development/modelConfig/intro.md) for details.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

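Run FastGPT and go to Account -> Model Providers -> Model Channels -> Add Channel. Choose Ollama as the channel type, add the models you pulled, and fill in the proxy address in the form http://<address>:<port>. For a container deployment the address is "http://<container name>:<port>"; for a host install it is "http://<host IP>:<port>", and the host IP must not be localhost.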

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

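Create an application in the workbench and select the model you added; the model name shown here is the alias you set at the time. Note: the same model cannot be added more than once, and the system uses the alias from the most recent addition.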

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

### 3. Connecting through OneAPI

|

||||||

|

|

||||||

|

If you want to use OneAPI, first pull the OneAPI image, then run it on the FastGPT container's network:

|

||||||

|

|

||||||

|

```bash

|

||||||

|

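# Pull the One API image (songquanpeng/one-api, published as justsong/one-api)

|

||||||

|

docker pull justsong/one-api

|

||||||

|

|

||||||

|

# Run the container on the FastGPT network with a name of your choice (3001 is an example host port)

|

||||||

|

docker run -d --network <FastGPT network> --name <container name> -p 3001:3000 justsong/one-api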

|

||||||

|

```

|

||||||

|

|

||||||

|

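Open the OneAPI page and add a new channel of type Ollama. Under models, enter the models from your Ollama instance; the names must match those in Ollama exactly. Then enter your Ollama proxy address below, by default http://<address>:<port>, without /v1. After adding the channel, run the channel test in OneAPI; a successful test means the channel works. This walkthrough uses a Docker deployment of Ollama; for a host install, change the proxy address to http://<host IP>:<port>.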

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

Once the channel is added, click Tokens, click Add Token, enter a name, and adjust the settings.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

Edit the docker-compose.yml used to deploy FastGPT: comment out the AI Proxy settings and set OPENAI_BASE_URL to your OneAPI address, by default http://<address>:<port>/v1 (the /v1 is required). Set KEY to your OneAPI token.
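A missing `/v1` suffix is an easy mistake when filling in `OPENAI_BASE_URL`. The sketch below normalizes an address before it is pasted into docker-compose.yml; `with_v1` is a hypothetical helper and the address in the usage line is a placeholder:

```bash
# Hypothetical helper: append /v1 to a base address unless it is already there.
with_v1() {
  base="${1%/}"   # drop one trailing slash, if any
  case "$base" in
    */v1) printf '%s\n' "$base" ;;
    *)    printf '%s/v1\n' "$base" ;;
  esac
}

# Usage (placeholder address):
#   with_v1 "http://<address>:<port>"
```

The same helper applies to the direct Ollama connection, which also needs the `/v1` suffix, whereas the channel proxy address in OneAPI or AI Proxy is entered without it.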

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

[Jump to step 5](#5-模型添加和使用) to add the model and use it.

|

||||||

|

|

||||||

|

### 4. Connecting directly

|

||||||

|

|

||||||

|

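If you want to use neither AI Proxy nor OneAPI, you can connect directly. Edit the docker-compose.yml used to deploy FastGPT and comment out the AI Proxy settings, using a configuration similar to the OneAPI one. Set OPENAI_BASE_URL to your Ollama address, by default http://<address>:<port>/v1; the /v1 is required. KEY can be any value, because Ollama has no authentication by default; if you have enabled authentication, fill in the real key. The remaining steps are the same as when adding Ollama through OneAPI: add your model in FastGPT and it is ready to use. This walkthrough uses a Docker deployment of Ollama; for a host install, change the address to http://<host IP>:<port>.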

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

完成后[点击这里](#5-模型添加和使用)进行模型添加并使用。

|

||||||

|

|

||||||

|

### 5. 模型添加和使用

|

||||||

|

|

||||||

|

在 FastGPT 中点击账号->模型提供商->模型配置->新增模型,添加自己的模型即可,添加模型时需要保证模型ID和 OneAPI 中的模型名称一致。

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

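In the workbench, create an app and select the model you added earlier. The model name shown here is the alias you set when adding it. Note: the same model cannot be added more than once; the system uses the alias from the most recent addition.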

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

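### 6. Additional notes

For the Ollama proxy addresses above: when Ollama is installed on the host, the address is `http://<host IP>:<port>`; when Ollama runs in a container, the address is `http://<container name>:<port>`.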
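The two address forms can be captured in a few lines. The sketch below is illustrative only: `ollama_proxy_address` is a hypothetical helper, the default port 11434 is Ollama's standard port, and rejecting `127.0.0.1` and `::1` alongside `localhost` is an added assumption that follows from the same reasoning (inside a container, loopback refers to the container itself).

```python
def ollama_proxy_address(deployment: str, host: str, port: int = 11434) -> str:
    """Build the proxy address for an Ollama channel.

    deployment: "container" (host is the Ollama container name) or
                "host" (host is the IP address of the machine running Ollama).
    """
    if deployment not in ("container", "host"):
        raise ValueError("deployment must be 'container' or 'host'")
    if deployment == "host" and host in ("localhost", "127.0.0.1", "::1"):
        # From inside the FastGPT container, loopback never reaches the host.
        raise ValueError("use the host machine's IP address, not a loopback address")
    return f"http://{host}:{port}"


if __name__ == "__main__":
    # "ollama" and 192.168.1.10 are placeholder values.
    print(ollama_proxy_address("container", "ollama"))
    print(ollama_proxy_address("host", "192.168.1.10"))
```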

|

||||||

@@ -56,7 +56,7 @@ weight: 707

|

|||||||

|

|

||||||

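### Zilliz Cloud version

|

### Zilliz Cloud version

|

||||||

|

|

||||||

A fully managed Milvus service that outperforms Milvus and provides an SLA. Click to use [Zilliz Cloud](https://zilliz.com.cn/).

|

Zilliz Cloud, built by the creators of Milvus, is a fully managed SaaS vector database service that outperforms Milvus and provides an SLA. Click to use [Zilliz Cloud](https://zilliz.com.cn/).

|

||||||

|

|

||||||

Because the vector store runs in the cloud, it uses no local resources and needs little attention.

|

Because the vector store runs in the cloud, it uses no local resources and needs little attention.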

|

||||||

|

|

||||||

|

|||||||

@@ -29,7 +29,7 @@ weight: 744

|

|||||||

|

|

||||||

{{% alert icon=" " context="info" %}}

|

{{% alert icon=" " context="info" %}}

|

||||||

- [SiliconCloud](https://cloud.siliconflow.cn/i/TR9Ym0c4): a platform for calling open-source models.

|

- [SiliconCloud](https://cloud.siliconflow.cn/i/TR9Ym0c4): a platform for calling open-source models.

|

||||||

- [Sealos AIProxy](https://cloud.sealos.run/?uid=fnWRt09fZP&openapp=system-aiproxy): proxies models from Chinese providers, so you do not need to apply for each API separately.

|

- [Sealos AIProxy](https://hzh.sealos.run/?uid=fnWRt09fZP&openapp=system-aiproxy): proxies models from Chinese providers, so you do not need to apply for each API separately.

|

||||||

{{% /alert %}}

|

{{% /alert %}}

|

||||||

|

|

||||||

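Once the models are configured in OneAPI, open the FastGPT page and enable the corresponding models.

|

Once the models are configured in OneAPI, open the FastGPT page and enable the corresponding models.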

|

||||||

|

|||||||

@@ -23,7 +23,7 @@ FastGPT 目前采用模型分离的部署方案,FastGPT 中只兼容 OpenAI

|

|||||||

### Sealos version

|

### Sealos version

|

||||||

|

|

||||||

* Beijing region: [Click to deploy OneAPI](https://hzh.sealos.run/?openapp=system-template%3FtemplateName%3Done-api)

|

* Beijing region: [Click to deploy OneAPI](https://hzh.sealos.run/?openapp=system-template%3FtemplateName%3Done-api)

|

||||||

* Singapore region (GPT available): [Click to deploy OneAPI](https://cloud.sealos.io/?openapp=system-template%3FtemplateName%3Done-api)

|

* Singapore region (GPT available): [Click to deploy OneAPI](https://cloud.sealos.io/?openapp=system-template%3FtemplateName%3Done-api&uid=fnWRt09fZP)

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|||||||

100

docSite/content/zh-cn/docs/development/modelConfig/ppio.md

Normal file

@@ -0,0 +1,100 @@

|

|||||||

|

---
title: 'Connecting models via the PPIO LLM API'
description: 'Connecting models via the PPIO LLM API'
icon: 'api'
draft: false
toc: true
weight: 747
---

|

||||||

|

|

||||||

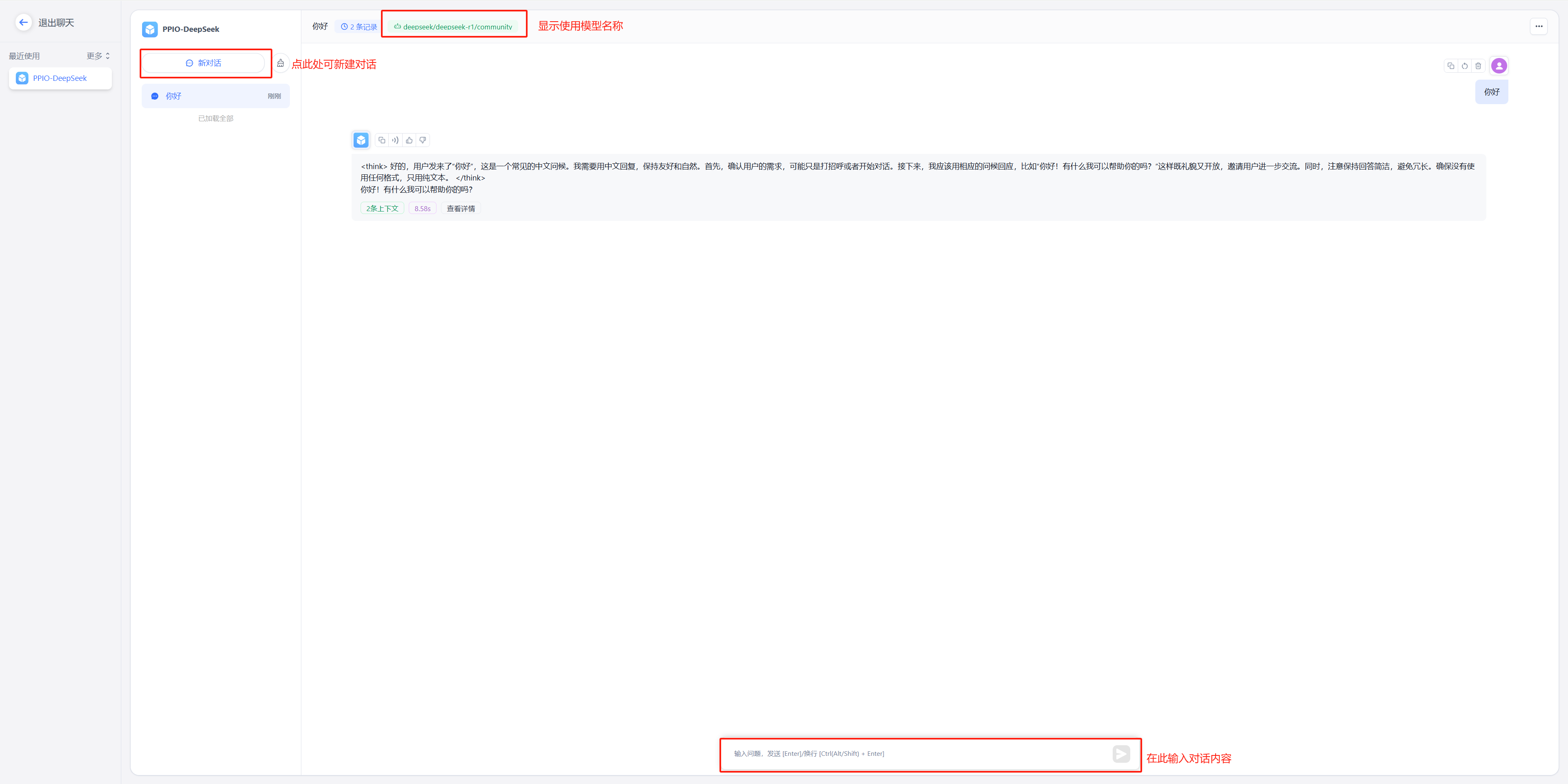

|

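FastGPT can also connect to models through the PPIO LLM API.

{{% alert context="warning" %}}
The following content is reproduced from [Connecting FastGPT to the PPIO LLM API](https://ppinfra.com/docs/third-party/fastgpt-use) and may not always be up to date.
{{% /alert %}}

FastGPT is a platform that turns the whole process of AI development, deployment, and use into visual operations. Developers do not need to study algorithms in depth, and users do not need to master complex technology; its one-stop service makes AI an easy-to-use tool.

PPIO Cloud provides a simple, easy-to-use API that lets developers call models such as DeepSeek.

- For developers: no need to rework your architecture; three interfaces cover every scenario from text generation to decision reasoning, so you can design AI workflows like assembling building blocks.
- For the ecosystem: resources adapt automatically to needs ranging from small applications to enterprise systems, so intelligence grows naturally with the business.

The tutorial below provides a complete integration guide (including key configuration) to help you quickly connect FastGPT to the PPIO API.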

|

||||||

|

|

||||||

|

## 1. 配置前置条件

|

||||||

|

|

||||||

|

(1) 获取 API 接口地址

|

||||||

|

|

||||||

|

固定为: `https://api.ppinfra.com/v3/openai/chat/completions`。

|

||||||

|

|

||||||

|

(2) 获取 【API 密钥】

|

||||||

|

|

||||||

|

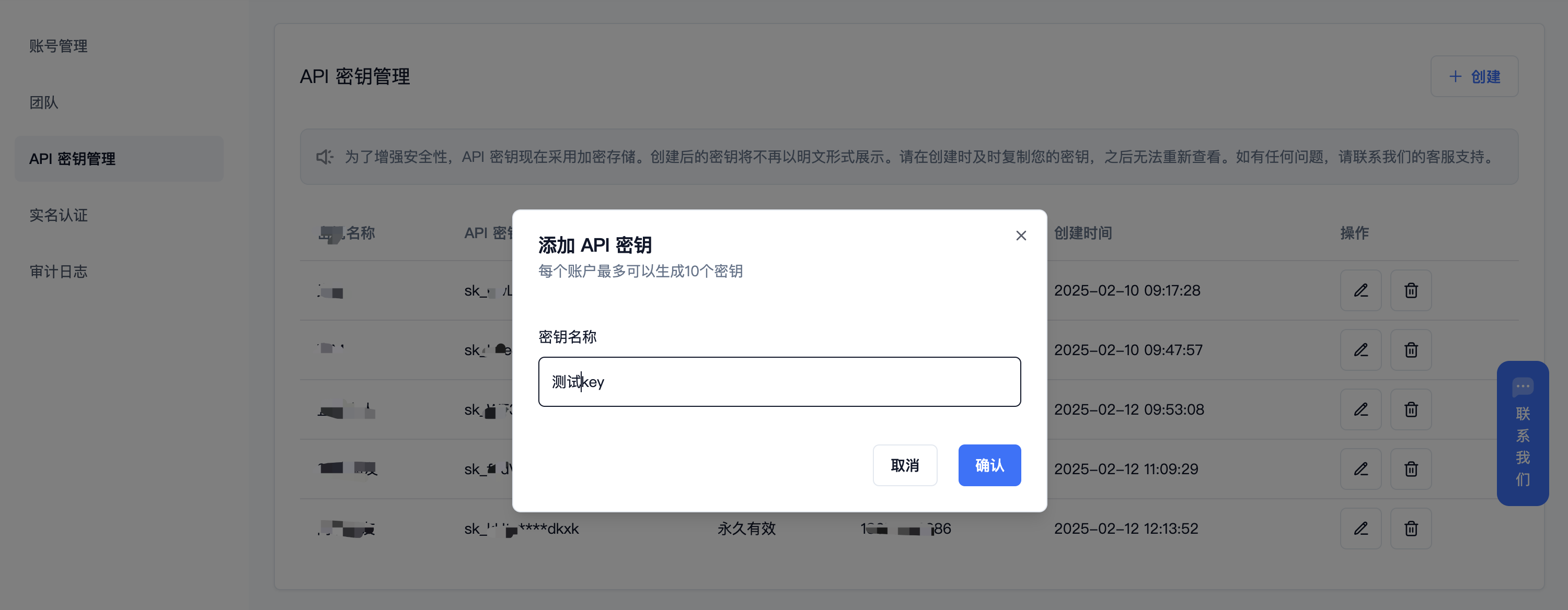

登录派欧云控制台 [API 秘钥管理](https://www.ppinfra.com/settings/key-management) 页面,点击创建按钮。

|

||||||

|

注册账号填写邀请码【VOJL20】得 50 代金券

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

(3) Generate and save the API key

{{% alert context="warning" %}}
The key is stored encrypted on the server, so save it when it is generated. If you lose it, delete it in the console and create a new one.
{{% /alert %}}

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

(4) Get the IDs of the models you want to use

DeepSeek series:

- DeepSeek R1: deepseek/deepseek-r1/community
- DeepSeek V3: deepseek/deepseek-v3/community

For other model IDs, maximum context lengths, and prices, see the [model list](https://ppinfra.com/model-api/pricing).
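The endpoint, key, and model ID gathered above can be checked together before touching FastGPT. The sketch below only builds the HTTP request; it assumes the endpoint accepts an OpenAI-style chat body and a `Bearer` authorization header, which the `/openai/chat/completions` path suggests but this page does not state explicitly. The helper name `build_chat_request` and the key `sk-example` are illustrative.

```python
import json
import urllib.request

PPIO_CHAT_URL = "https://api.ppinfra.com/v3/openai/chat/completions"


def build_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request.

    Assumption: the body follows the OpenAI chat format
    ({"model": ..., "messages": [...]}).
    """
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        PPIO_CHAT_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request("sk-example", "deepseek/deepseek-v3/community", "Hello")
    print(req.get_method(), req.full_url)
    # To actually send it with a real key: urllib.request.urlopen(req)
```

Building the request separately from sending it keeps the key and model ID easy to inspect without spending credit.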

|

||||||

|

|

||||||

|

## 2. Deploy the latest FastGPT locally

{{% alert context="warning" %}}
Use version v4.8.22 or later. For deployment, see: https://doc.tryfastgpt.ai/docs/development/intro/
{{% /alert %}}

|

||||||

|

|

||||||

|

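## 3. Model configuration (choose one of the two methods below)

(1) Connect PPIO models through OneAPI

Following the OneAPI documentation, modify FastGPT's environment variables. After generating a token in One API, FastGPT can send requests to One API by changing the base URL and key, and One API then forwards them to the different models. Change the two environment variables below. Be sure to include `v1`. If both services are on the same network, you can use the internal address.

- `OPENAI_BASE_URL=http://OneAPI-IP:OneAPI-PORT/v1`
- `CHAT_API_KEY=sk-UyVQcpQWMU7ChTVl74B562C28e3c46Fe8f16E6D8AeF8736e` (the key is the token provided by One API)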

|

||||||

|

|

||||||

|

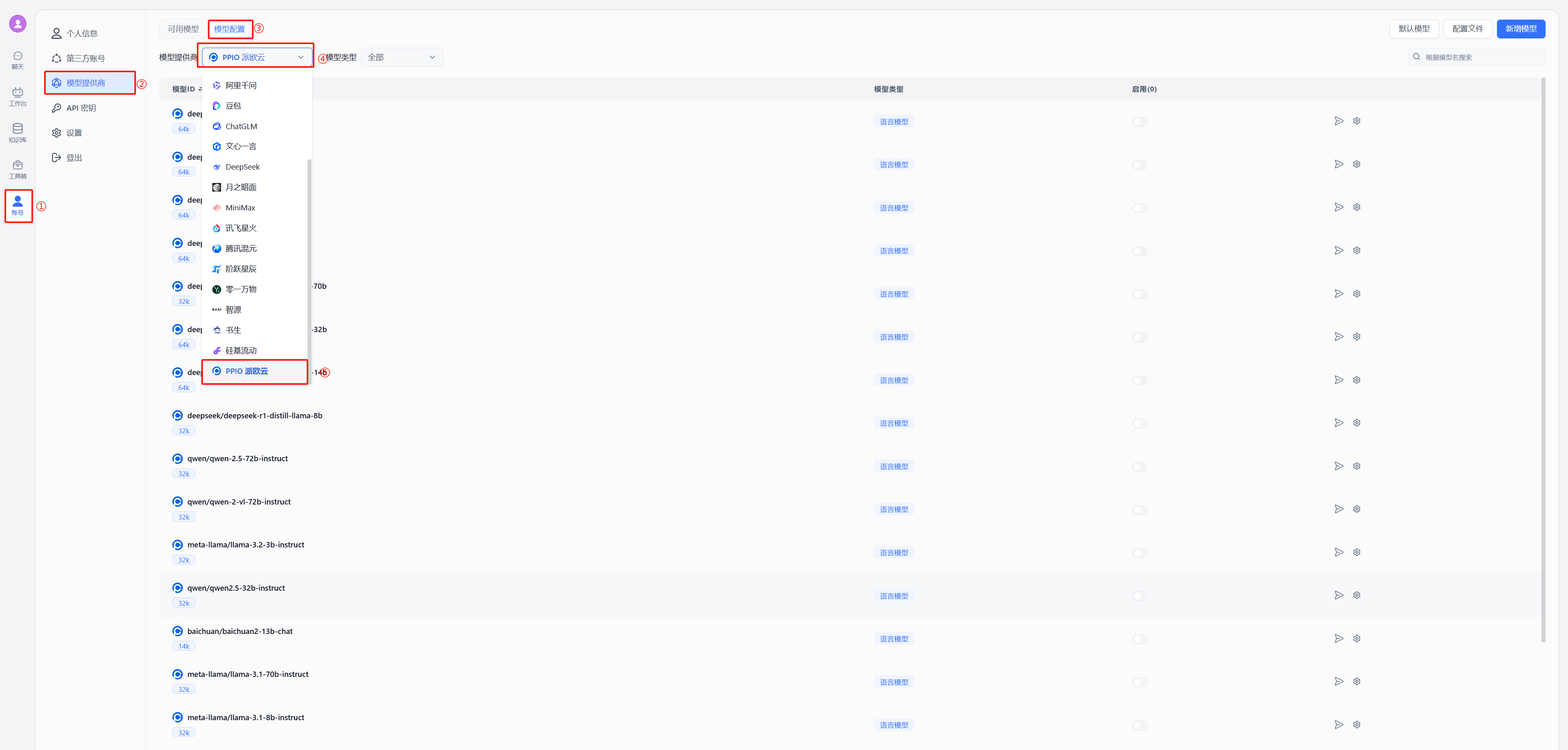

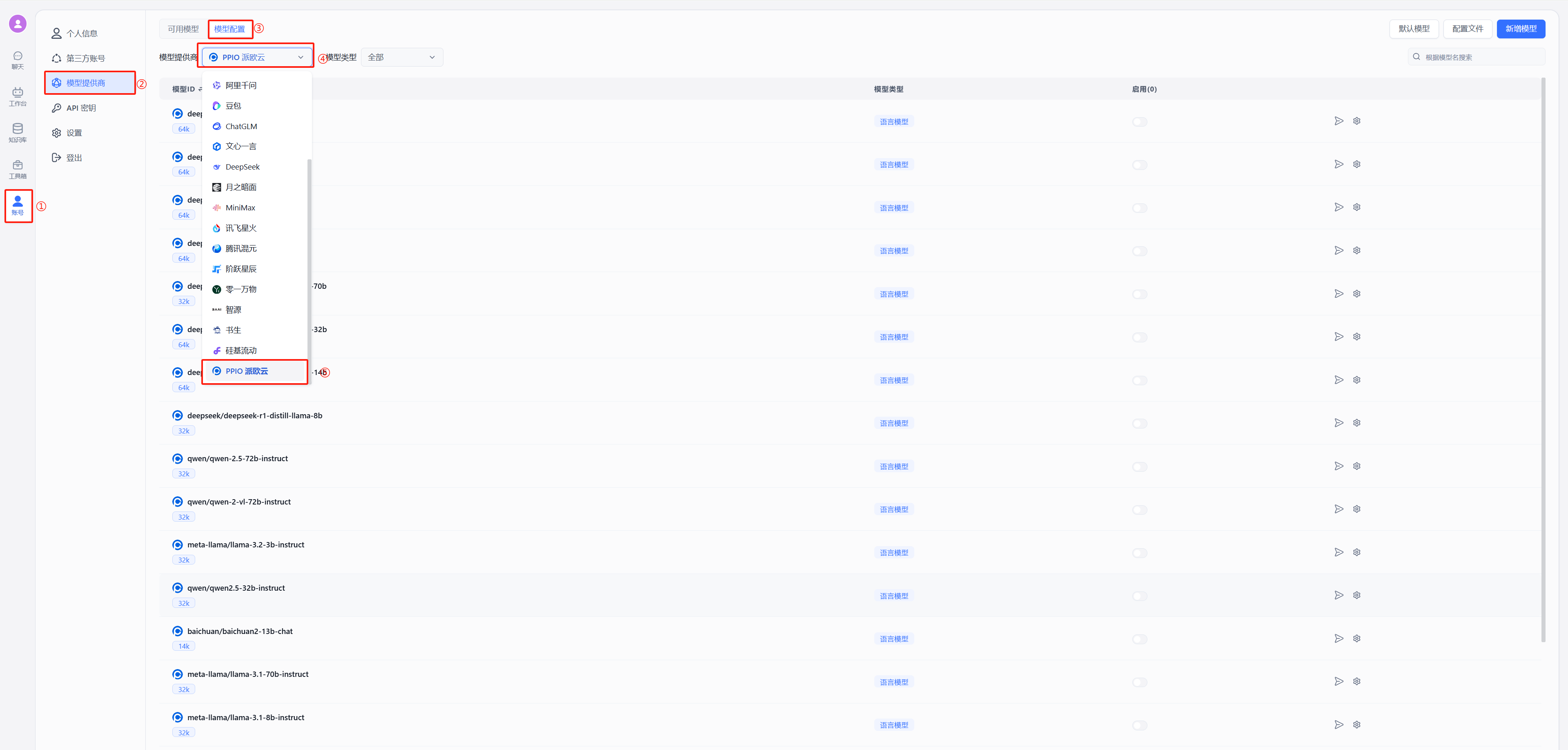

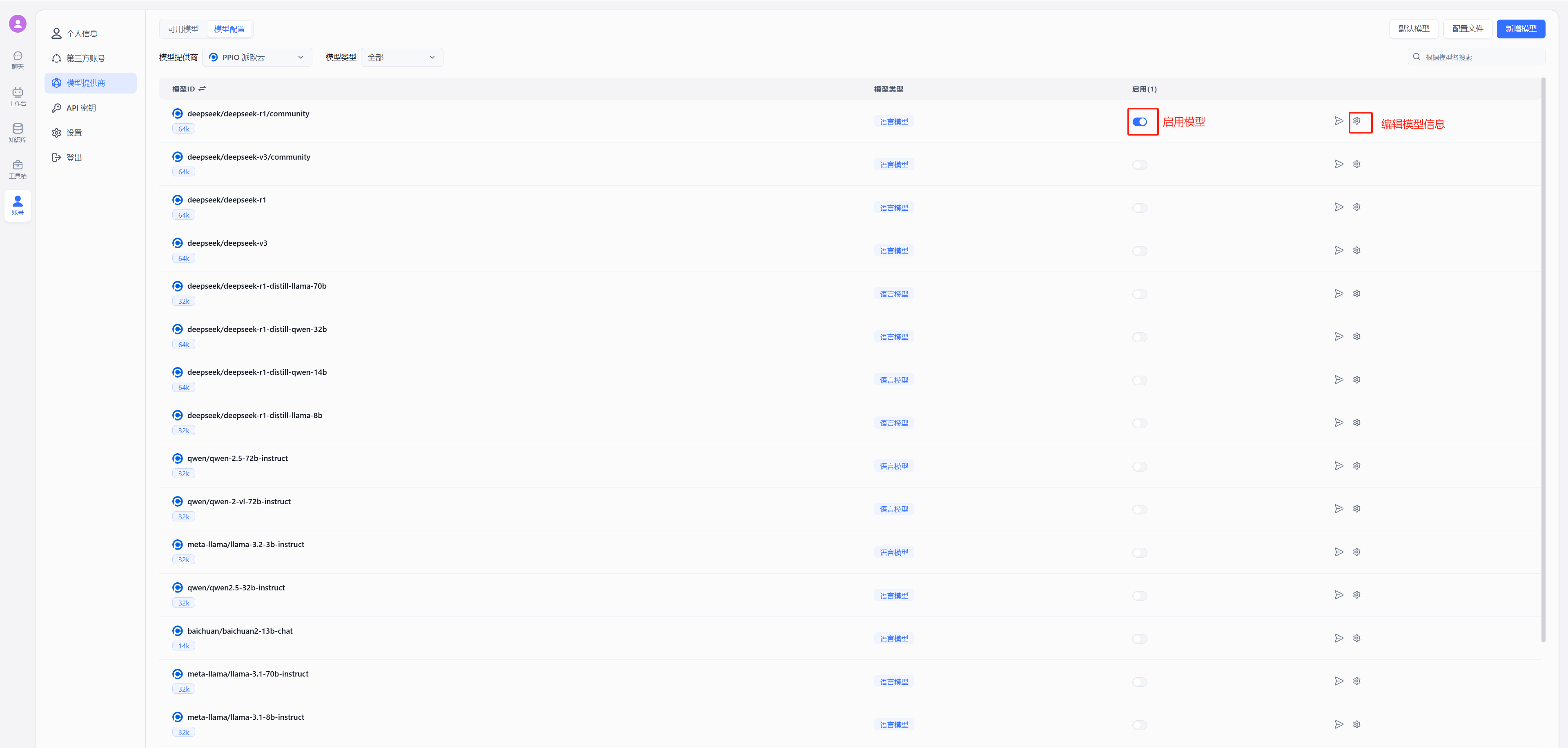

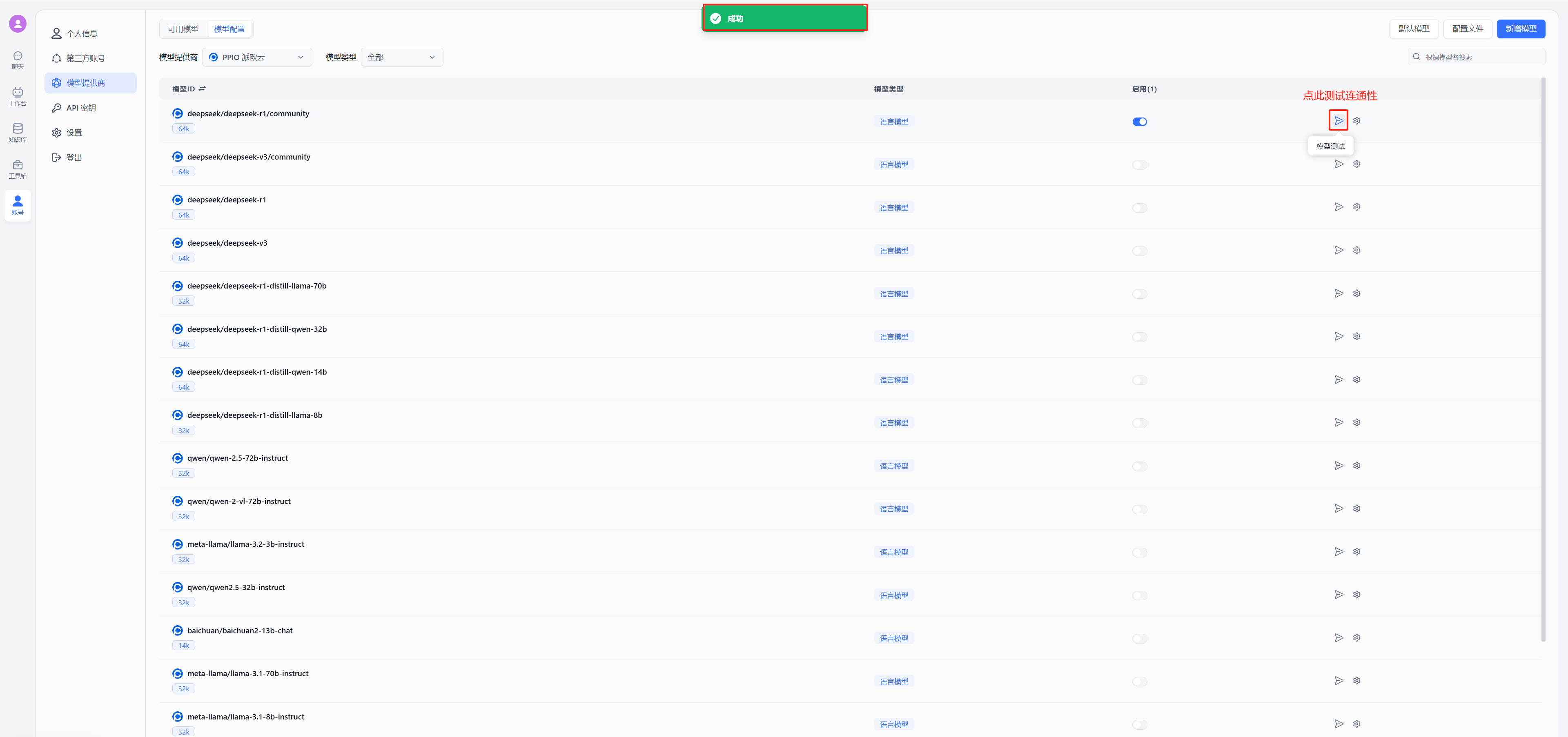

- After the change, restart FastGPT and select PPIO Cloud under Model Providers, as shown in the figure.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

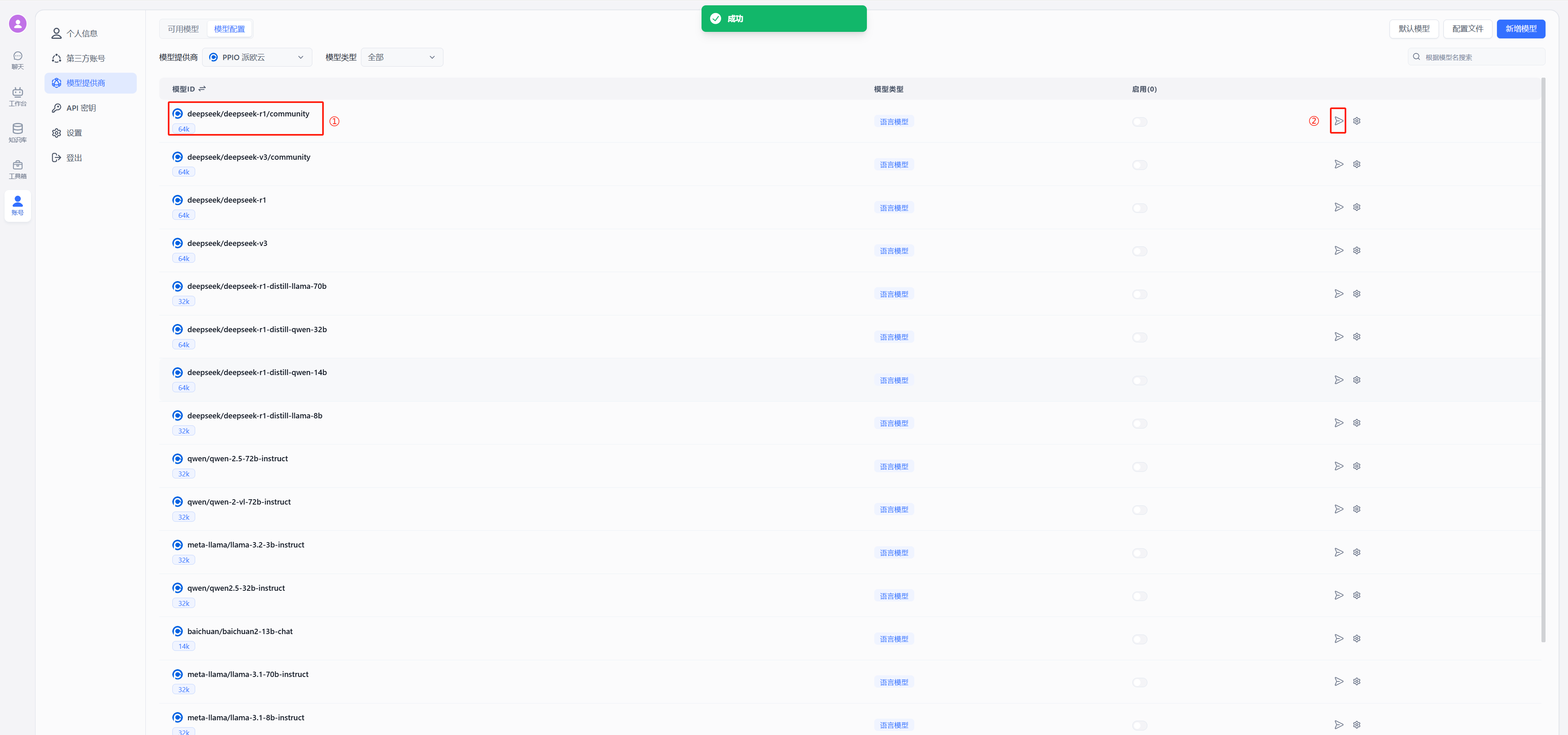

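- Test connectivity

Using DeepSeek as an example, select deepseek/deepseek-r1/community as the model and click position ② in the figure to run a connectivity test. A green success message confirms the connection works, and you can move on to configuring a conversation.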

|

||||||

|

|

||||||

|

|

||||||

|

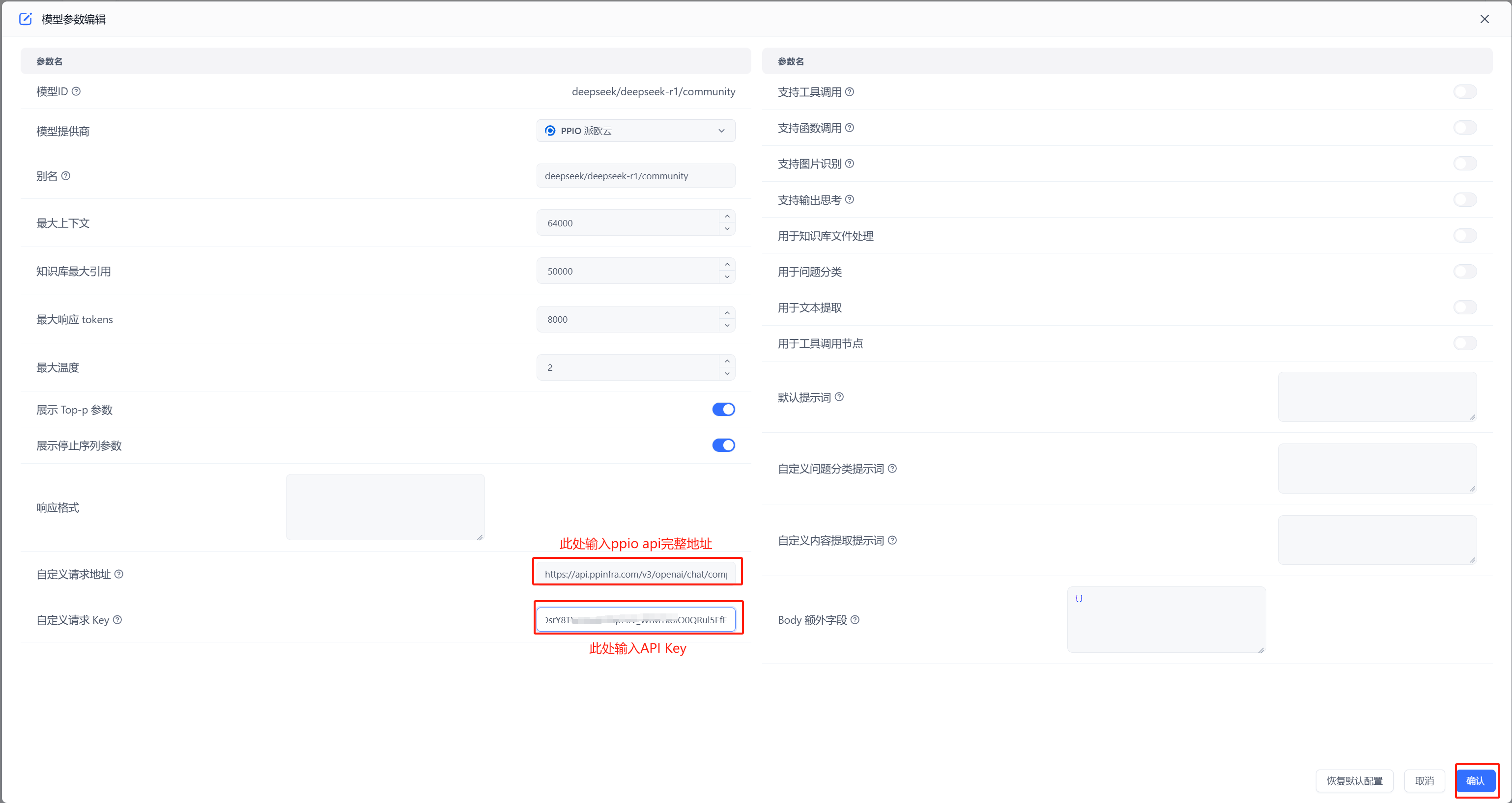

(2) Connect PPIO models without OneAPI

Select PPIO Cloud under Model Providers, as shown in the figure.

|

||||||

|

|

||||||

|

|

||||||

|

- Configure the model: enter `https://api.ppinfra.com/v3/openai/chat/completions` as the custom request URL.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

- Test connectivity

A green success message confirms the connection works, and you can move on to configuring a conversation.

|

||||||

|

|

||||||

|

## 4. Configure a conversation

(1) Create a new app in the workbench

(2) Start chatting

|

||||||

|

|

||||||

|

|

||||||

|

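## New PPIO benefits 🔥

After completing the tutorial, you unlock two benefits: 1. the combined performance of PPIO's high-speed channel and FastGPT; 2. the **new user invitation reward**: invite friends to register with your invitation code, and you and each friend receive a 50 yuan voucher.

🎁 For new users: register with the invitation code VOJL20 to receive a 50 yuan voucher immediately.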

|

||||||

@@ -11,8 +11,6 @@ weight: 853

|

|||||||

| --------------------- | --------------------- |

|

| --------------------- | --------------------- |

|

||||||

|  |  |

|

|  |  |

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

## Create a training order

|

## Create a training order

|

||||||

|

|

||||||

{{< tabs tabTotal="2" >}}

|

{{< tabs tabTotal="2" >}}

|

||||||

@@ -289,7 +287,7 @@ curl --location --request DELETE 'http://localhost:3000/api/core/dataset/delete?

|

|||||||

|

|

||||||

## Collections

|

## Collections

|

||||||

|

|

||||||

### Common creation parameters

|

### Common creation parameters (required reading)

|

||||||

|

|

||||||

**入参**

|

**入参**

|

||||||

|

|

||||||

@@ -300,8 +298,11 @@ curl --location --request DELETE 'http://localhost:3000/api/core/dataset/delete?

|

|||||||

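| trainingType | Data processing mode. chunk: split by text length; qa: extract question-answer pairs | ✅ |

|

| trainingType | Data processing mode. chunk: split by text length; qa: extract question-answer pairs | ✅ |

|

||||||

| autoIndexes | Whether to generate indexes automatically (commercial edition only) | |

|

| autoIndexes | Whether to generate indexes automatically (commercial edition only) | |

|

||||||

| imageIndex | Whether to generate image indexes automatically (commercial edition only) | |

|

| imageIndex | Whether to generate image indexes automatically (commercial edition only) | |

|

||||||

| chunkSize | Estimated chunk size | |

|

| chunkSettingMode | Chunk parameter mode. auto: system default parameters; custom: manually specified parameters | |

|

||||||

| chunkSplitter | Custom highest-priority split delimiter | |

|

| chunkSplitMode | Chunk split mode. size: split by length; char: split by character. Ignored when chunkSettingMode=auto. | |

|

||||||

|

| chunkSize | Chunk size, default 1500. Ignored when chunkSettingMode=auto. | |

|

||||||

|

| indexSize | Index size, default 512; must be smaller than the index model's maximum token count. Ignored when chunkSettingMode=auto. | |

|

||||||

|

| chunkSplitter | Custom highest-priority split delimiter. No further splitting is done unless the text exceeds the file-processing maximum context. Ignored when chunkSettingMode=auto. | |

|

||||||

| qaPrompt | Prompt for QA splitting | |

|

| qaPrompt | Prompt for QA splitting | |

|

||||||

| tags | Collection tags (array of strings) | |

|

| tags | Collection tags (array of strings) | |

|

||||||

| createTime | File creation time (Date / String) | |

|

| createTime | File creation time (Date / String) | |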
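The interaction between `chunkSettingMode` and the other chunk parameters in the new column can be sketched as a small payload builder. This is a client-side illustration only: `chunk_settings` is a hypothetical helper, and dropping the custom fields in `auto` mode mirrors the documented "ignored when chunkSettingMode=auto" rule rather than any behavior confirmed in FastGPT's code.

```python
ALLOWED_CUSTOM_KEYS = {"chunkSplitMode", "chunkSize", "indexSize", "chunkSplitter"}


def chunk_settings(mode: str = "auto", **custom) -> dict:
    """Return the chunk-related fields for a collection creation request."""
    if mode not in ("auto", "custom"):
        raise ValueError("chunkSettingMode must be 'auto' or 'custom'")
    unknown = set(custom) - ALLOWED_CUSTOM_KEYS
    if unknown:
        raise ValueError(f"unknown chunk parameters: {sorted(unknown)}")
    if mode == "auto":
        # Custom values would be ignored by the server, so leave them out.
        return {"chunkSettingMode": "auto"}
    if custom.get("chunkSplitMode", "size") not in ("size", "char"):
        raise ValueError("chunkSplitMode must be 'size' or 'char'")
    # Documented defaults: chunkSize 1500, indexSize 512.
    settings = {"chunkSettingMode": "custom", "chunkSize": 1500, "indexSize": 512}
    settings.update(custom)
    return settings


if __name__ == "__main__":
    payload = {"trainingType": "chunk", **chunk_settings("custom", chunkSize=800)}
    print(payload)
```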

|

||||||

@@ -389,9 +390,8 @@ curl --location --request POST 'http://localhost:3000/api/core/dataset/collectio

|

|||||||

"name":"测试训练",

|

"name":"测试训练",

|

||||||

|

|

||||||

"trainingType": "qa",

|

"trainingType": "qa",

|

||||||

"chunkSize":8000,

|

"chunkSettingMode": "auto",

|

||||||

"chunkSplitter":"",

|

"qaPrompt":"",

|

||||||

"qaPrompt":"11",

|

|

||||||

|

|

||||||

"metadata":{}

|

"metadata":{}

|

||||||

}'

|

}'

|

||||||

@@ -409,10 +409,6 @@ curl --location --request POST 'http://localhost:3000/api/core/dataset/collectio

|

|||||||

- parentId: 父级ID,不填则默认为根目录

|

- parentId: 父级ID,不填则默认为根目录

|

||||||

- name: 集合名称(必填)

|

- name: 集合名称(必填)

|

||||||

- metadata: 元数据(暂时没啥用)

|

- metadata: 元数据(暂时没啥用)

|

||||||

- trainingType: 训练模式(必填)

|

|

||||||

- chunkSize: 每个 chunk 的长度(可选). chunk模式:100~3000; qa模式: 4000~模型最大token(16k模型通常建议不超过10000)

|

|

||||||

- chunkSplitter: 自定义最高优先分割符号(可选)

|

|

||||||

- qaPrompt: qa拆分自定义提示词(可选)

|

|

||||||

{{% /alert %}}

|

{{% /alert %}}

|

||||||

|

|

||||||

{{< /markdownify >}}

|

{{< /markdownify >}}

|

||||||

@@ -462,8 +458,7 @@ curl --location --request POST 'http://localhost:3000/api/core/dataset/collectio

|

|||||||

"parentId": null,

|

"parentId": null,

|

||||||

|

|

||||||

"trainingType": "chunk",

|

"trainingType": "chunk",

|

||||||

"chunkSize":512,

|

"chunkSettingMode": "auto",

|

||||||

"chunkSplitter":"",

|

|

||||||

"qaPrompt":"",

|

"qaPrompt":"",

|

||||||

|

|

||||||

"metadata":{

|

"metadata":{

|

||||||

@@ -483,10 +478,6 @@ curl --location --request POST 'http://localhost:3000/api/core/dataset/collectio

|

|||||||

- datasetId: 知识库的ID(必填)

|

- datasetId: 知识库的ID(必填)

|

||||||

- parentId: 父级ID,不填则默认为根目录

|

- parentId: 父级ID,不填则默认为根目录

|

||||||

- metadata.webPageSelector: 网页选择器,用于指定网页中的哪个元素作为文本(可选)

|

- metadata.webPageSelector: 网页选择器,用于指定网页中的哪个元素作为文本(可选)

|

||||||

- trainingType:训练模式(必填)

|

|

||||||

- chunkSize: 每个 chunk 的长度(可选). chunk模式:100~3000; qa模式: 4000~模型最大token(16k模型通常建议不超过10000)

|

|

||||||

- chunkSplitter: 自定义最高优先分割符号(可选)

|

|

||||||

- qaPrompt: qa拆分自定义提示词(可选)

|

|

||||||

{{% /alert %}}

|

{{% /alert %}}

|

||||||

|

|

||||||

{{< /markdownify >}}

|

{{< /markdownify >}}

|

||||||

@@ -545,13 +536,7 @@ curl --location --request POST 'http://localhost:3000/api/core/dataset/collectio

|

|||||||

|

|

||||||

{{% alert icon=" " context="success" %}}

|

{{% alert icon=" " context="success" %}}

|

||||||

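- file: the file

|

- file: the file

|

||||||

- data: dataset-related information (passed as a serialized JSON string)

|

- data: dataset-related information (passed as a serialized JSON string); for the parameters, see "Common creation parameters" above

|

||||||

- datasetId: ID of the dataset (required)

|

|

||||||

- parentId: parent ID; defaults to the root directory if omitted

|

|

||||||

- trainingType: training mode (required)

|

|

||||||

- chunkSize: length of each chunk (optional). chunk mode: 100~3000; qa mode: 4000~the model's maximum token count (for 16k models, no more than 10000 is usually recommended)

|

|

||||||

- chunkSplitter: custom highest-priority split delimiter (optional)

|

|

||||||

- qaPrompt: custom prompt for QA splitting (optional)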

|

|

||||||

{{% /alert %}}

|

{{% /alert %}}

|

||||||

|

|

||||||

{{< /markdownify >}}

|

{{< /markdownify >}}

|

||||||

@@ -1063,10 +1048,12 @@ curl --location --request DELETE 'http://localhost:3000/api/core/dataset/collect

|

|||||||

|

|

||||||

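| Field | Type | Description | Required |

|

| Field | Type | Description | Required |

|

||||||

| --- | --- | --- | --- |

|

| --- | --- | --- | --- |

|

||||||

| defaultIndex | Boolean | Whether this is the default index | ✅ |

|

| type | String | Optional index type: default (default index); custom (custom index); summary (summary index); question (question index); image (image index) | |

|

||||||

| dataId | String | ID of the associated vector | ✅ |

|

| dataId | String | ID of the associated vector. When updating data, passing this ID triggers an incremental update instead of a full update | |

|

||||||

| text | String | Text content | ✅ |

|

| text | String | Text content | ✅ |

|

||||||

|

|

||||||

|

If `type` is omitted, the index defaults to a `custom` index, and a default index is also built from q/a. If a default index is passed in, no additional one is created.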
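The defaulting rule for `type` can be modeled in a few lines. This is an illustrative model of the rule as described, not FastGPT's implementation: the function name `normalize_indexes` is hypothetical, and joining `q` and `a` with a newline to form the default index text is an assumption.

```python
def normalize_indexes(q: str, a: str, indexes: list[dict]) -> list[dict]:
    """Apply the documented defaults to a list of index entries."""
    # Entries without a type are treated as custom indexes.
    result = [{**idx, "type": idx.get("type", "custom")} for idx in indexes]
    # A default index is added only if the caller did not supply one.
    if not any(idx["type"] == "default" for idx in result):
        default_text = f"{q}\n{a}".strip()  # assumed way of combining q and a
        result.insert(0, {"type": "default", "text": default_text})
    return result


if __name__ == "__main__":
    for entry in normalize_indexes("What is FastGPT?", "", [{"text": "FastGPT intro"}]):
        print(entry)
```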

|

||||||

|

|

||||||

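### Add data to a collection in bulk

|

### Add data to a collection in bulk

|

||||||

|

|

||||||

Note: at most 200 data items can be pushed per request.

|

Note: at most 200 data items can be pushed per request.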

|

||||||

@@ -1298,8 +1285,7 @@ curl --location --request GET 'http://localhost:3000/api/core/dataset/data/detai

|

|||||||

"chunkIndex": 0,

|

"chunkIndex": 0,

|

||||||

"indexes": [

|

"indexes": [

|

||||||

{

|

{

|

||||||

"defaultIndex": true,

|

"type": "default",

|

||||||

"type": "chunk",

|

|

||||||

"dataId": "3720083",

|

"dataId": "3720083",

|

||||||

"text": "N o . 2 0 2 2 1 2中 国 信 息 通 信 研 究 院京东探索研究院2022年 9月人工智能生成内容(AIGC)白皮书(2022 年)版权声明本白皮书版权属于中国信息通信研究院和京东探索研究院,并受法律保护。转载、摘编或利用其它方式使用本白皮书文字或者观点的,应注明“来源:中国信息通信研究院和京东探索研究院”。违反上述声明者,编者将追究其相关法律责任。前 言习近平总书记曾指出,“数字技术正以新理念、新业态、新模式全面融入人类经济、政治、文化、社会、生态文明建设各领域和全过程”。在当前数字世界和物理世界加速融合的大背景下,人工智能生成内容(Artificial Intelligence Generated Content,简称 AIGC)正在悄然引导着一场深刻的变革,重塑甚至颠覆数字内容的生产方式和消费模式,将极大地丰富人们的数字生活,是未来全面迈向数字文明新时代不可或缺的支撑力量。",

|

"text": "N o . 2 0 2 2 1 2中 国 信 息 通 信 研 究 院京东探索研究院2022年 9月人工智能生成内容(AIGC)白皮书(2022 年)版权声明本白皮书版权属于中国信息通信研究院和京东探索研究院,并受法律保护。转载、摘编或利用其它方式使用本白皮书文字或者观点的,应注明“来源:中国信息通信研究院和京东探索研究院”。违反上述声明者,编者将追究其相关法律责任。前 言习近平总书记曾指出,“数字技术正以新理念、新业态、新模式全面融入人类经济、政治、文化、社会、生态文明建设各领域和全过程”。在当前数字世界和物理世界加速融合的大背景下,人工智能生成内容(Artificial Intelligence Generated Content,简称 AIGC)正在悄然引导着一场深刻的变革,重塑甚至颠覆数字内容的生产方式和消费模式,将极大地丰富人们的数字生活,是未来全面迈向数字文明新时代不可或缺的支撑力量。",

|

||||||

"_id": "65abd4b29d1448617cba61dc"

|

"_id": "65abd4b29d1448617cba61dc"

|

||||||

@@ -1335,12 +1321,18 @@ curl --location --request PUT 'http://localhost:3000/api/core/dataset/data/updat

|

|||||||

"a":"sss",

|

"a":"sss",

|

||||||

"indexes":[

|

"indexes":[

|

||||||

{

|

{

|

||||||

"dataId": "xxx",

|

"dataId": "xxxx",

|

||||||

"defaultIndex":false,

|

"type": "default",

|

||||||

"text":"自定义索引1"

|

"text": "默认索引"

|

||||||

},

|

},

|

||||||

{

|

{

|

||||||

"text":"修改后的自定义索引2。(会删除原来的自定义索引2,并插入新的自定义索引2)"

|

"dataId": "xxx",

|

||||||

|

"type": "custom",

|

||||||

|

"text": "旧的自定义索引1"

|

||||||

|

},

|

||||||

|

{

|

||||||

|

"type":"custom",

|

||||||

|

"text":"新增的自定义索引"

|

||||||

}

|

}

|

||||||

]

|

]

|

||||||

}'

|

}'

|

||||||

|

|||||||

@@ -9,7 +9,7 @@ weight: 951

|

|||||||

|

|

||||||

## Log in to Sealos

|

## Log in to Sealos

|

||||||

|

|

||||||

[Sealos](https://cloud.sealos.io/)

|

[Sealos](https://cloud.sealos.io?uid=fnWRt09fZP)

|

||||||

|

|

||||||

## Create an app

|

## Create an app

|

||||||

|

|

||||||

|

|||||||

@@ -26,13 +26,13 @@ FastGPT 使用了 one-api 项目来管理模型池,其可以兼容 OpenAI 、A

|

|||||||

|

|

||||||

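The Singapore region's servers are outside China and can access OpenAI directly, but users in mainland China need a proxy to reach the Singapore region reliably. The international region is slightly more expensive. Click the button below to deploy 👇

|

The Singapore region's servers are outside China and can access OpenAI directly, but users in mainland China need a proxy to reach the Singapore region reliably. The international region is slightly more expensive. Click the button below to deploy 👇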

|

||||||

|

|

||||||

<a href="https://template.cloud.sealos.io/deploy?templateName=fastgpt" rel="external" target="_blank"><img src="https://cdn.jsdelivr.net/gh/labring-actions/templates@main/Deploy-on-Sealos.svg" alt="Deploy on Sealos"/></a>

|

<a href="https://template.cloud.sealos.io/deploy?templateName=fastgpt&uid=fnWRt09fZP" rel="external" target="_blank"><img src="https://cdn.jsdelivr.net/gh/labring-actions/templates@main/Deploy-on-Sealos.svg" alt="Deploy on Sealos"/></a>

|

||||||

|

|

||||||

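### Beijing region

|

### Beijing region

|

||||||

|

|

||||||

The Beijing region is hosted on Volcano Engine. Users in China get stable access, but it cannot reach overseas services such as OpenAI. It costs about 1/4 of the Singapore region. Click the button below to deploy 👇

|

The Beijing region is hosted on Volcano Engine. Users in China get stable access, but it cannot reach overseas services such as OpenAI. It costs about 1/4 of the Singapore region. Click the button below to deploy 👇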

|

||||||

|

|

||||||

<a href="https://bja.sealos.run/?openapp=system-template%3FtemplateName%3Dfastgpt" rel="external" target="_blank"><img src="https://raw.githubusercontent.com/labring-actions/templates/main/Deploy-on-Sealos.svg" alt="Deploy on Sealos"/></a>

|

<a href="https://bja.sealos.run/?openapp=system-template%3FtemplateName%3Dfastgpt&uid=fnWRt09fZP" rel="external" target="_blank"><img src="https://raw.githubusercontent.com/labring-actions/templates/main/Deploy-on-Sealos.svg" alt="Deploy on Sealos"/></a>

|

||||||

|

|

||||||

### 1. Start the deployment

|

### 1. Start the deployment

|

||||||

|

|

||||||

|

|||||||

@@ -13,7 +13,7 @@ FastGPT V4.5 引入 PgVector0.5 版本的 HNSW 索引,极大的提高了知识

|

|||||||

|

|

||||||

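## PgVector upgrade: Sealos deployment

|

## PgVector upgrade: Sealos deployment

|

||||||

|

|

||||||

1. Open the Database app on the [Sealos desktop](https://cloud.sealos.io).

|

1. Open the Database app on the [Sealos desktop](https://cloud.sealos.io?uid=fnWRt09fZP).

|

||||||

2. Open the details of the "pg" database.

|

2. Open the details of the "pg" database.

|

||||||

3. Click Restart in the upper-right corner and wait for the restart to finish.

|

3. Click Restart in the upper-right corner and wait for the restart to finish.

|

||||||

4. Click the one-click connect button on the left and wait for the Terminal to open.

|

4. Click the one-click connect button on the left and wait for the Terminal to open.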

|

||||||

|

|||||||

@@ -35,7 +35,7 @@ curl --location --request POST 'https://{{host}}/api/admin/initv4820' \

|

|||||||

|

|

||||||

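## Full changelog

|

## Full changelog

|

||||||

|

|

||||||

1. Added: visual model parameter configuration, replacing model configuration through the config file. More than 100 preset model configurations are included, and one-click testing is supported for all model types. (Channel configuration in the UI is expected to be fully supported in the next release.)

|

1. Added: visual model parameter configuration, replacing model configuration through the config file. More than 100 preset model configurations are included, and one-click testing is supported for all model types. (Channel configuration in the UI is expected to be fully supported in the next release.) [View the model configuration guide](/docs/development/modelconfig/intro/)

|

||||||

2. Added: the DeepSeek reasoner model can output its reasoning process.

|

2. Added: the DeepSeek reasoner model can output its reasoning process.

|

||||||

3. Added: usage record export and dashboard.

|

3. Added: usage record export and dashboard.

|

||||||

4. Added: markdown syntax extension with audio and video support (`audio` and `video` code blocks).

|

4. Added: markdown syntax extension with audio and video support (`audio` and `video` code blocks).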

|

||||||

|

|||||||

@@ -4,7 +4,7 @@ description: 'FastGPT V4.8.23 更新说明'

|

|||||||

icon: 'upgrade'

|

icon: 'upgrade'

|

||||||

draft: false

|

draft: false

|

||||||

toc: true

|

toc: true

|

||||||

weight: 802

|

weight: 801

|

||||||

---

|

---

|

||||||

|

|

||||||

## Upgrade guide

|

## Upgrade guide

|

||||||

|

|||||||

@@ -1,10 +1,10 @@

|

|||||||

---

|

---

|

||||||

title: 'V4.9.0 (in progress)'

|

title: 'V4.9.0 (includes upgrade script)'

|

||||||

description: 'FastGPT V4.9.0 release notes'

|

description: 'FastGPT V4.9.0 release notes'

|

||||||

icon: 'upgrade'

|

icon: 'upgrade'

|

||||||

draft: false

|

draft: false

|

||||||

toc: true

|

toc: true

|

||||||

weight: 801

|

weight: 800

|

||||||

---

|

---

|

||||||

|

|

||||||

|

|

||||||

@@ -12,9 +12,141 @@ weight: 801

|

|||||||

|

|

||||||

### 1. Back up the database

|

### 1. Back up the database

|

||||||

|

|

||||||

### 2. Update the images

|

### 2. Update the images and the PG container

|

||||||

|

|

||||||

### 3. Run the upgrade script

|

- Update the FastGPT image tag: v4.9.0

|

||||||

|

- Update the FastGPT commercial edition image tag: v4.9.0

|

||||||

|

- The Sandbox image does not need to be updated

|

||||||

|

- Update the PG container to v0.8.0-pg15; see the [latest yml](https://raw.githubusercontent.com/labring/FastGPT/main/deploy/docker/docker-compose-pgvector.yml)

|

||||||

|

|

||||||

|

### 3. Replace OneAPI (optional)

Follow this step if you want to replace OneAPI with [AI Proxy](https://github.com/labring/aiproxy).

#### 1. Modify the yml file

Refer to the [latest yml](https://raw.githubusercontent.com/labring/FastGPT/main/deploy/docker/docker-compose-pgvector.yml) file, in which OneAPI has been removed and the AI Proxy configuration added. It consists of one service and one PgSQL database. Append the `aiproxy` configuration after the OneAPI configuration (do not delete OneAPI yet: an initialization step automatically syncs the OneAPI configuration).

|

||||||

|

|

||||||

|

{{% details title="AI Proxy Yml 配置" closed="true" %}}

|

||||||

|

|

||||||

|

```
  # AI Proxy
  aiproxy:
    image: 'ghcr.io/labring/aiproxy:latest'
    container_name: aiproxy
    restart: unless-stopped
    depends_on:
      aiproxy_pg:
        condition: service_healthy
    networks:
      - fastgpt
    environment:
      # Matches AIPROXY_API_TOKEN in fastgpt
      - ADMIN_KEY=aiproxy
      # Retention time for error log details (hours)
      - LOG_DETAIL_STORAGE_HOURS=1
      # Database connection string
      - SQL_DSN=postgres://postgres:aiproxy@aiproxy_pg:5432/aiproxy
      # Maximum number of retries
      - RETRY_TIMES=3
      # Billing is not needed
      - BILLING_ENABLED=false
      # Strict model checking is not needed
      - DISABLE_MODEL_CONFIG=true
    healthcheck:
      test: ['CMD', 'curl', '-f', 'http://localhost:3000/api/status']
      interval: 5s
      timeout: 5s
      retries: 10
  aiproxy_pg:
    image: pgvector/pgvector:0.8.0-pg15 # docker hub
    # image: registry.cn-hangzhou.aliyuncs.com/fastgpt/pgvector:v0.8.0-pg15 # Alibaba Cloud
    restart: unless-stopped
    container_name: aiproxy_pg
    volumes:
      - ./aiproxy_pg:/var/lib/postgresql/data
    networks:
      - fastgpt
    environment:
      TZ: Asia/Shanghai
      POSTGRES_USER: postgres
      POSTGRES_DB: aiproxy
      POSTGRES_PASSWORD: aiproxy
    healthcheck:
      test: ['CMD', 'pg_isready', '-U', 'postgres', '-d', 'aiproxy']
      interval: 5s
      timeout: 5s
      retries: 10
```

|

||||||

|

|

||||||

|

{{% /details %}}

|

||||||

|

|

||||||

|

#### 2. Add FastGPT environment variables

In the yml file, modify the environment variables of the fastgpt container:

|

||||||

|

|

||||||

|

```
# AI Proxy address; if set, it takes priority
- AIPROXY_API_ENDPOINT=http://aiproxy:3000
# AI Proxy admin token; must match the ADMIN_KEY environment variable of AI Proxy
- AIPROXY_API_TOKEN=aiproxy
```
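Because the token on the FastGPT side must equal `ADMIN_KEY` on the AI Proxy side, a quick consistency check can catch a mismatch before restarting. The sketch below works on the `KEY=value` entries copied from the two `environment:` lists; `parse_env` and `check_aiproxy_env` are hypothetical helpers, not part of FastGPT or AI Proxy.

```python
def parse_env(entries: list[str]) -> dict[str, str]:
    """Parse compose-style `KEY=value` list entries into a dict."""
    env = {}
    for entry in entries:
        key, _, value = entry.partition("=")
        env[key.strip()] = value.strip()
    return env


def check_aiproxy_env(fastgpt_env: list[str], aiproxy_env: list[str]) -> list[str]:
    """Return a list of problems; an empty list means the two sides agree."""
    fastgpt, aiproxy = parse_env(fastgpt_env), parse_env(aiproxy_env)
    problems = []
    if not fastgpt.get("AIPROXY_API_ENDPOINT"):
        problems.append("AIPROXY_API_ENDPOINT is not set on the fastgpt service")
    if fastgpt.get("AIPROXY_API_TOKEN") != aiproxy.get("ADMIN_KEY"):
        problems.append("AIPROXY_API_TOKEN does not match ADMIN_KEY")
    return problems


if __name__ == "__main__":
    print(check_aiproxy_env(
        ["AIPROXY_API_ENDPOINT=http://aiproxy:3000", "AIPROXY_API_TOKEN=aiproxy"],
        ["ADMIN_KEY=aiproxy"],
    ))
```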

|

||||||

|

|

||||||

|

#### 3. Reload the services

Run `docker-compose down` to stop the services, then `docker-compose up -d` to start them. This adds the `aiproxy` service and applies the updated FastGPT configuration.

#### 4. Run the OneAPI to AI Proxy migration script

- If the container has internet access:

|

||||||

|

|

||||||

|

```bash
# Enter the aiproxy container
docker exec -it aiproxy sh
# Install curl
apk add curl
# Run the migration
curl --location --request POST 'http://localhost:3000/api/channels/import/oneapi' \
--header 'Authorization: Bearer aiproxy' \
--header 'Content-Type: application/json' \
--data-raw '{
    "dsn": "mysql://root:oneapimmysql@tcp(mysql:3306)/oneapi"
}'
# A response of {"data":[],"success":true} indicates success
```

|

||||||

|

|

||||||

|

- Without internet access, expose the `aiproxy` port externally, then run the script from your local machine.

Expose the AI Proxy port as 3003:3000, then run `docker-compose up -d` again to restart the services.

|

||||||

|

|

||||||

|

```bash
# Run the migration from a terminal
curl --location --request POST 'http://localhost:3003/api/channels/import/oneapi' \
--header 'Authorization: Bearer aiproxy' \
--header 'Content-Type: application/json' \
--data-raw '{
    "dsn": "mysql://root:oneapimmysql@tcp(mysql:3306)/oneapi"
}'
# A response of {"data":[],"success":true} indicates success
```

|

||||||

|

|

||||||

|

- If you are not familiar with Docker, skip the script migration: delete everything related to OneAPI and add the channels again manually.
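The migration call can also be scripted instead of typed as a curl command. The sketch below builds the same POST request and interprets the success response; the helper names `build_import_request` and `import_succeeded` are hypothetical, while the path, header, and `dsn` field are taken from the curl commands in this section.

```python
import json
import urllib.request


def build_import_request(aiproxy_url: str, admin_key: str, oneapi_dsn: str) -> urllib.request.Request:
    """Build (but do not send) the OneAPI import request for AI Proxy."""
    return urllib.request.Request(
        aiproxy_url.rstrip("/") + "/api/channels/import/oneapi",
        data=json.dumps({"dsn": oneapi_dsn}).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {admin_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def import_succeeded(response_body: str) -> bool:
    """True when the response body reports success, e.g. {"data":[],"success":true}."""
    return json.loads(response_body).get("success") is True


if __name__ == "__main__":
    req = build_import_request(
        "http://localhost:3003",
        "aiproxy",
        "mysql://root:oneapimmysql@tcp(mysql:3306)/oneapi",
    )
    print(req.get_method(), req.full_url)
    # To run the migration: urllib.request.urlopen(req).read().decode()
```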

|

||||||

|

|

||||||

|

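#### 5. Check in FastGPT that the `AI Proxy` service started correctly

After logging in as root, the `Account - Model Providers` page shows two new options, `Model Channels` and `Call Logs`. Open Model Channels; if the channels from OneAPI are listed, the migration is complete. You can then manually verify that each channel works.

#### 6. Remove the OneAPI service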

|

||||||

|

|

||||||

|

```bash

|

||||||

|

# Stop the services, or stop only OneAPI and its MySQL
docker-compose down
# Remove OneAPI and its MySQL dependency from the yml file
# Restart the services

|

||||||