Compare commits

103 Commits

v4.9.1

...

v4.9.5-alp

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

16a22bc76a | ||

|

|

b51a87f5b7 | ||

|

|

bc1ca66b66 | ||

|

|

c9e12bb608 | ||

|

|

4e7fa29087 | ||

|

|

ec3bcfa124 | ||

|

|

199f454b6b | ||

|

|

80f41dd2a9 | ||

|

|

4343eecaaf | ||

|

|

c02864facc | ||

|

|

e4629a5c8c | ||

|

|

2dc3cb75fe | ||

|

|

431390fe42 | ||

|

|

1f5709eda6 | ||

|

|

86988e31d9 | ||

|

|

675e8ccedb | ||

|

|

9dfafb13bf | ||

|

|

f642c9603b | ||

|

|

5839325f77 | ||

|

|

73c997f7c5 | ||

|

|

ff92dced98 | ||

|

|

7a0747947c | ||

|

|

5ad383bc6e | ||

|

|

c85b719384 | ||

|

|

aeedc2fada | ||

|

|

be34b69f9b | ||

|

|

944774ec5f | ||

|

|

5b21b4b674 | ||

|

|

b0f0afabd2 | ||

|

|

d9aea53d13 | ||

|

|

73db92e4ad | ||

|

|

267cc5702c | ||

|

|

540f321fc9 | ||

|

|

a37c75159f | ||

|

|

0ed99d8c9a | ||

|

|

2d3ae7f944 | ||

|

|

565a966d19 | ||

|

|

8323c2d27e | ||

|

|

4f86a0591c | ||

|

|

14895bbcfd | ||

|

|

ccf9f5be2e | ||

|

|

e5b986b4de | ||

|

|

cf119a9f0f | ||

|

|

05b3062204 | ||

|

|

fed04f0b5d | ||

|

|

96aabdf579 | ||

|

|

e9f75c7e66 | ||

|

|

8b29aae238 | ||

|

|

8999dc5b8c | ||

|

|

17b20270e1 | ||

|

|

9d97b60561 | ||

|

|

f483832749 | ||

|

|

0778508908 | ||

|

|

f3a069bc80 | ||

|

|

2ebb2ccc9c | ||

|

|

484b87478c | ||

|

|

a17623d4ea | ||

|

|

dd2f7bdcfd | ||

|

|

4871a6980f | ||

|

|

64fb09146f | ||

|

|

1fdf947a13 | ||

|

|

ff64a3c039 | ||

|

|

37b4a1919b | ||

|

|

826a53dcb6 | ||

|

|

5a47af6fff | ||

|

|

6ea57e4609 | ||

|

|

2fcf421672 | ||

|

|

a680b565ea | ||

|

|

e812ad6e84 | ||

|

|

222ff0d49a | ||

|

|

2c73e9dc12 | ||

|

|

9918133426 | ||

|

|

8eec8566db | ||

|

|

6a4eada85b | ||

|

|

652ec45bbd | ||

|

|

6fee39873d | ||

|

|

87e90c37bd | ||

|

|

73451dbc64 | ||

|

|

077350e651 | ||

|

|

d52700c645 | ||

|

|

ec30d79286 | ||

|

|

cb29076e5b | ||

|

|

29a10c1389 | ||

|

|

28877373ac | ||

|

|

4538f2a9d4 | ||

|

|

fc23db745c | ||

|

|

8a68de6471 | ||

|

|

1c4e0c66d5 | ||

|

|

6dcdd540b9 | ||

|

|

48233c7d55 | ||

|

|

f3ef56998d | ||

|

|

7e7269b2ba | ||

|

|

606e9505c0 | ||

|

|

1db39e8907 | ||

|

|

7f13eb4642 | ||

|

|

9a1fff74fd | ||

|

|

de87639fce | ||

|

|

f9cecfd49a | ||

|

|

70563d2bcb | ||

|

|

4ca99a6361 | ||

|

|

8f70e436cf | ||

|

|

e75d81d05a | ||

|

|

56793114d8 |

@@ -1,4 +1,4 @@

|

||||

yangchuansheng/fastgpt-imgs:

|

||||

- source: docSite/assets/imgs/

|

||||

dest: imgs/

|

||||

deleteOrphaned: true

|

||||

deleteOrphaned: true

|

||||

30

.github/gh-bot.yml

vendored

@@ -1,30 +0,0 @@

|

||||

version: v1

|

||||

debug: true

|

||||

action:

|

||||

printConfig: false

|

||||

release:

|

||||

retry: 15s

|

||||

actionName: Release

|

||||

allowOps:

|

||||

- cuisongliu

|

||||

bot:

|

||||

prefix: /

|

||||

spe: _

|

||||

allowOps:

|

||||

- sealos-ci-robot

|

||||

- sealos-release-robot

|

||||

email: sealos-ci-robot@sealos.io

|

||||

username: sealos-ci-robot

|

||||

repo:

|

||||

org: false

|

||||

|

||||

message:

|

||||

success: |

|

||||

🤖 says: Hooray! The action {{.Body}} has been completed successfully. 🎉

|

||||

format_error: |

|

||||

🤖 says: ‼️ There is a formatting issue with the action, kindly verify the action's format.

|

||||

permission_error: |

|

||||

🤖 says: ‼️ The action doesn't have permission to trigger.

|

||||

release_error: |

|

||||

🤖 says: ‼️ Release action failed.

|

||||

Error details: {{.Error}}

|

||||

@@ -1,4 +1,4 @@

|

||||

name: Deploy doc image to vercel

|

||||

name: Deploy doc image to cf

|

||||

|

||||

on:

|

||||

workflow_dispatch:

|

||||

@@ -20,6 +20,11 @@ jobs:

|

||||

# The type of runner that the job will run on

|

||||

runs-on: ubuntu-22.04

|

||||

|

||||

permissions:

|

||||

contents: write

|

||||

concurrency:

|

||||

group: ${{ github.workflow }}-${{ github.ref }}

|

||||

|

||||

# Job outputs

|

||||

outputs:

|

||||

docs: ${{ steps.filter.outputs.docs }}

|

||||

@@ -58,20 +63,9 @@ jobs:

|

||||

- name: Build

|

||||

run: cd docSite && hugo mod get -u github.com/colinwilson/lotusdocs@6d0568e && hugo -v --minify

|

||||

|

||||

# Step 5 - Push our generated site to Vercel

|

||||

- name: Deploy to Vercel

|

||||

uses: amondnet/vercel-action@v25

|

||||

id: vercel-action

|

||||

with:

|

||||

vercel-token: ${{ secrets.VERCEL_TOKEN }} # Required

|

||||

vercel-org-id: ${{ secrets.VERCEL_ORG_ID }} #Required

|

||||

vercel-project-id: ${{ secrets.VERCEL_PROJECT_ID }} #Required

|

||||

github-comment: false

|

||||

vercel-args: '--prod --local-config ../vercel.json' # Optional

|

||||

working-directory: docSite/public

|

||||

|

||||

- name: Deploy to GitHub Pages

|

||||

uses: peaceiris/actions-gh-pages@v3

|

||||

uses: peaceiris/actions-gh-pages@v4

|

||||

if: github.ref == 'refs/heads/main'

|

||||

with:

|

||||

github_token: ${{ secrets.GH_PAT }}

|

||||

github_token: ${{ secrets.GITHUB_TOKEN }}

|

||||

publish_dir: docSite/public

|

||||

19

.github/workflows/docs-deploy-kubeconfig.yml

vendored

@@ -10,6 +10,13 @@ on:

|

||||

jobs:

|

||||

build-fastgpt-docs-images:

|

||||

runs-on: ubuntu-latest

|

||||

|

||||

permissions:

|

||||

contents: read

|

||||

packages: write

|

||||

attestations: write

|

||||

id-token: write

|

||||

|

||||

steps:

|

||||

- name: Checkout

|

||||

uses: actions/checkout@v4

|

||||

@@ -27,7 +34,6 @@ jobs:

|

||||

with:

|

||||

# list of Docker images to use as base name for tags

|

||||

images: |

|

||||

${{ secrets.DOCKER_HUB_NAME }}/fastgpt-docs

|

||||

ghcr.io/${{ github.repository_owner }}/fastgpt-docs

|

||||

registry.cn-hangzhou.aliyuncs.com/${{ secrets.ALI_HUB_USERNAME }}/fastgpt-docs

|

||||

tags: |

|

||||

@@ -40,18 +46,12 @@ jobs:

|

||||

- name: Set up Docker Buildx

|

||||

uses: docker/setup-buildx-action@v3

|

||||

|

||||

- name: Login to DockerHub

|

||||

uses: docker/login-action@v3

|

||||

with:

|

||||

username: ${{ secrets.DOCKER_HUB_NAME }}

|

||||

password: ${{ secrets.DOCKER_HUB_PASSWORD }}

|

||||

|

||||

- name: Login to ghcr.io

|

||||

uses: docker/login-action@v3

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: ${{ github.repository_owner }}

|

||||

password: ${{ secrets.GH_PAT }}

|

||||

username: ${{ github.actor }}

|

||||

password: ${{ secrets.GITHUB_TOKEN }}

|

||||

|

||||

- name: Login to Aliyun

|

||||

uses: docker/login-action@v3

|

||||

@@ -70,6 +70,7 @@ jobs:

|

||||

labels: ${{ steps.meta.outputs.labels }}

|

||||

outputs:

|

||||

tags: ${{ steps.datetime.outputs.datetime }}

|

||||

|

||||

update-docs-image:

|

||||

needs: build-fastgpt-docs-images

|

||||

runs-on: ubuntu-20.04

|

||||

|

||||

75

.github/workflows/docs-preview.yml

vendored

@@ -10,6 +10,12 @@ on:

|

||||

jobs:

|

||||

# This workflow contains jobs "deploy-production"

|

||||

deploy-preview:

|

||||

permissions:

|

||||

contents: read

|

||||

packages: write

|

||||

attestations: write

|

||||

id-token: write

|

||||

pull-requests: write

|

||||

# The environment this job references

|

||||

environment:

|

||||

name: Preview

|

||||

@@ -32,6 +38,7 @@ jobs:

|

||||

repository: ${{ github.event.pull_request.head.repo.full_name }}

|

||||

submodules: recursive # Fetch submodules

|

||||

fetch-depth: 0 # Fetch all history for .GitInfo and .Lastmod

|

||||

token: ${{ secrets.GITHUB_TOKEN }}

|

||||

|

||||

# Step 2 Detect changes to Docs Content

|

||||

- name: Detect changes in doc content

|

||||

@@ -43,10 +50,6 @@ jobs:

|

||||

- 'docSite/content/docs/**'

|

||||

base: main

|

||||

|

||||

- name: Add cdn for images

|

||||

run: |

|

||||

sed -i "s#\](/imgs/#\](https://cdn.jsdelivr.net/gh/yangchuansheng/fastgpt-imgs@main/imgs/#g" $(grep -rl "\](/imgs/" docSite/content/zh-cn/docs)

|

||||

|

||||

# Step 3 - Install Hugo (specific version)

|

||||

- name: Install Hugo

|

||||

uses: peaceiris/actions-hugo@v2

|

||||

@@ -58,39 +61,35 @@ jobs:

|

||||

- name: Build

|

||||

run: cd docSite && hugo mod get -u github.com/colinwilson/lotusdocs@6d0568e && hugo -v --minify

|

||||

|

||||

# Step 5 - Push our generated site to Vercel

|

||||

- name: Deploy to Vercel

|

||||

uses: amondnet/vercel-action@v25

|

||||

id: vercel-action

|

||||

# Step 5 - Push our generated site to Cloudflare

|

||||

- name: Deploy to Cloudflare Pages

|

||||

id: deploy

|

||||

uses: cloudflare/wrangler-action@v3

|

||||

with:

|

||||

vercel-token: ${{ secrets.VERCEL_TOKEN }} # Required

|

||||

vercel-org-id: ${{ secrets.VERCEL_ORG_ID }} #Required

|

||||

vercel-project-id: ${{ secrets.VERCEL_PROJECT_ID }} #Required

|

||||

github-comment: false

|

||||

vercel-args: '--local-config ../vercel.json' # Optional

|

||||

working-directory: docSite/public

|

||||

alias-domains: | #Optional

|

||||

fastgpt-staging.vercel.app

|

||||

docsOutput:

|

||||

needs: [deploy-preview]

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v3

|

||||

with:

|

||||

ref: ${{ github.event.pull_request.head.ref }}

|

||||

repository: ${{ github.event.pull_request.head.repo.full_name }}

|

||||

- name: Write md

|

||||

run: |

|

||||

echo "# 🤖 Generated by deploy action" > report.md

|

||||

echo "[👀 Visit Preview](${{ needs.deploy-preview.outputs.url }})" >> report.md

|

||||

cat report.md

|

||||

- name: Gh Rebot for Sealos

|

||||

uses: labring/gh-rebot@v0.0.6

|

||||

if: ${{ (github.event_name == 'pull_request_target') }}

|

||||

with:

|

||||

version: v0.0.6

|

||||

apiToken: ${{ secrets.CLOUDFLARE_API_TOKEN }}

|

||||

accountId: ${{ secrets.CLOUDFLARE_ACCOUNT_ID }}

|

||||

command: pages deploy ./docSite/public --project-name=fastgpt-doc

|

||||

packageManager: npm

|

||||

|

||||

- name: Create deployment status comment

|

||||

if: always()

|

||||

env:

|

||||

GH_TOKEN: '${{ secrets.GH_PAT }}'

|

||||

SEALOS_TYPE: 'pr_comment'

|

||||

SEALOS_FILENAME: 'report.md'

|

||||

SEALOS_REPLACE_TAG: 'DEFAULT_REPLACE_DEPLOY'

|

||||

JOB_STATUS: ${{ job.status }}

|

||||

PREVIEW_URL: ${{ steps.deploy.outputs.deployment-url }}

|

||||

uses: actions/github-script@v6

|

||||

with:

|

||||

token: ${{ secrets.GITHUB_TOKEN }}

|

||||

script: |

|

||||

const success = process.env.JOB_STATUS === 'success';

|

||||

const deploymentUrl = `${process.env.PREVIEW_URL}`;

|

||||

const status = success ? '✅ Success' : '❌ Failed';

|

||||

console.log(process.env.JOB_STATUS);

|

||||

|

||||

const commentBody = `**Deployment Status: ${status}**

|

||||

${success ? `🔗 Preview URL: ${deploymentUrl}` : ''}`;

|

||||

|

||||

await github.rest.issues.createComment({

|

||||

...context.repo,

|

||||

issue_number: context.payload.pull_request.number,

|

||||

body: commentBody

|

||||

});

|

||||

|

||||

11

.github/workflows/docs-sync_imgs.yml

vendored

@@ -1,6 +1,6 @@

|

||||

name: Sync images

|

||||

on:

|

||||

pull_request_target:

|

||||

pull_request:

|

||||

branches:

|

||||

- main

|

||||

paths:

|

||||

@@ -15,13 +15,6 @@ jobs:

|

||||

sync:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Checkout

|

||||

uses: actions/checkout@v3

|

||||

if: ${{ (github.event_name == 'pull_request_target') }}

|

||||

with:

|

||||

ref: ${{ github.event.pull_request.head.ref }}

|

||||

repository: ${{ github.event.pull_request.head.repo.full_name }}

|

||||

|

||||

- name: Checkout

|

||||

uses: actions/checkout@v3

|

||||

|

||||

@@ -32,4 +25,4 @@ jobs:

|

||||

CONFIG_PATH: .github/sync_imgs.yml

|

||||

ORIGINAL_MESSAGE: true

|

||||

SKIP_PR: true

|

||||

COMMIT_EACH_FILE: false

|

||||

COMMIT_EACH_FILE: false

|

||||

|

||||

@@ -9,6 +9,11 @@ on:

|

||||

- 'main'

|

||||

jobs:

|

||||

build-fastgpt-images:

|

||||

permissions:

|

||||

packages: write

|

||||

contents: read

|

||||

attestations: write

|

||||

id-token: write

|

||||

runs-on: ubuntu-20.04

|

||||

if: github.repository != 'labring/FastGPT'

|

||||

steps:

|

||||

@@ -32,7 +37,7 @@ jobs:

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: ${{ github.repository_owner }}

|

||||

password: ${{ secrets.GH_PAT }}

|

||||

password: ${{ secrets.GITHUB_TOKEN }}

|

||||

- name: Set DOCKER_REPO_TAGGED based on branch or tag

|

||||

run: |

|

||||

echo "DOCKER_REPO_TAGGED=ghcr.io/${{ github.repository_owner }}/fastgpt:latest" >> $GITHUB_ENV

|

||||

|

||||

21

.github/workflows/fastgpt-build-image.yml

vendored

@@ -9,6 +9,11 @@ on:

|

||||

- 'v*'

|

||||

jobs:

|

||||

build-fastgpt-images:

|

||||

permissions:

|

||||

packages: write

|

||||

contents: read

|

||||

attestations: write

|

||||

id-token: write

|

||||

runs-on: ubuntu-20.04

|

||||

steps:

|

||||

# install env

|

||||

@@ -39,7 +44,7 @@ jobs:

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: ${{ github.repository_owner }}

|

||||

password: ${{ secrets.GH_PAT }}

|

||||

password: ${{ secrets.GITHUB_TOKEN }}

|

||||

- name: Login to Ali Hub

|

||||

uses: docker/login-action@v2

|

||||

with:

|

||||

@@ -91,6 +96,11 @@ jobs:

|

||||

-t ${Docker_Hub_Latest} \

|

||||

.

|

||||

build-fastgpt-images-sub-route:

|

||||

permissions:

|

||||

packages: write

|

||||

contents: read

|

||||

attestations: write

|

||||

id-token: write

|

||||

runs-on: ubuntu-20.04

|

||||

steps:

|

||||

# install env

|

||||

@@ -121,7 +131,7 @@ jobs:

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: ${{ github.repository_owner }}

|

||||

password: ${{ secrets.GH_PAT }}

|

||||

password: ${{ secrets.GITHUB_TOKEN }}

|

||||

- name: Login to Ali Hub

|

||||

uses: docker/login-action@v2

|

||||

with:

|

||||

@@ -174,6 +184,11 @@ jobs:

|

||||

-t ${Docker_Hub_Latest} \

|

||||

.

|

||||

build-fastgpt-images-sub-route-gchat:

|

||||

permissions:

|

||||

packages: write

|

||||

contents: read

|

||||

attestations: write

|

||||

id-token: write

|

||||

runs-on: ubuntu-20.04

|

||||

steps:

|

||||

# install env

|

||||

@@ -204,7 +219,7 @@ jobs:

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: ${{ github.repository_owner }}

|

||||

password: ${{ secrets.GH_PAT }}

|

||||

password: ${{ secrets.GITHUB_TOKEN }}

|

||||

- name: Login to Ali Hub

|

||||

uses: docker/login-action@v2

|

||||

with:

|

||||

|

||||

40

.github/workflows/fastgpt-preview-image.yml

vendored

@@ -5,6 +5,13 @@ on:

|

||||

|

||||

jobs:

|

||||

preview-fastgpt-images:

|

||||

permissions:

|

||||

contents: read

|

||||

packages: write

|

||||

attestations: write

|

||||

id-token: write

|

||||

pull-requests: write

|

||||

|

||||

runs-on: ubuntu-20.04

|

||||

steps:

|

||||

- name: Checkout

|

||||

@@ -12,8 +19,9 @@ jobs:

|

||||

with:

|

||||

ref: ${{ github.event.pull_request.head.ref }}

|

||||

repository: ${{ github.event.pull_request.head.repo.full_name }}

|

||||

submodules: recursive # Fetch submodules

|

||||

fetch-depth: 0 # Fetch all history for .GitInfo and .Lastmod

|

||||

token: ${{ secrets.GITHUB_TOKEN }}

|

||||

|

||||

- name: Set up Docker Buildx

|

||||

uses: docker/setup-buildx-action@v2

|

||||

with:

|

||||

@@ -25,15 +33,18 @@ jobs:

|

||||

key: ${{ runner.os }}-buildx-${{ github.sha }}

|

||||

restore-keys: |

|

||||

${{ runner.os }}-buildx-

|

||||

|

||||

- name: Login to GitHub Container Registry

|

||||

uses: docker/login-action@v2

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: ${{ github.repository_owner }}

|

||||

password: ${{ secrets.GH_PAT }}

|

||||

password: ${{ secrets.GITHUB_TOKEN }}

|

||||

|

||||

- name: Set DOCKER_REPO_TAGGED based on branch or tag

|

||||

run: |

|

||||

echo "DOCKER_REPO_TAGGED=ghcr.io/${{ github.repository_owner }}/fastgpt-pr:${{ github.event.pull_request.head.sha }}" >> $GITHUB_ENV

|

||||

|

||||

- name: Build image for PR

|

||||

env:

|

||||

DOCKER_REPO_TAGGED: ${{ env.DOCKER_REPO_TAGGED }}

|

||||

@@ -48,20 +59,13 @@ jobs:

|

||||

--cache-to=type=local,dest=/tmp/.buildx-cache \

|

||||

-t ${DOCKER_REPO_TAGGED} \

|

||||

.

|

||||

# Add write md step after build

|

||||

- name: Write md

|

||||

run: |

|

||||

echo "# 🤖 Generated by deploy action" > report.md

|

||||

echo "📦 Preview Image: \`${DOCKER_REPO_TAGGED}\`" >> report.md

|

||||

cat report.md

|

||||

|

||||

- name: Gh Rebot for Sealos

|

||||

uses: labring/gh-rebot@v0.0.6

|

||||

if: ${{ (github.event_name == 'pull_request_target') }}

|

||||

- uses: actions/github-script@v7

|

||||

with:

|

||||

version: v0.0.6

|

||||

env:

|

||||

GH_TOKEN: '${{ secrets.GH_PAT }}'

|

||||

SEALOS_TYPE: 'pr_comment'

|

||||

SEALOS_FILENAME: 'report.md'

|

||||

SEALOS_REPLACE_TAG: 'DEFAULT_REPLACE_DEPLOY'

|

||||

github-token: ${{secrets.GITHUB_TOKEN}}

|

||||

script: |

|

||||

github.rest.issues.createComment({

|

||||

issue_number: context.issue.number,

|

||||

owner: context.repo.owner,

|

||||

repo: context.repo.repo,

|

||||

body: 'Preview Image: `${{ env.DOCKER_REPO_TAGGED }}`'

|

||||

})

|

||||

|

||||

3

.github/workflows/fastgpt-test.yaml

vendored

@@ -15,6 +15,9 @@ jobs:

|

||||

|

||||

steps:

|

||||

- uses: actions/checkout@v4

|

||||

with:

|

||||

ref: ${{ github.event.pull_request.head.ref }}

|

||||

repository: ${{ github.event.pull_request.head.repo.full_name }}

|

||||

- uses: pnpm/action-setup@v4

|

||||

with:

|

||||

version: 10

|

||||

|

||||

7

.github/workflows/helm-release.yaml

vendored

@@ -8,6 +8,11 @@ on:

|

||||

|

||||

jobs:

|

||||

helm:

|

||||

permissions:

|

||||

packages: write

|

||||

contents: read

|

||||

attestations: write

|

||||

id-token: write

|

||||

runs-on: ubuntu-20.04

|

||||

steps:

|

||||

- name: Checkout

|

||||

@@ -20,7 +25,7 @@ jobs:

|

||||

run: echo "tag=$(git describe --tags)" >> $GITHUB_OUTPUT

|

||||

- name: Release Helm

|

||||

run: |

|

||||

echo ${{ secrets.GH_PAT }} | helm registry login ghcr.io -u ${{ github.repository_owner }} --password-stdin

|

||||

echo ${{ secrets.GITHUB_TOKEN }} | helm registry login ghcr.io -u ${{ github.repository_owner }} --password-stdin

|

||||

export APP_VERSION=${{ steps.vars.outputs.tag }}

|

||||

export HELM_VERSION=${{ steps.vars.outputs.tag }}

|

||||

export HELM_REPO=ghcr.io/${{ github.repository_owner }}

|

||||

|

||||

7

.github/workflows/sandbox-build-image.yml

vendored

@@ -8,6 +8,11 @@ on:

|

||||

- 'v*'

|

||||

jobs:

|

||||

build-fastgpt-sandbox-images:

|

||||

permissions:

|

||||

packages: write

|

||||

contents: read

|

||||

attestations: write

|

||||

id-token: write

|

||||

runs-on: ubuntu-20.04

|

||||

steps:

|

||||

# install env

|

||||

@@ -38,7 +43,7 @@ jobs:

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: ${{ github.repository_owner }}

|

||||

password: ${{ secrets.GH_PAT }}

|

||||

password: ${{ secrets.GITHUB_TOKEN }}

|

||||

- name: Login to Ali Hub

|

||||

uses: docker/login-action@v2

|

||||

with:

|

||||

|

||||

7

.vscode/i18n-ally-custom-framework.yml

vendored

@@ -17,15 +17,8 @@ usageMatchRegex:

|

||||

# you can ignore it and use your own matching rules as well

|

||||

- "[^\\w\\d]t\\(['\"`]({key})['\"`]"

|

||||

- "[^\\w\\d]commonT\\(['\"`]({key})['\"`]"

|

||||

# 支持 appT("your.i18n.keys")

|

||||

- "[^\\w\\d]appT\\(['\"`]({key})['\"`]"

|

||||

# 支持 datasetT("your.i18n.keys")

|

||||

- "[^\\w\\d]datasetT\\(['\"`]({key})['\"`]"

|

||||

- "[^\\w\\d]fileT\\(['\"`]({key})['\"`]"

|

||||

- "[^\\w\\d]publishT\\(['\"`]({key})['\"`]"

|

||||

- "[^\\w\\d]workflowT\\(['\"`]({key})['\"`]"

|

||||

- "[^\\w\\d]userT\\(['\"`]({key})['\"`]"

|

||||

- "[^\\w\\d]chatT\\(['\"`]({key})['\"`]"

|

||||

- "[^\\w\\d]i18nT\\(['\"`]({key})['\"`]"

|

||||

|

||||

# A RegEx to set a custom scope range. This scope will be used as a prefix when detecting keys

|

||||

|

||||

39

.vscode/launch.json

vendored

Normal file

@@ -0,0 +1,39 @@

|

||||

{

|

||||

"version": "0.2.0",

|

||||

"configurations": [

|

||||

{

|

||||

"name": "Next.js: debug server-side",

|

||||

"type": "node-terminal",

|

||||

"request": "launch",

|

||||

"command": "pnpm run dev",

|

||||

"cwd": "${workspaceFolder}/projects/app"

|

||||

},

|

||||

{

|

||||

"name": "Next.js: debug client-side",

|

||||

"type": "chrome",

|

||||

"request": "launch",

|

||||

"url": "http://localhost:3000"

|

||||

},

|

||||

{

|

||||

"name": "Next.js: debug client-side (Edge)",

|

||||

"type": "msedge",

|

||||

"request": "launch",

|

||||

"url": "http://localhost:3000"

|

||||

},

|

||||

{

|

||||

"name": "Next.js: debug full stack",

|

||||

"type": "node-terminal",

|

||||

"request": "launch",

|

||||

"command": "pnpm run dev",

|

||||

"cwd": "${workspaceFolder}/projects/app",

|

||||

"skipFiles": ["<node_internals>/**"],

|

||||

"serverReadyAction": {

|

||||

"action": "debugWithEdge",

|

||||

"killOnServerStop": true,

|

||||

"pattern": "- Local:.+(https?://.+)",

|

||||

"uriFormat": "%s",

|

||||

"webRoot": "${workspaceFolder}/projects/app"

|

||||

}

|

||||

}

|

||||

]

|

||||

}

|

||||

@@ -10,7 +10,7 @@

|

||||

<a href="./README_ja.md">日语</a>

|

||||

</p>

|

||||

|

||||

FastGPT 是一个基于 LLM 大语言模型的知识库问答系统,提供开箱即用的数据处理、模型调用等能力。同时可以通过 Flow 可视化进行工作流编排,从而实现复杂的问答场景!

|

||||

FastGPT 是一个 AI Agent 构建平台,提供开箱即用的数据处理、模型调用等能力,同时可以通过 Flow 可视化进行工作流编排,从而实现复杂的应用场景!

|

||||

|

||||

</div>

|

||||

|

||||

@@ -129,7 +129,7 @@ https://github.com/labring/FastGPT/assets/15308462/7d3a38df-eb0e-4388-9250-2409b

|

||||

</a>

|

||||

|

||||

## 🌿 第三方生态

|

||||

|

||||

- [PPIO 派欧云:一键调用高性价比的开源模型 API 和 GPU 容器](https://ppinfra.com/user/register?invited_by=VITYVU&utm_source=github_fastgpt)

|

||||

- [AI Proxy:国内模型聚合服务](https://sealos.run/aiproxy/?k=fastgpt-github/)

|

||||

- [SiliconCloud (硅基流动) —— 开源模型在线体验平台](https://cloud.siliconflow.cn/i/TR9Ym0c4)

|

||||

- [COW 个人微信/企微机器人](https://doc.tryfastgpt.ai/docs/use-cases/external-integration/onwechat/)

|

||||

|

||||

@@ -110,19 +110,31 @@ services:

|

||||

|

||||

# 等待docker-entrypoint.sh脚本执行的MongoDB服务进程

|

||||

wait $$!

|

||||

redis:

|

||||

image: redis:7.2-alpine

|

||||

container_name: redis

|

||||

# ports:

|

||||

# - 6379:6379

|

||||

networks:

|

||||

- fastgpt

|

||||

restart: always

|

||||

command: |

|

||||

redis-server --requirepass mypassword --loglevel warning --maxclients 10000 --appendonly yes --save 60 10 --maxmemory 4gb --maxmemory-policy noeviction

|

||||

volumes:

|

||||

- ./redis/data:/data

|

||||

|

||||

# fastgpt

|

||||

sandbox:

|

||||

container_name: sandbox

|

||||

image: ghcr.io/labring/fastgpt-sandbox:v4.9.0 # git

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt-sandbox:v4.9.0 # 阿里云

|

||||

image: ghcr.io/labring/fastgpt-sandbox:v4.9.4 # git

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt-sandbox:v4.9.4 # 阿里云

|

||||

networks:

|

||||

- fastgpt

|

||||

restart: always

|

||||

fastgpt:

|

||||

container_name: fastgpt

|

||||

image: ghcr.io/labring/fastgpt:v4.9.0 # git

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt:v4.9.0 # 阿里云

|

||||

image: ghcr.io/labring/fastgpt:v4.9.4 # git

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt:v4.9.4 # 阿里云

|

||||

ports:

|

||||

- 3000:3000

|

||||

networks:

|

||||

@@ -157,6 +169,8 @@ services:

|

||||

# zilliz 连接参数

|

||||

- MILVUS_ADDRESS=http://milvusStandalone:19530

|

||||

- MILVUS_TOKEN=none

|

||||

# Redis 地址

|

||||

- REDIS_URL=redis://default:mypassword@redis:6379

|

||||

# sandbox 地址

|

||||

- SANDBOX_URL=http://sandbox:3000

|

||||

# 日志等级: debug, info, warn, error

|

||||

@@ -170,12 +184,15 @@ services:

|

||||

- ALLOWED_ORIGINS=

|

||||

# 是否开启IP限制,默认不开启

|

||||

- USE_IP_LIMIT=false

|

||||

# 对话文件过期天数

|

||||

- CHAT_FILE_EXPIRE_TIME=7

|

||||

volumes:

|

||||

- ./config.json:/app/data/config.json

|

||||

|

||||

# AI Proxy

|

||||

aiproxy:

|

||||

image: 'ghcr.io/labring/aiproxy:latest'

|

||||

image: ghcr.io/labring/aiproxy:v0.1.3

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/labring/aiproxy:v0.1.3 # 阿里云

|

||||

container_name: aiproxy

|

||||

restart: unless-stopped

|

||||

depends_on:

|

||||

|

||||

202

deploy/docker/docker-compose-oceanbase/docker-compose.yml

Normal file

@@ -0,0 +1,202 @@

|

||||

# 数据库的默认账号和密码仅首次运行时设置有效

|

||||

# 如果修改了账号密码,记得改数据库和项目连接参数,别只改一处~

|

||||

# 该配置文件只是给快速启动,测试使用。正式使用,记得务必修改账号密码,以及调整合适的知识库参数,共享内存等。

|

||||

# 如何无法访问 dockerhub 和 git,可以用阿里云(阿里云没有arm包)

|

||||

|

||||

version: '3.3'

|

||||

services:

|

||||

# vector db

|

||||

ob:

|

||||

image: oceanbase/oceanbase-ce # docker hub

|

||||

# image: quay.io/oceanbase/oceanbase-ce:4.3.5.1-101000042025031818 # 镜像

|

||||

container_name: ob

|

||||

restart: always

|

||||

# ports: # 生产环境建议不要暴露

|

||||

# - 2881:2881

|

||||

networks:

|

||||

- fastgpt

|

||||

environment:

|

||||

# 这里的配置只有首次运行生效。修改后,重启镜像是不会生效的。需要把持久化数据删除再重启,才有效果

|

||||

- OB_SYS_PASSWORD=obsyspassword

|

||||

# 不同于传统数据库,OceanBase 数据库的账号包含更多字段,包括用户名、租户名和集群名。经典格式为“用户名@租户名#集群名”

|

||||

# 比如用mysql客户端连接时,根据本文件的默认配置,应该指定 “-uroot@tenantname”

|

||||

- OB_TENANT_NAME=tenantname

|

||||

- OB_TENANT_PASSWORD=tenantpassword

|

||||

# MODE分为MINI和NORMAL, 后者会最大程度使用主机资源

|

||||

- MODE=NORMAL

|

||||

- OB_SERVER_IP=127.0.0.1

|

||||

# 更多环境变量配置见oceanbase官方文档: https://www.oceanbase.com/docs/common-oceanbase-database-cn-1000000002013494

|

||||

volumes:

|

||||

- ./ob/data:/root/ob

|

||||

- ./ob/config:/root/.obd/cluster

|

||||

- ./init.sql:/root/boot/init.d/init.sql

|

||||

healthcheck:

|

||||

# obclient -h127.0.0.1 -P2881 -uroot@tenantname -ptenantpassword -e "SELECT 1;"

|

||||

test: ["CMD-SHELL", "obclient -h$OB_SERVER_IP -P2881 -uroot@$OB_TENANT_NAME -p$OB_TENANT_PASSWORD -e \"SELECT 1;\""]

|

||||

interval: 30s

|

||||

timeout: 10s

|

||||

retries: 1000

|

||||

start_period: 10s

|

||||

mongo:

|

||||

image: mongo:5.0.18 # dockerhub

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/mongo:5.0.18 # 阿里云

|

||||

# image: mongo:4.4.29 # cpu不支持AVX时候使用

|

||||

container_name: mongo

|

||||

restart: always

|

||||

# ports:

|

||||

# - 27017:27017

|

||||

networks:

|

||||

- fastgpt

|

||||

command: mongod --keyFile /data/mongodb.key --replSet rs0

|

||||

environment:

|

||||

- MONGO_INITDB_ROOT_USERNAME=myusername

|

||||

- MONGO_INITDB_ROOT_PASSWORD=mypassword

|

||||

volumes:

|

||||

- ./mongo/data:/data/db

|

||||

entrypoint:

|

||||

- bash

|

||||

- -c

|

||||

- |

|

||||

openssl rand -base64 128 > /data/mongodb.key

|

||||

chmod 400 /data/mongodb.key

|

||||

chown 999:999 /data/mongodb.key

|

||||

echo 'const isInited = rs.status().ok === 1

|

||||

if(!isInited){

|

||||

rs.initiate({

|

||||

_id: "rs0",

|

||||

members: [

|

||||

{ _id: 0, host: "mongo:27017" }

|

||||

]

|

||||

})

|

||||

}' > /data/initReplicaSet.js

|

||||

# 启动MongoDB服务

|

||||

exec docker-entrypoint.sh "$$@" &

|

||||

|

||||

# 等待MongoDB服务启动

|

||||

until mongo -u myusername -p mypassword --authenticationDatabase admin --eval "print('waited for connection')"; do

|

||||

echo "Waiting for MongoDB to start..."

|

||||

sleep 2

|

||||

done

|

||||

|

||||

# 执行初始化副本集的脚本

|

||||

mongo -u myusername -p mypassword --authenticationDatabase admin /data/initReplicaSet.js

|

||||

|

||||

# 等待docker-entrypoint.sh脚本执行的MongoDB服务进程

|

||||

wait $$!

|

||||

|

||||

# fastgpt

|

||||

sandbox:

|

||||

container_name: sandbox

|

||||

image: ghcr.io/labring/fastgpt-sandbox:v4.9.3 # git

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt-sandbox:v4.9.3 # 阿里云

|

||||

networks:

|

||||

- fastgpt

|

||||

restart: always

|

||||

fastgpt:

|

||||

container_name: fastgpt

|

||||

image: ghcr.io/labring/fastgpt:v4.9.3 # git

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt:v4.9.3 # 阿里云

|

||||

ports:

|

||||

- 3000:3000

|

||||

networks:

|

||||

- fastgpt

|

||||

depends_on:

|

||||

mongo:

|

||||

condition: service_started

|

||||

ob:

|

||||

condition: service_healthy

|

||||

sandbox:

|

||||

condition: service_started

|

||||

restart: always

|

||||

environment:

|

||||

# 前端外部可访问的地址,用于自动补全文件资源路径。例如 https:fastgpt.cn,不能填 localhost。这个值可以不填,不填则发给模型的图片会是一个相对路径,而不是全路径,模型可能伪造Host。

|

||||

- FE_DOMAIN=

|

||||

# root 密码,用户名为: root。如果需要修改 root 密码,直接修改这个环境变量,并重启即可。

|

||||

- DEFAULT_ROOT_PSW=1234

|

||||

# # AI Proxy 的地址,如果配了该地址,优先使用

|

||||

# - AIPROXY_API_ENDPOINT=http://aiproxy:3000

|

||||

# # AI Proxy 的 Admin Token,与 AI Proxy 中的环境变量 ADMIN_KEY

|

||||

# - AIPROXY_API_TOKEN=aiproxy

|

||||

# 模型中转地址(如果用了 AI Proxy,下面 2 个就不需要了,旧版 OneAPI 用户,使用下面的变量)

|

||||

- # openai 基本地址,可用作中转。

|

||||

- OPENAI_BASE_URL=https://dashscope.aliyuncs.com/compatible-mode/v1

|

||||

- # OpenAI API Key

|

||||

- CHAT_API_KEY=sk-8990fa15a34b464a805237cfe9561f11

|

||||

# 数据库最大连接数

|

||||

- DB_MAX_LINK=30

|

||||

# 登录凭证密钥

|

||||

- TOKEN_KEY=any

|

||||

# root的密钥,常用于升级时候的初始化请求

|

||||

- ROOT_KEY=root_key

|

||||

# 文件阅读加密

|

||||

- FILE_TOKEN_KEY=filetoken

|

||||

# MongoDB 连接参数. 用户名myusername,密码mypassword。

|

||||

- MONGODB_URI=mongodb://myusername:mypassword@mongo:27017/fastgpt?authSource=admin

|

||||

# OceanBase 向量库连接参数

|

||||

- OCEANBASE_URL=mysql://root%40tenantname:tenantpassword@ob:2881/test

|

||||

# sandbox 地址

|

||||

- SANDBOX_URL=http://sandbox:3000

|

||||

# 日志等级: debug, info, warn, error

|

||||

- LOG_LEVEL=info

|

||||

- STORE_LOG_LEVEL=warn

|

||||

# 工作流最大运行次数

|

||||

- WORKFLOW_MAX_RUN_TIMES=1000

|

||||

# 批量执行节点,最大输入长度

|

||||

- WORKFLOW_MAX_LOOP_TIMES=100

|

||||

# 自定义跨域,不配置时,默认都允许跨域(多个域名通过逗号分割)

|

||||

- ALLOWED_ORIGINS=

|

||||

# 是否开启IP限制,默认不开启

|

||||

- USE_IP_LIMIT=false

|

||||

volumes:

|

||||

- ./config.json:/app/data/config.json

|

||||

|

||||

# AI Proxy

|

||||

aiproxy:

|

||||

image: ghcr.io/labring/aiproxy:v0.1.5

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/labring/aiproxy:v0.1.3 # 阿里云

|

||||

container_name: aiproxy

|

||||

restart: unless-stopped

|

||||

depends_on:

|

||||

aiproxy_pg:

|

||||

condition: service_healthy

|

||||

networks:

|

||||

- fastgpt

|

||||

environment:

|

||||

# 对应 fastgpt 里的AIPROXY_API_TOKEN

|

||||

- ADMIN_KEY=aiproxy

|

||||

# 错误日志详情保存时间(小时)

|

||||

- LOG_DETAIL_STORAGE_HOURS=1

|

||||

# 数据库连接地址

|

||||

- SQL_DSN=postgres://postgres:aiproxy@aiproxy_pg:5432/aiproxy

|

||||

# 最大重试次数

|

||||

- RETRY_TIMES=3

|

||||

# 不需要计费

|

||||

- BILLING_ENABLED=false

|

||||

# 不需要严格检测模型

|

||||

- DISABLE_MODEL_CONFIG=true

|

||||

healthcheck:

|

||||

test: ['CMD', 'curl', '-f', 'http://localhost:3000/api/status']

|

||||

interval: 5s

|

||||

timeout: 5s

|

||||

retries: 10

|

||||

aiproxy_pg:

|

||||

image: pgvector/pgvector:0.8.0-pg15 # docker hub

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/pgvector:v0.8.0-pg15 # 阿里云

|

||||

restart: unless-stopped

|

||||

container_name: aiproxy_pg

|

||||

volumes:

|

||||

- ./aiproxy_pg:/var/lib/postgresql/data

|

||||

networks:

|

||||

- fastgpt

|

||||

environment:

|

||||

TZ: Asia/Shanghai

|

||||

POSTGRES_USER: postgres

|

||||

POSTGRES_DB: aiproxy

|

||||

POSTGRES_PASSWORD: aiproxy

|

||||

healthcheck:

|

||||

test: ['CMD', 'pg_isready', '-U', 'postgres', '-d', 'aiproxy']

|

||||

interval: 5s

|

||||

timeout: 5s

|

||||

retries: 10

|

||||

networks:

|

||||

fastgpt:

|

||||

2

deploy/docker/docker-compose-oceanbase/init.sql

Normal file

@@ -0,0 +1,2 @@

|

||||

ALTER SYSTEM SET ob_vector_memory_limit_percentage = 30;

|

||||

|

||||

@@ -69,18 +69,31 @@ services:

|

||||

# 等待docker-entrypoint.sh脚本执行的MongoDB服务进程

|

||||

wait $$!

|

||||

|

||||

redis:

|

||||

image: redis:7.2-alpine

|

||||

container_name: redis

|

||||

# ports:

|

||||

# - 6379:6379

|

||||

networks:

|

||||

- fastgpt

|

||||

restart: always

|

||||

command: |

|

||||

redis-server --requirepass mypassword --loglevel warning --maxclients 10000 --appendonly yes --save 60 10 --maxmemory 4gb --maxmemory-policy noeviction

|

||||

volumes:

|

||||

- ./redis/data:/data

|

||||

|

||||

# fastgpt

|

||||

sandbox:

|

||||

container_name: sandbox

|

||||

image: ghcr.io/labring/fastgpt-sandbox:v4.9.0 # git

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt-sandbox:v4.9.0 # 阿里云

|

||||

image: ghcr.io/labring/fastgpt-sandbox:v4.9.4 # git

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt-sandbox:v4.9.4 # 阿里云

|

||||

networks:

|

||||

- fastgpt

|

||||

restart: always

|

||||

fastgpt:

|

||||

container_name: fastgpt

|

||||

image: ghcr.io/labring/fastgpt:v4.9.0 # git

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt:v4.9.0 # 阿里云

|

||||

image: ghcr.io/labring/fastgpt:v4.9.4 # git

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt:v4.9.4 # 阿里云

|

||||

ports:

|

||||

- 3000:3000

|

||||

networks:

|

||||

@@ -114,6 +127,8 @@ services:

|

||||

- MONGODB_URI=mongodb://myusername:mypassword@mongo:27017/fastgpt?authSource=admin

|

||||

# pg 连接参数

|

||||

- PG_URL=postgresql://username:password@pg:5432/postgres

|

||||

# Redis 连接参数

|

||||

- REDIS_URL=redis://default:mypassword@redis:6379

|

||||

# sandbox 地址

|

||||

- SANDBOX_URL=http://sandbox:3000

|

||||

# 日志等级: debug, info, warn, error

|

||||

@@ -127,12 +142,15 @@ services:

|

||||

- ALLOWED_ORIGINS=

|

||||

# 是否开启IP限制,默认不开启

|

||||

- USE_IP_LIMIT=false

|

||||

# 对话文件过期天数

|

||||

- CHAT_FILE_EXPIRE_TIME=7

|

||||

volumes:

|

||||

- ./config.json:/app/data/config.json

|

||||

|

||||

# AI Proxy

|

||||

aiproxy:

|

||||

image: 'ghcr.io/labring/aiproxy:latest'

|

||||

image: ghcr.io/labring/aiproxy:v0.1.5

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/labring/aiproxy:v0.1.3 # 阿里云

|

||||

container_name: aiproxy

|

||||

restart: unless-stopped

|

||||

depends_on:

|

||||

|

||||

@@ -51,17 +51,30 @@ services:

|

||||

|

||||

# 等待docker-entrypoint.sh脚本执行的MongoDB服务进程

|

||||

wait $$!

|

||||

redis:

|

||||

image: redis:7.2-alpine

|

||||

container_name: redis

|

||||

# ports:

|

||||

# - 6379:6379

|

||||

networks:

|

||||

- fastgpt

|

||||

restart: always

|

||||

command: |

|

||||

redis-server --requirepass mypassword --loglevel warning --maxclients 10000 --appendonly yes --save 60 10 --maxmemory 4gb --maxmemory-policy noeviction

|

||||

volumes:

|

||||

- ./redis/data:/data

|

||||

|

||||

sandbox:

|

||||

container_name: sandbox

|

||||

image: ghcr.io/labring/fastgpt-sandbox:v4.9.0 # git

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt-sandbox:v4.9.0 # 阿里云

|

||||

image: ghcr.io/labring/fastgpt-sandbox:v4.9.4 # git

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt-sandbox:v4.9.4 # 阿里云

|

||||

networks:

|

||||

- fastgpt

|

||||

restart: always

|

||||

fastgpt:

|

||||

container_name: fastgpt

|

||||

image: ghcr.io/labring/fastgpt:v4.9.0 # git

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt:v4.9.0 # 阿里云

|

||||

image: ghcr.io/labring/fastgpt:v4.9.4 # git

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/fastgpt/fastgpt:v4.9.4 # 阿里云

|

||||

ports:

|

||||

- 3000:3000

|

||||

networks:

|

||||

@@ -92,6 +105,8 @@ services:

|

||||

- FILE_TOKEN_KEY=filetoken

|

||||

# MongoDB 连接参数. 用户名myusername,密码mypassword。

|

||||

- MONGODB_URI=mongodb://myusername:mypassword@mongo:27017/fastgpt?authSource=admin

|

||||

# Redis 连接参数

|

||||

- REDIS_URI=redis://default:mypassword@redis:6379

|

||||

# zilliz 连接参数

|

||||

- MILVUS_ADDRESS=zilliz_cloud_address

|

||||

- MILVUS_TOKEN=zilliz_cloud_token

|

||||

@@ -108,12 +123,15 @@ services:

|

||||

- ALLOWED_ORIGINS=

|

||||

# 是否开启IP限制,默认不开启

|

||||

- USE_IP_LIMIT=false

|

||||

# 对话文件过期天数

|

||||

- CHAT_FILE_EXPIRE_TIME=7

|

||||

volumes:

|

||||

- ./config.json:/app/data/config.json

|

||||

|

||||

# AI Proxy

|

||||

aiproxy:

|

||||

image: 'ghcr.io/labring/aiproxy:latest'

|

||||

image: ghcr.io/labring/aiproxy:v0.1.3

|

||||

# image: registry.cn-hangzhou.aliyuncs.com/labring/aiproxy:v0.1.3 # 阿里云

|

||||

container_name: aiproxy

|

||||

restart: unless-stopped

|

||||

depends_on:

|

||||

|

||||

@@ -7,7 +7,7 @@ data:

|

||||

"vectorMaxProcess": 15,

|

||||

"qaMaxProcess": 15,

|

||||

"vlmMaxProcess": 15,

|

||||

"pgHNSWEfSearch": 100

|

||||

"hnswEfSearch": 100

|

||||

},

|

||||

"llmModels": [

|

||||

{

|

||||

|

||||

BIN

docSite/assets/imgs/Ollama-aiproxy1.png

Normal file

|

After Width: | Height: | Size: 68 KiB |

BIN

docSite/assets/imgs/Ollama-aiproxy2.png

Normal file

|

After Width: | Height: | Size: 9.0 KiB |

BIN

docSite/assets/imgs/Ollama-aiproxy3.png

Normal file

|

After Width: | Height: | Size: 179 KiB |

BIN

docSite/assets/imgs/Ollama-direct1.png

Normal file

|

After Width: | Height: | Size: 72 KiB |

BIN

docSite/assets/imgs/Ollama-models1.png

Normal file

|

After Width: | Height: | Size: 20 KiB |

BIN

docSite/assets/imgs/Ollama-models2.png

Normal file

|

After Width: | Height: | Size: 138 KiB |

BIN

docSite/assets/imgs/Ollama-models3.png

Normal file

|

After Width: | Height: | Size: 122 KiB |

BIN

docSite/assets/imgs/Ollama-models4.png

Normal file

|

After Width: | Height: | Size: 124 KiB |

BIN

docSite/assets/imgs/Ollama-oneapi1.png

Normal file

|

After Width: | Height: | Size: 94 KiB |

BIN

docSite/assets/imgs/Ollama-oneapi2.png

Normal file

|

After Width: | Height: | Size: 57 KiB |

BIN

docSite/assets/imgs/Ollama-oneapi3 .png

Normal file

|

After Width: | Height: | Size: 76 KiB |

BIN

docSite/assets/imgs/Ollama-pull.png

Normal file

|

After Width: | Height: | Size: 26 KiB |

BIN

docSite/assets/imgs/chunkReader1.png

Normal file

|

After Width: | Height: | Size: 64 KiB |

BIN

docSite/assets/imgs/chunkReader2.jpg

Normal file

|

After Width: | Height: | Size: 159 KiB |

BIN

docSite/assets/imgs/chunkReader3.webp

Normal file

|

After Width: | Height: | Size: 71 KiB |

BIN

docSite/assets/imgs/chunkReader4.jpg

Normal file

|

After Width: | Height: | Size: 139 KiB |

BIN

docSite/assets/imgs/chunkReader5.jpg

Normal file

|

After Width: | Height: | Size: 57 KiB |

BIN

docSite/assets/imgs/chunkReader6.png

Normal file

|

After Width: | Height: | Size: 122 KiB |

BIN

docSite/assets/imgs/chunkReader7.jpg

Normal file

|

After Width: | Height: | Size: 44 KiB |

BIN

docSite/assets/imgs/chunkReader8.png

Normal file

|

After Width: | Height: | Size: 197 KiB |

BIN

docSite/assets/imgs/chunkReader9.jpg

Normal file

|

After Width: | Height: | Size: 120 KiB |

|

After Width: | Height: | Size: 170 KiB |

|

After Width: | Height: | Size: 102 KiB |

|

After Width: | Height: | Size: 70 KiB |

|

After Width: | Height: | Size: 89 KiB |

|

After Width: | Height: | Size: 87 KiB |

BIN

docSite/assets/imgs/sealos-redis1.png

Normal file

|

After Width: | Height: | Size: 284 KiB |

BIN

docSite/assets/imgs/sealos-redis2.png

Normal file

|

After Width: | Height: | Size: 294 KiB |

BIN

docSite/assets/imgs/sealos-redis3.png

Normal file

|

After Width: | Height: | Size: 86 KiB |

BIN

docSite/assets/imgs/sso1.png

Normal file

|

After Width: | Height: | Size: 133 KiB |

BIN

docSite/assets/imgs/sso10.png

Normal file

|

After Width: | Height: | Size: 124 KiB |

BIN

docSite/assets/imgs/sso11.png

Normal file

|

After Width: | Height: | Size: 117 KiB |

BIN

docSite/assets/imgs/sso12.png

Normal file

|

After Width: | Height: | Size: 79 KiB |

BIN

docSite/assets/imgs/sso13.png

Normal file

|

After Width: | Height: | Size: 319 KiB |

BIN

docSite/assets/imgs/sso14.png

Normal file

|

After Width: | Height: | Size: 174 KiB |

BIN

docSite/assets/imgs/sso15.png

Normal file

|

After Width: | Height: | Size: 3.2 KiB |

BIN

docSite/assets/imgs/sso16.png

Normal file

|

After Width: | Height: | Size: 2.7 KiB |

BIN

docSite/assets/imgs/sso17.png

Normal file

|

After Width: | Height: | Size: 3.8 KiB |

BIN

docSite/assets/imgs/sso18.png

Normal file

|

After Width: | Height: | Size: 177 KiB |

BIN

docSite/assets/imgs/sso2.png

Normal file

|

After Width: | Height: | Size: 255 KiB |

BIN

docSite/assets/imgs/sso3.png

Normal file

|

After Width: | Height: | Size: 24 KiB |

BIN

docSite/assets/imgs/sso4.png

Normal file

|

After Width: | Height: | Size: 117 KiB |

BIN

docSite/assets/imgs/sso5.png

Normal file

|

After Width: | Height: | Size: 86 KiB |

BIN

docSite/assets/imgs/sso6.png

Normal file

|

After Width: | Height: | Size: 26 KiB |

BIN

docSite/assets/imgs/sso7.png

Normal file

|

After Width: | Height: | Size: 140 KiB |

BIN

docSite/assets/imgs/sso8.png

Normal file

|

After Width: | Height: | Size: 108 KiB |

BIN

docSite/assets/imgs/sso9.png

Normal file

|

After Width: | Height: | Size: 119 KiB |

BIN

docSite/assets/imgs/sso_update1.png

Normal file

|

After Width: | Height: | Size: 39 KiB |

BIN

docSite/assets/imgs/teammode.png

Normal file

|

After Width: | Height: | Size: 265 KiB |

@@ -25,7 +25,7 @@ weight: 707

|

||||

"qaMaxProcess": 15, // 问答拆分线程数量

|

||||

"vlmMaxProcess": 15, // 图片理解模型最大处理进程

|

||||

"tokenWorkers": 50, // Token 计算线程保持数,会持续占用内存,不能设置太大。

|

||||

"pgHNSWEfSearch": 100, // 向量搜索参数。越大,搜索越精确,但是速度越慢。设置为100,有99%+精度。

|

||||

"hnswEfSearch": 100, // 向量搜索参数,仅对 PG 和 OB 生效。越大,搜索越精确,但是速度越慢。设置为100,有99%+精度。

|

||||

"customPdfParse": { // 4.9.0 新增配置

|

||||

"url": "", // 自定义 PDF 解析服务地址

|

||||

"key": "", // 自定义 PDF 解析服务密钥

|

||||

|

||||

@@ -31,9 +31,9 @@ weight: 920

|

||||

|

||||

3 个模型代码分别为:

|

||||

|

||||

1. [https://github.com/labring/FastGPT/tree/main/plugins/rerank-bge/bge-reranker-base](https://github.com/labring/FastGPT/tree/main/plugins/rerank-bge/bge-reranker-base)

|

||||

2. [https://github.com/labring/FastGPT/tree/main/plugins/rerank-bge/bge-reranker-large](https://github.com/labring/FastGPT/tree/main/plugins/rerank-bge/bge-reranker-large)

|

||||

3. [https://github.com/labring/FastGPT/tree/main/plugins/rerank-bge/bge-reranker-v2-m3](https://github.com/labring/FastGPT/tree/main/plugins/rerank-bge/bge-reranker-v2-m3)

|

||||

1. [https://github.com/labring/FastGPT/tree/main/plugins/model/rerank-bge/bge-reranker-base](https://github.com/labring/FastGPT/tree/main/plugins/model/rerank-bge/bge-reranker-base)

|

||||

2. [https://github.com/labring/FastGPT/tree/main/plugins/model/rerank-bge/bge-reranker-large](https://github.com/labring/FastGPT/tree/main/plugins/model/rerank-bge/bge-reranker-large)

|

||||

3. [https://github.com/labring/FastGPT/tree/main/plugins/model/rerank-bge/bge-reranker-v2-m3](https://github.com/labring/FastGPT/tree/main/plugins/model/rerank-bge/bge-reranker-v2-m3)

|

||||

|

||||

### 3. 安装依赖

|

||||

|

||||

|

||||

@@ -46,7 +46,7 @@ ChatGLM2-6B 是开源中英双语对话模型 ChatGLM-6B 的第二代版本,

|

||||

### 源码部署

|

||||

|

||||

1. 根据上面的环境配置配置好环境,具体教程自行 GPT;

|

||||

2. 下载 [python 文件](https://github.com/labring/FastGPT/blob/main/files/models/ChatGLM2/openai_api.py)

|

||||

2. 下载 [python 文件](https://github.com/labring/FastGPT/blob/main/plugins/model/llm-ChatGLM2/openai_api.py)

|

||||

3. 在命令行输入命令 `pip install -r requirements.txt`;

|

||||

4. 打开你需要启动的 py 文件,在代码的 `verify_token` 方法中配置 token,这里的 token 只是加一层验证,防止接口被人盗用;

|

||||

5. 执行命令 `python openai_api.py --model_name 16`。这里的数字根据上面的配置进行选择。

|

||||

|

||||

184

docSite/content/zh-cn/docs/development/custom-models/ollama.md

Normal file

@@ -0,0 +1,184 @@

|

||||

---

|

||||

title: '使用 Ollama 接入本地模型 '

|

||||

description: ' 采用 Ollama 部署自己的模型'

|

||||

icon: 'api'

|

||||

draft: false

|

||||

toc: true

|

||||

weight: 950

|

||||

---

|

||||

|

||||

[Ollama](https://ollama.com/) 是一个开源的AI大模型部署工具,专注于简化大语言模型的部署和使用,支持一键下载和运行各种大模型。

|

||||

|

||||

## 安装 Ollama

|

||||

|

||||

Ollama 本身支持多种安装方式,但是推荐使用 Docker 拉取镜像部署。如果是个人设备上安装了 Ollama 后续需要解决如何让 Docker 中 FastGPT 容器访问宿主机 Ollama的问题,较为麻烦。

|

||||

|

||||

### Docker 安装(推荐)

|

||||

|

||||

你可以使用 Ollama 官方的 Docker 镜像来一键安装和启动 Ollama 服务(确保你的机器上已经安装了 Docker),命令如下:

|

||||

|

||||

```bash

|

||||

docker pull ollama/ollama

|

||||

docker run --rm -d --name ollama -p 11434:11434 ollama/ollama

|

||||

```

|

||||

|

||||

如果你的 FastGPT 是在 Docker 中进行部署的,建议在拉取 Ollama 镜像时保证和 FastGPT 镜像处于同一网络,否则可能出现 FastGPT 无法访问的问题,命令如下:

|

||||

|

||||

```bash

|

||||

docker run --rm -d --name ollama --network (你的 Fastgpt 容器所在网络) -p 11434:11434 ollama/ollama

|

||||

```

|

||||

|

||||

### 主机安装

|

||||

|

||||

如果你不想使用 Docker ,也可以采用主机安装,以下是主机安装的一些方式。

|

||||

|

||||

#### MacOS

|

||||

|

||||

如果你使用的是 macOS,且系统中已经安装了 Homebrew 包管理器,可通过以下命令来安装 Ollama:

|

||||

|

||||

```bash

|

||||

brew install ollama

|

||||

ollama serve #安装完成后,使用该命令启动服务

|

||||

```

|

||||

|

||||

#### Linux

|

||||

|

||||

在 Linux 系统上,你可以借助包管理器来安装 Ollama。以 Ubuntu 为例,在终端执行以下命令:

|

||||

|

||||

```bash

|

||||

curl https://ollama.com/install.sh | sh #此命令会从官方网站下载并执行安装脚本。

|

||||

ollama serve #安装完成后,同样启动服务

|

||||

```

|

||||

|

||||

#### Windows

|

||||

|

||||

在 Windows 系统中,你可以从 Ollama 官方网站 下载 Windows 版本的安装程序。下载完成后,运行安装程序,按照安装向导的提示完成安装。安装完成后,在命令提示符或 PowerShell 中启动服务:

|

||||

|

||||

```bash

|

||||

ollama serve #安装完成并启动服务后,你可以在浏览器中访问 http://localhost:11434 来验证 Ollama 是否安装成功。

|

||||

```

|

||||

|

||||

#### 补充说明

|

||||

|

||||

如果你是采用的主机应用 Ollama 而不是镜像,需要确保你的 Ollama 可以监听0.0.0.0。

|

||||

|

||||

##### 1. Linxu 系统

|

||||

|

||||

如果 Ollama 作为 systemd 服务运行,打开终端,编辑 Ollama 的 systemd 服务文件,使用命令sudo systemctl edit ollama.service,在[Service]部分添加Environment="OLLAMA_HOST=0.0.0.0"。保存并退出编辑器,然后执行sudo systemctl daemon - reload和sudo systemctl restart ollama使配置生效。

|

||||

|

||||

##### 2. MacOS 系统

|

||||

|

||||

打开终端,使用launchctl setenv ollama_host "0.0.0.0"命令设置环境变量,然后重启 Ollama 应用程序以使更改生效。

|

||||

|

||||

##### 3. Windows 系统

|

||||

|

||||

通过 “开始” 菜单或搜索栏打开 “编辑系统环境变量”,在 “系统属性” 窗口中点击 “环境变量”,在 “系统变量” 部分点击 “新建”,创建一个名为OLLAMA_HOST的变量,变量值设置为0.0.0.0,点击 “确定” 保存更改,最后从 “开始” 菜单重启 Ollama 应用程序。

|

||||

|

||||

### Ollama 拉取模型镜像

|

||||

|

||||

在安装后 Ollama 后,本地是没有模型镜像的,需要自己去拉取 Ollama 中的模型镜像。命令如下:

|

||||

|

||||

```bash

|

||||

# Docker 部署需要先进容器,命令为: docker exec -it < Ollama 容器名 > /bin/sh

|

||||

ollama pull <模型名>

|

||||

```

|

||||

|

||||

|

||||

|

||||

|

||||

### 测试通信

|

||||

|

||||

在安装完成后,需要进行检测测试,首先进入 FastGPT 所在的容器,尝试访问自己的 Ollama ,命令如下:

|

||||

|

||||

```bash

|

||||

docker exec -it < FastGPT 所在的容器名 > /bin/sh

|

||||

curl http://XXX.XXX.XXX.XXX:11434 #容器部署地址为“http://<容器名>:<端口>”,主机安装地址为"http://<主机IP>:<端口>",主机IP不可为localhost

|

||||

```

|

||||

|

||||

看到访问显示自己的 Ollama 服务以及启动,说明可以正常通信。

|

||||

|

||||

## 将 Ollama 接入 FastGPT

|

||||

|

||||

### 1. 查看 Ollama 所拥有的模型

|

||||

|

||||

首先采用下述命令查看 Ollama 中所拥有的模型,

|

||||

|

||||

```bash

|

||||

# Docker 部署 Ollama,需要此命令 docker exec -it < Ollama 容器名 > /bin/sh

|

||||

ollama ls

|

||||

```

|

||||

|

||||

|

||||

|

||||

### 2. AI Proxy 接入

|

||||

|

||||

如果你采用的是 FastGPT 中的默认配置文件部署[这里](/docs/development/docker.md),即默认采用 AI Proxy 进行启动。

|

||||

|

||||

|

||||

|

||||

以及在确保你的 FastGPT 可以直接访问 Ollama 容器的情况下,无法访问,参考上文[点此跳转](#安装-ollama)的安装过程,检测是不是主机不能监测0.0.0.0,或者容器不在同一个网络。

|

||||

|

||||

|

||||

|

||||

在 FastGPT 中点击账号->模型提供商->模型配置->新增模型,添加自己的模型即可,添加模型时需要保证模型ID和 OneAPI 中的模型名称一致。详细参考[这里](/docs/development/modelConfig/intro.md)

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

运行 FastGPT ,在页面中选择账号->模型提供商->模型渠道->新增渠道。之后,在渠道选择中选择 Ollama ,然后加入自己拉取的模型,填入代理地址,如果是容器中安装 Ollama ,代理地址为http://地址:端口,补充:容器部署地址为“http://<容器名>:<端口>”,主机安装地址为"http://<主机IP>:<端口>",主机IP不可为localhost

|

||||

|

||||

|

||||

|

||||

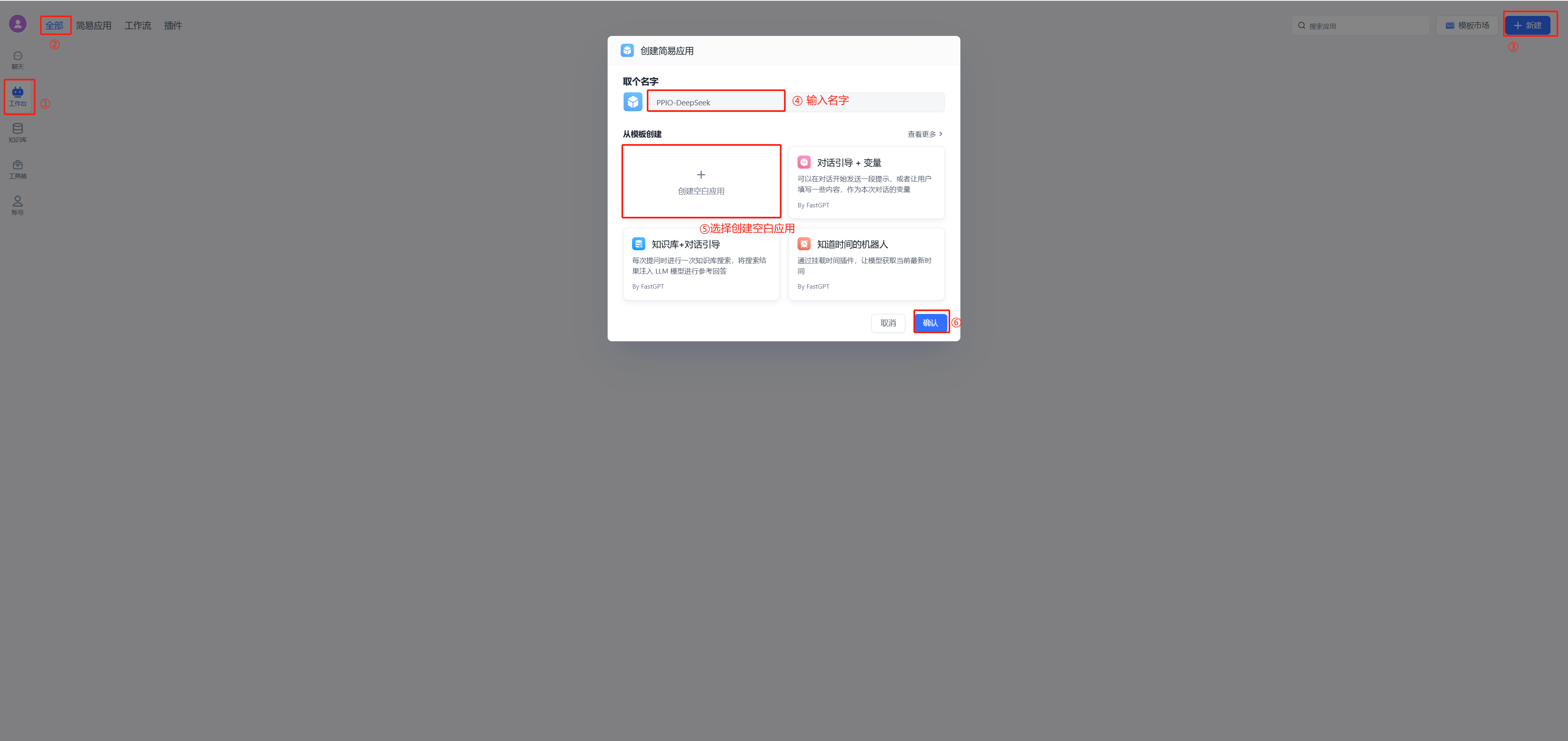

在工作台中创建一个应用,选择自己之前添加的模型,此处模型名称为自己当时设置的别名。注:同一个模型无法多次添加,系统会采取最新添加时设置的别名。

|

||||

|

||||

|

||||

|

||||

### 3. OneAPI 接入

|

||||

|

||||

如果你想使用 OneAPI ,首先需要拉取 OneAPI 镜像,然后将其在 FastGPT 容器的网络中运行。具体命令如下:

|

||||

|

||||

```bash

|

||||

# 拉取 oneAPI 镜像

|

||||

docker pull intel/oneapi-hpckit

|

||||

|

||||

# 运行容器并指定自定义网络和容器名

|

||||

docker run -it --network < FastGPT 网络 > --name 容器名 intel/oneapi-hpckit /bin/bash

|

||||

```

|

||||

|

||||

进入 OneAPI 页面,添加新的渠道,类型选择 Ollama ,在模型中填入自己 Ollama 中的模型,需要保证添加的模型名称和 Ollama 中一致,再在下方填入自己的 Ollama 代理地址,默认http://地址:端口,不需要填写/v1。添加成功后在 OneAPI 进行渠道测试,测试成功则说明添加成功。此处演示采用的是 Docker 部署 Ollama 的效果,主机 Ollama需要修改代理地址为http://<主机IP>:<端口>

|

||||

|

||||

|

||||

|

||||

渠道添加成功后,点击令牌,点击添加令牌,填写名称,修改配置。

|

||||

|

||||

|

||||

|

||||

修改部署 FastGPT 的 docker-compose.yml 文件,在其中将 AI Proxy 的使用注释,在 OPENAI_BASE_URL 中加入自己的 OneAPI 开放地址,默认是http://地址:端口/v1,v1必须填写。KEY 中填写自己在 OneAPI 的令牌。

|

||||

|

||||

|

||||

|

||||

[直接跳转5](#5-模型添加和使用)添加模型,并使用。

|

||||

|

||||

### 4. 直接接入

|

||||

|

||||

如果你既不想使用 AI Proxy,也不想使用 OneAPI,也可以选择直接接入,修改部署 FastGPT 的 docker-compose.yml 文件,在其中将 AI Proxy 的使用注释,采用和 OneAPI 的类似配置。注释掉 AIProxy 相关代码,在OPENAI_BASE_URL中加入自己的 Ollama 开放地址,默认是http://地址:端口/v1,强调:v1必须填写。在KEY中随便填入,因为 Ollama 默认没有鉴权,如果开启鉴权,请自行填写。其他操作和在 OneAPI 中加入 Ollama 一致,只需在 FastGPT 中加入自己的模型即可使用。此处演示采用的是 Docker 部署 Ollama 的效果,主机 Ollama需要修改代理地址为http://<主机IP>:<端口>

|

||||

|

||||

|

||||

|

||||

完成后[点击这里](#5-模型添加和使用)进行模型添加并使用。

|

||||

|

||||

### 5. 模型添加和使用

|

||||

|

||||

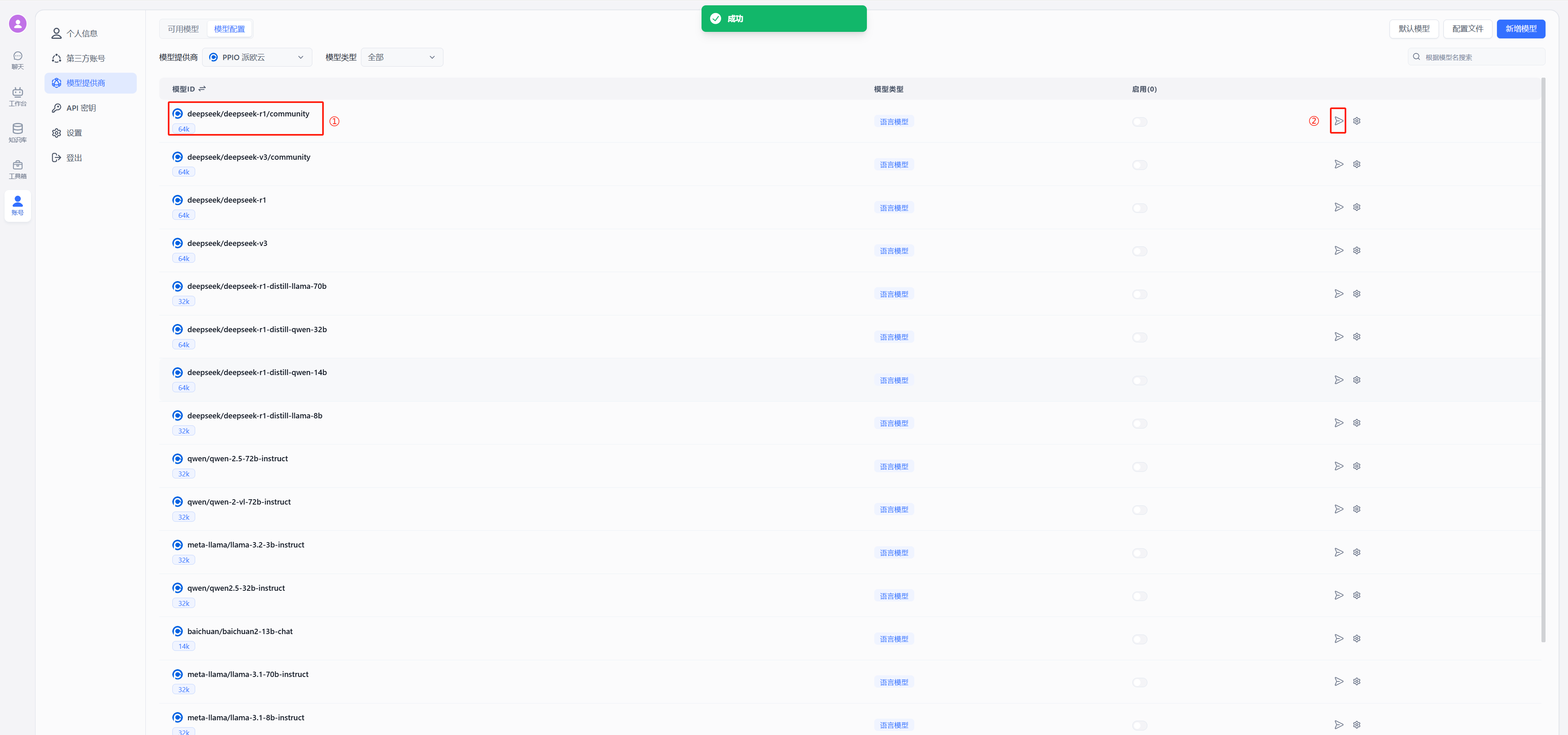

在 FastGPT 中点击账号->模型提供商->模型配置->新增模型,添加自己的模型即可,添加模型时需要保证模型ID和 OneAPI 中的模型名称一致。

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

在工作台中创建一个应用,选择自己之前添加的模型,此处模型名称为自己当时设置的别名。注:同一个模型无法多次添加,系统会采取最新添加时设置的别名。

|

||||

|

||||

|

||||

|

||||

### 6. 补充

|

||||

上述接入 Ollama 的代理地址中,主机安装 Ollama 的地址为“http://<主机IP>:<端口>”,容器部署 Ollama 地址为“http://<容器名>:<端口>”

|

||||

@@ -56,7 +56,7 @@ weight: 707

|

||||

|

||||

### zilliz cloud版本

|

||||

|

||||

Milvus 的全托管服务,性能优于 Milvus 并提供 SLA,点击使用 [Zilliz Cloud](https://zilliz.com.cn/)。

|

||||

Zilliz Cloud 由 Milvus 原厂打造,是全托管的 SaaS 向量数据库服务,性能优于 Milvus 并提供 SLA,点击使用 [Zilliz Cloud](https://zilliz.com.cn/)。

|

||||

|

||||

由于向量库使用了 Cloud,无需占用本地资源,无需太关注。

|

||||

|

||||

@@ -135,6 +135,9 @@ curl -O https://raw.githubusercontent.com/labring/FastGPT/main/projects/app/data

|

||||

|

||||

# pgvector 版本(测试推荐,简单快捷)

|

||||

curl -o docker-compose.yml https://raw.githubusercontent.com/labring/FastGPT/main/deploy/docker/docker-compose-pgvector.yml

|

||||

# oceanbase 版本(需要将init.sql和docker-compose.yml放在同一个文件夹,方便挂载)

|

||||

# curl -o docker-compose.yml https://raw.githubusercontent.com/labring/FastGPT/main/deploy/docker/docker-compose-oceanbase/docker-compose.yml

|

||||

# curl -o init.sql https://raw.githubusercontent.com/labring/FastGPT/main/deploy/docker/docker-compose-oceanbase/init.sql

|

||||

# milvus 版本

|

||||

# curl -o docker-compose.yml https://raw.githubusercontent.com/labring/FastGPT/main/deploy/docker/docker-compose-milvus.yml

|

||||

# zilliz 版本

|

||||

@@ -151,6 +154,13 @@ curl -o docker-compose.yml https://raw.githubusercontent.com/labring/FastGPT/mai

|

||||

|

||||

无需操作

|

||||

|

||||

{{< /markdownify >}}

|

||||

{{< /tab >}}

|

||||

{{< tab tabName="Oceanbase版本" >}}

|

||||

{{< markdownify >}}

|

||||

|

||||

无需操作

|

||||

|

||||

{{< /markdownify >}}

|

||||

{{< /tab >}}

|

||||

{{< tab tabName="Milvus版本" >}}

|

||||

|

||||

@@ -71,7 +71,7 @@ Mongo 数据库需要注意,需要注意在连接地址中增加 `directConnec

|

||||

- `vectorMaxProcess`: 向量生成最大进程,根据数据库和 key 的并发数来决定,通常单个 120 号,2c4g 服务器设置 10~15。

|

||||

- `qaMaxProcess`: QA 生成最大进程

|

||||

- `vlmMaxProcess`: 图片理解模型最大进程

|

||||

- `pgHNSWEfSearch`: PostgreSQL vector 索引参数,越大搜索精度越高但是速度越慢,具体可看 pgvector 官方说明。

|

||||

- `hnswEfSearch`: 向量搜索参数,仅对 PG 和 OB 生效,越大搜索精度越高但是速度越慢。

|

||||

|

||||

### 5. 运行

|

||||

|

||||

|

||||

@@ -302,7 +302,7 @@ OneAPI 的语言识别接口,无法正确的识别其他模型(会始终识

|

||||

"vectorMaxProcess": 15, // 向量处理线程数量

|

||||

"qaMaxProcess": 15, // 问答拆分线程数量

|

||||

"tokenWorkers": 50, // Token 计算线程保持数,会持续占用内存,不能设置太大。

|

||||

"pgHNSWEfSearch": 100 // 向量搜索参数。越大,搜索越精确,但是速度越慢。设置为100,有99%+精度。

|

||||

"hnswEfSearch": 100 // 向量搜索参数,仅对 PG 和 OB 生效。越大,搜索越精确,但是速度越慢。设置为100,有99%+精度。

|

||||

},

|

||||

"llmModels": [

|

||||

{

|

||||

|

||||

100

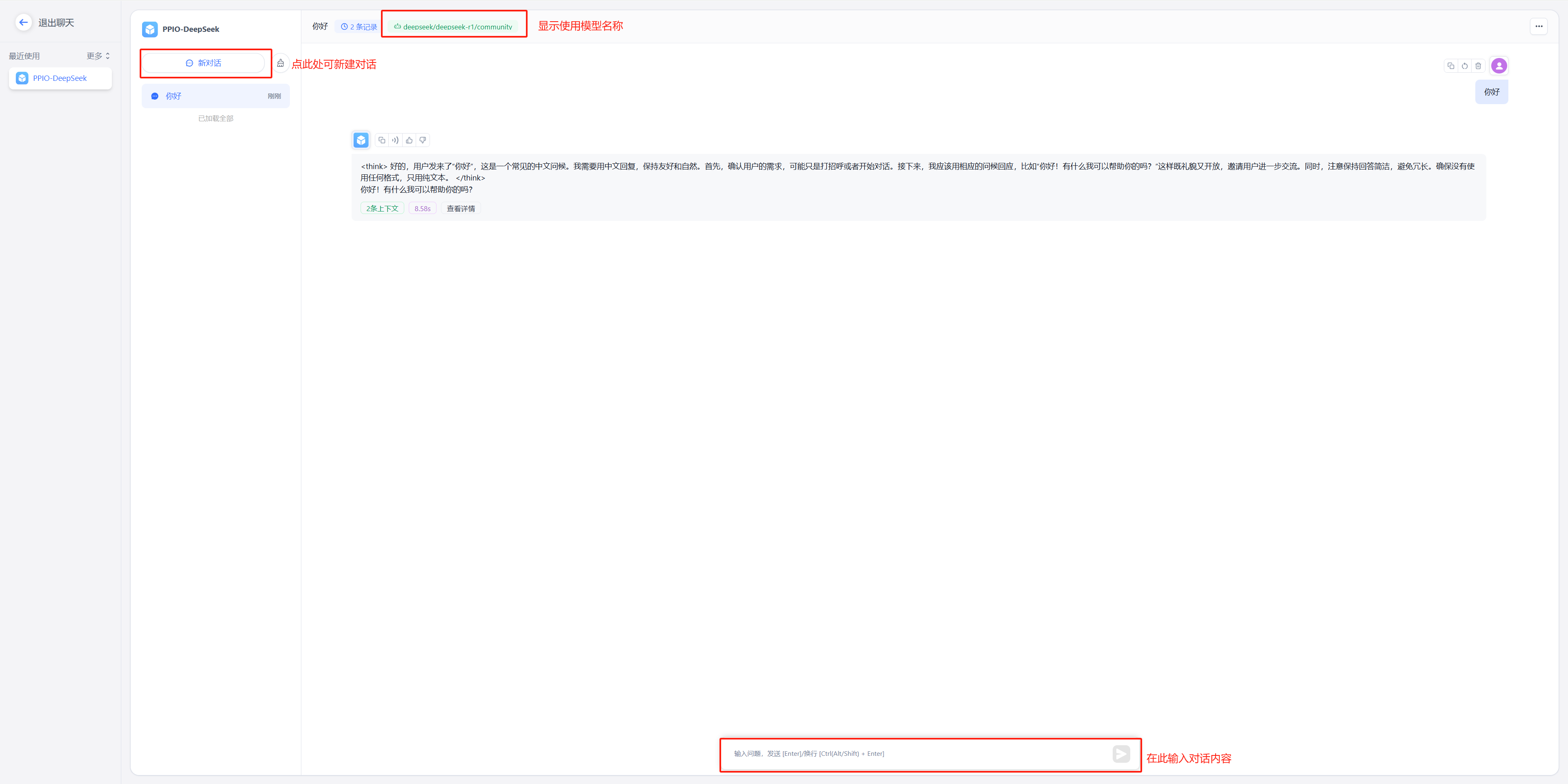

docSite/content/zh-cn/docs/development/modelConfig/ppio.md

Normal file

@@ -0,0 +1,100 @@

|

||||

---

|

||||

title: '通过 PPIO LLM API 接入模型'

|

||||

description: '通过 PPIO LLM API 接入模型'

|

||||

icon: 'api'

|

||||

draft: false

|

||||

toc: true

|

||||

weight: 747

|

||||

---

|

||||

|

||||

FastGPT 还可以通过 PPIO LLM API 接入模型。

|

||||

{{% alert context="warning" %}}

|

||||

以下内容搬运自 [FastGPT 接入 PPIO LLM API](https://ppinfra.com/docs/third-party/fastgpt-use),可能会有更新不及时的情况。

|

||||

{{% /alert %}}

|

||||

|

||||

FastGPT 是一个将 AI 开发、部署和使用全流程简化为可视化操作的平台。它使开发者不需要深入研究算法,

|

||||

用户也不需要掌握复杂技术,通过一站式服务将人工智能技术变成易于使用的工具。

|

||||

|

||||

PPIO 派欧云提供简单易用的 API 接口,让开发者能够轻松调用 DeepSeek 等模型。

|

||||

|

||||

- 对开发者:无需重构架构,3 个接口完成从文本生成到决策推理的全场景接入,像搭积木一样设计 AI 工作流;

|

||||

- 对生态:自动适配从中小应用到企业级系统的资源需求,让智能随业务自然生长。

|

||||

|

||||

下方教程提供完整接入方案(含密钥配置),帮助您快速将 FastGPT 与 PPIO API 连接起来。

|

||||

|

||||

## 1. 配置前置条件

|

||||

|

||||

(1) 获取 API 接口地址

|

||||

|

||||

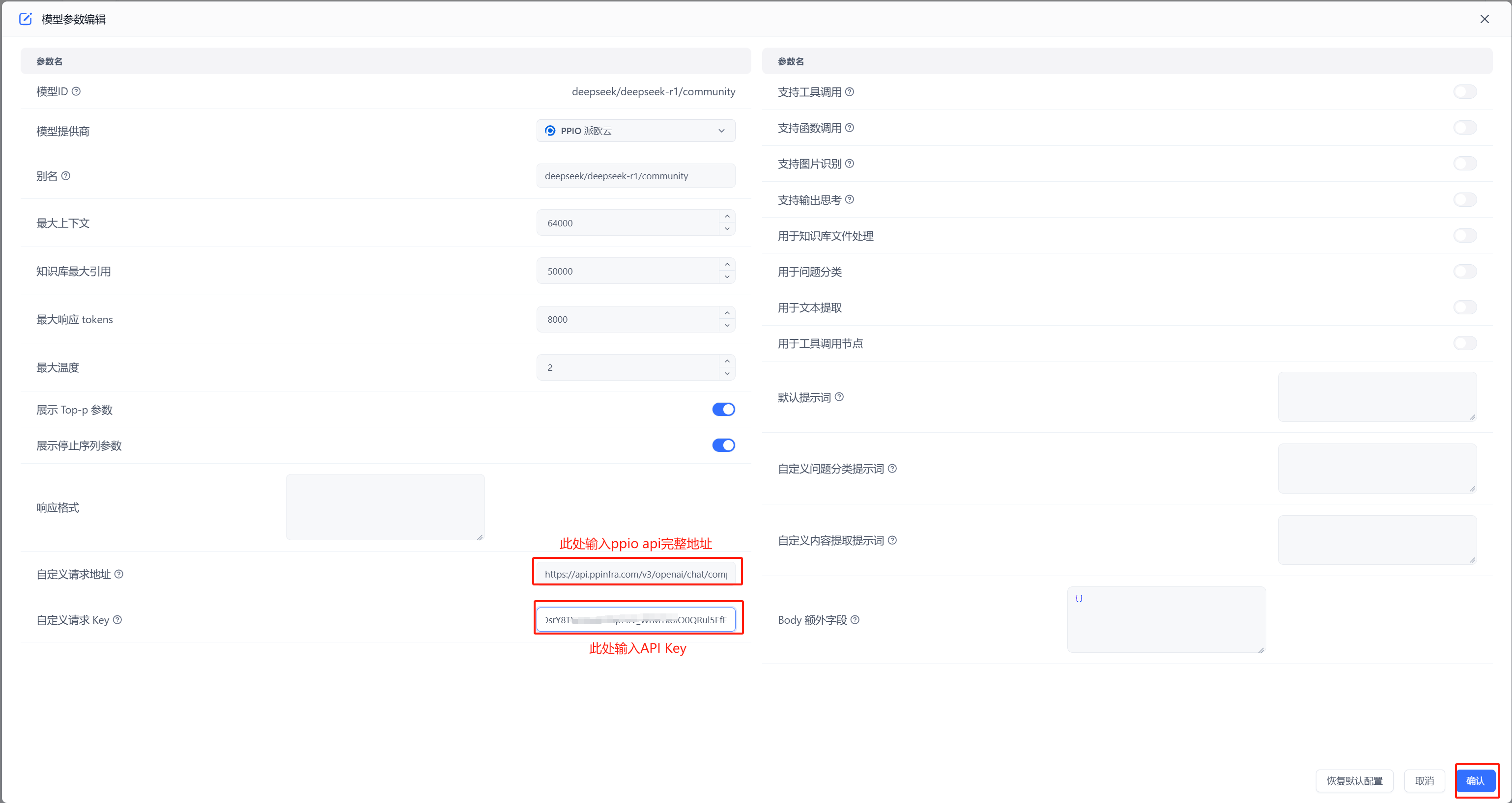

固定为: `https://api.ppinfra.com/v3/openai/chat/completions`。

|

||||

|

||||

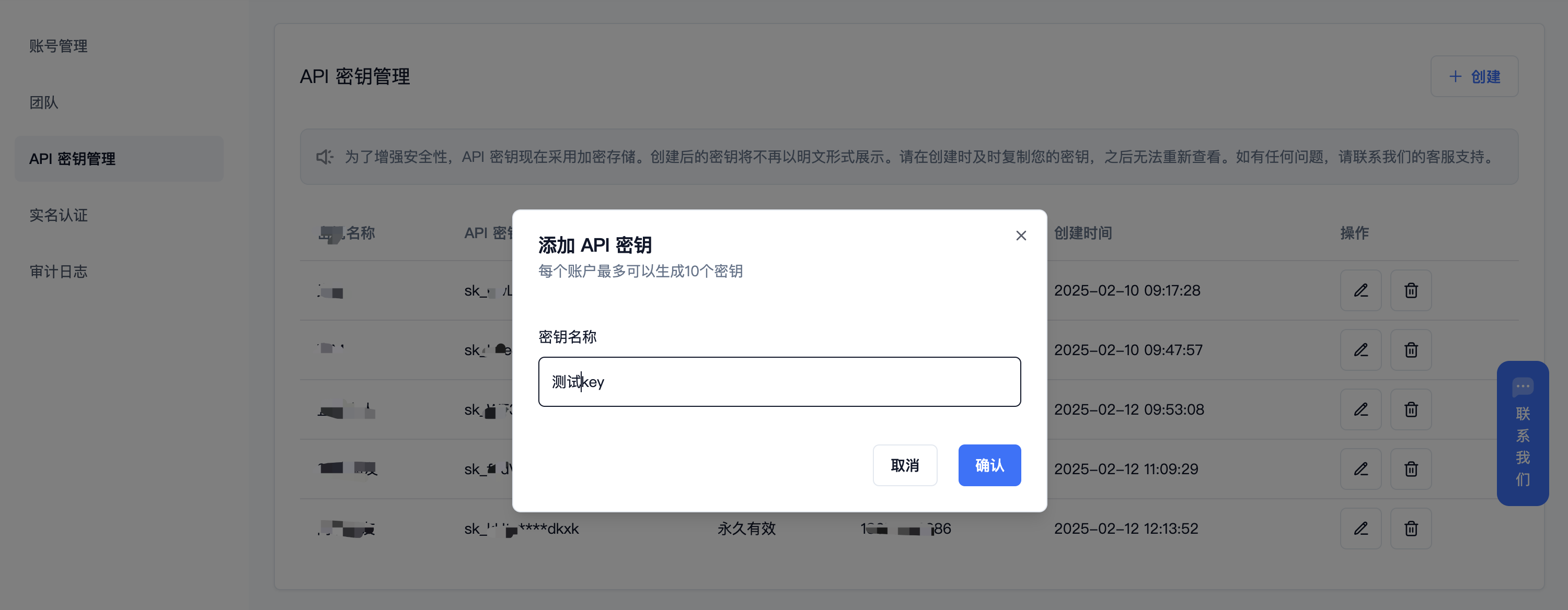

(2) 获取 【API 密钥】

|

||||

|

||||

登录派欧云控制台 [API 秘钥管理](https://www.ppinfra.com/settings/key-management) 页面,点击创建按钮。

|

||||

注册账号填写邀请码【VOJL20】得 50 代金券

|

||||

|

||||

|

||||

|

||||

(3) 生成并保存 【API 密钥】

|

||||

{{% alert context="warning" %}}

|

||||

秘钥在服务端是加密存储,请在生成时保存好秘钥;若遗失可以在控制台上删除并创建一个新的秘钥。

|

||||

{{% /alert %}}

|

||||

|

||||

|

||||

|

||||

|

||||

(4) 获取需要使用的模型 ID

|

||||

|

||||

deepseek 系列:

|

||||

|

||||

- DeepSeek R1:deepseek/deepseek-r1/community

|

||||

|

||||

- DeepSeek V3:deepseek/deepseek-v3/community

|

||||

|

||||

其他模型 ID、最大上下文及价格可参考:[模型列表](https://ppinfra.com/model-api/pricing)

|

||||

|

||||

## 2. 部署最新版 FastGPT 到本地环境

|

||||

{{% alert context="warning" %}}

|

||||

请使用 v4.8.22 以上版本,部署参考: https://doc.tryfastgpt.ai/docs/development/intro/

|

||||

{{% /alert %}}

|

||||

|

||||

## 3. 模型配置(下面两种方式二选其一)

|

||||

|

||||

(1)通过 OneAPI 接入模型 PPIO 模型: 参考 OneAPI 使用文档,修改 FastGPT 的环境变量 在 One API 生成令牌后,FastGPT 可以通过修改 baseurl 和 key 去请求到 One API,再由 One API 去请求不同的模型。修改下面两个环境变量: 务必写上 v1。如果在同一个网络内,可改成内网地址。

|

||||

|

||||

OPENAI_BASE_URL= http://OneAPI-IP:OneAPI-PORT/v1

|

||||

|

||||

下面的 key 是由 One API 提供的令牌 CHAT_API_KEY=sk-UyVQcpQWMU7ChTVl74B562C28e3c46Fe8f16E6D8AeF8736e

|

||||

|

||||

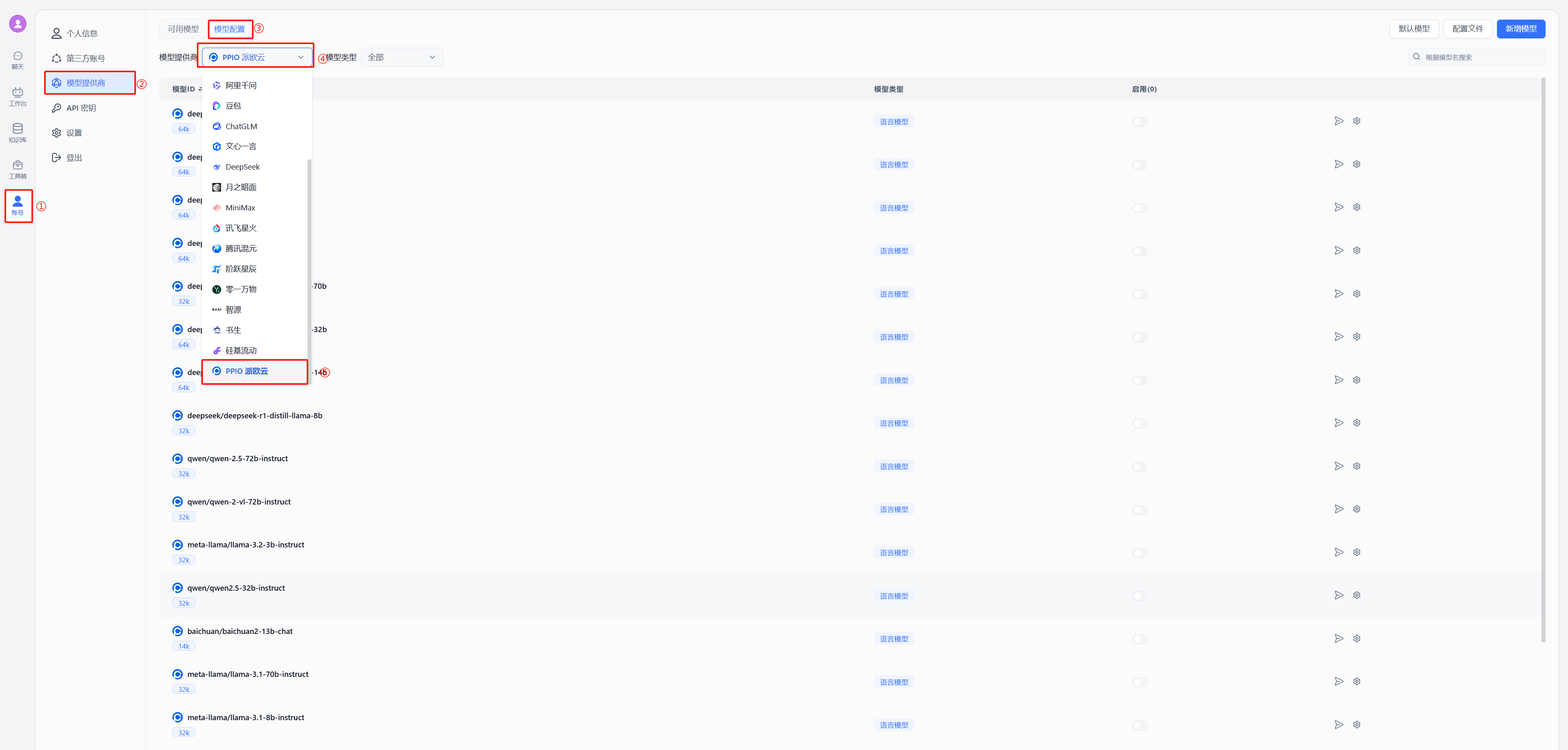

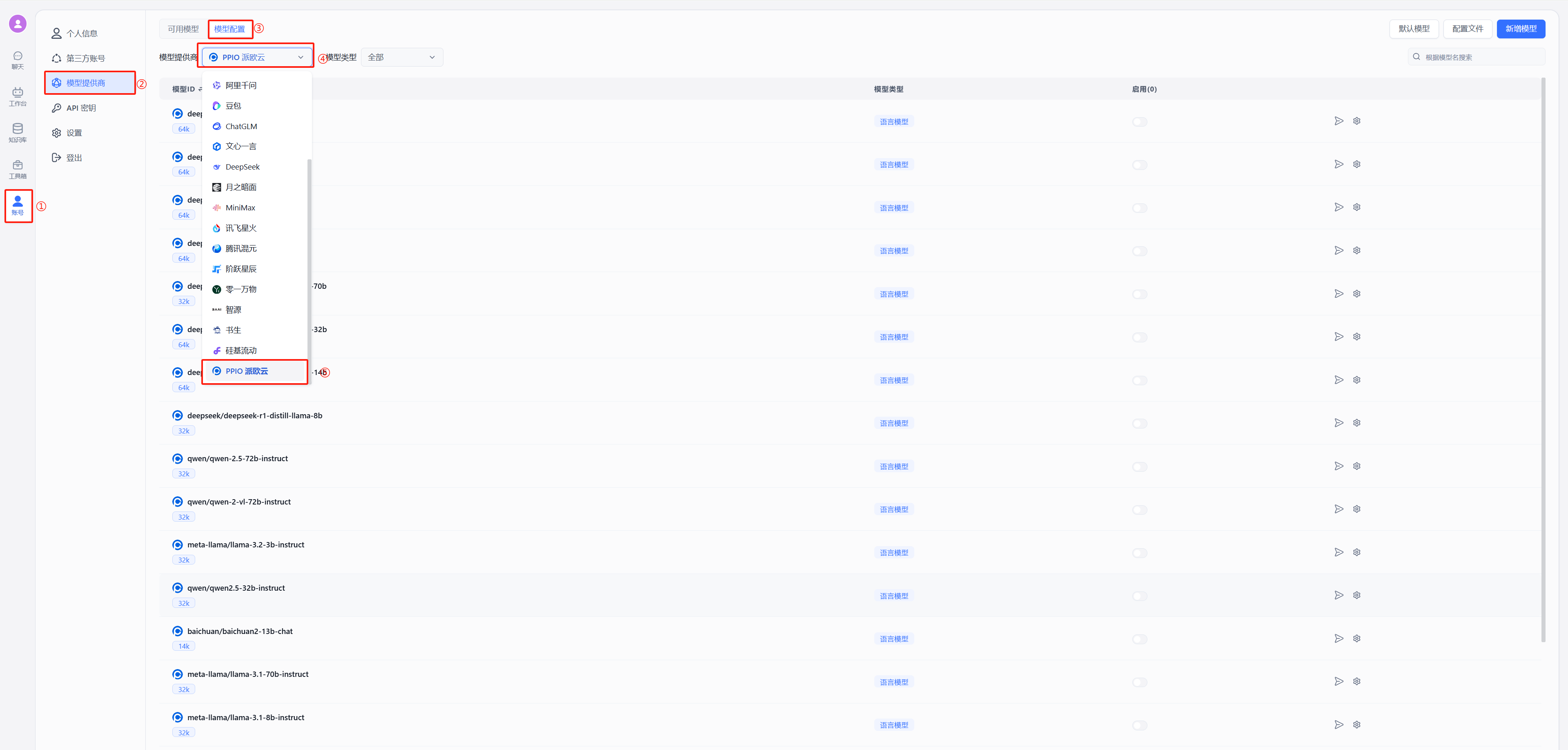

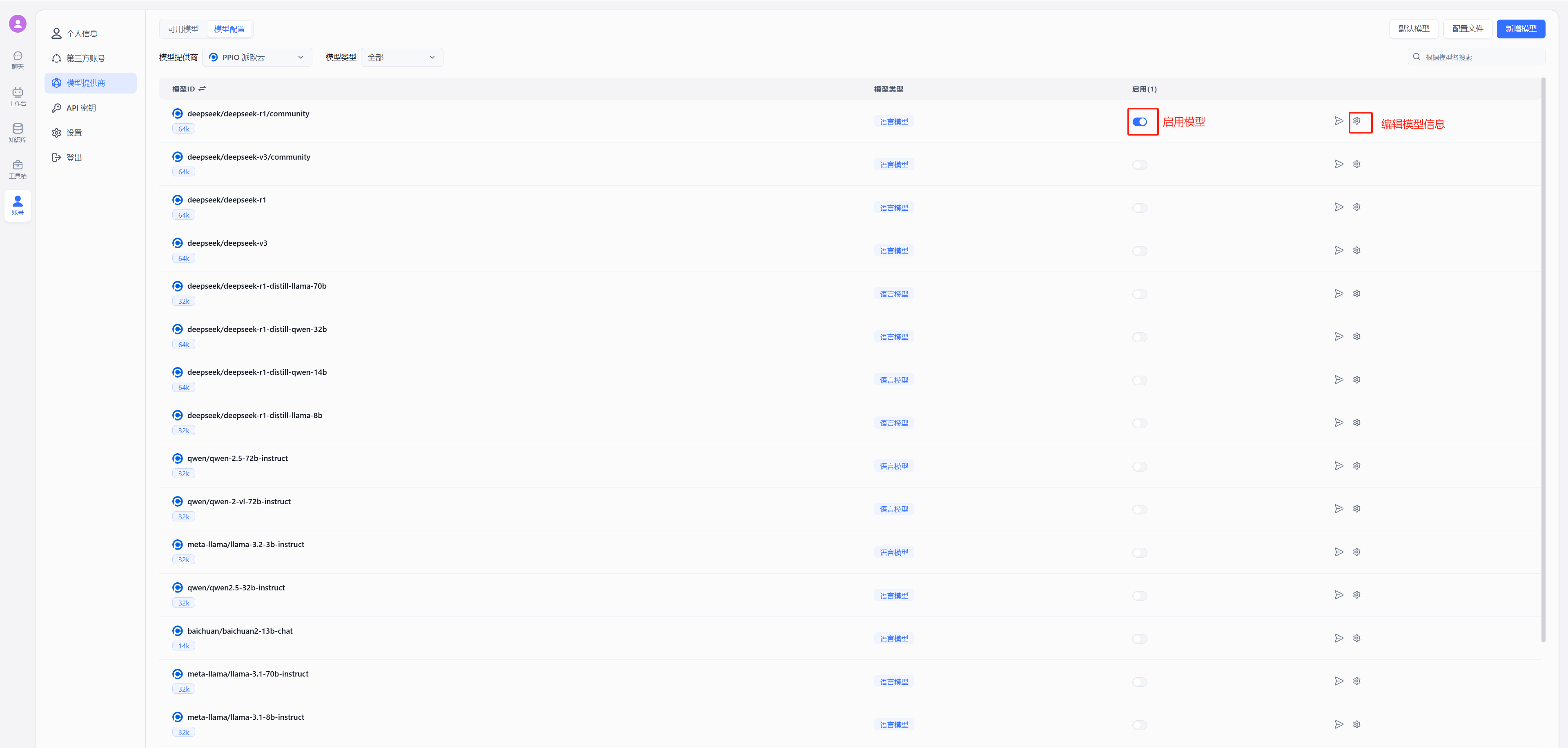

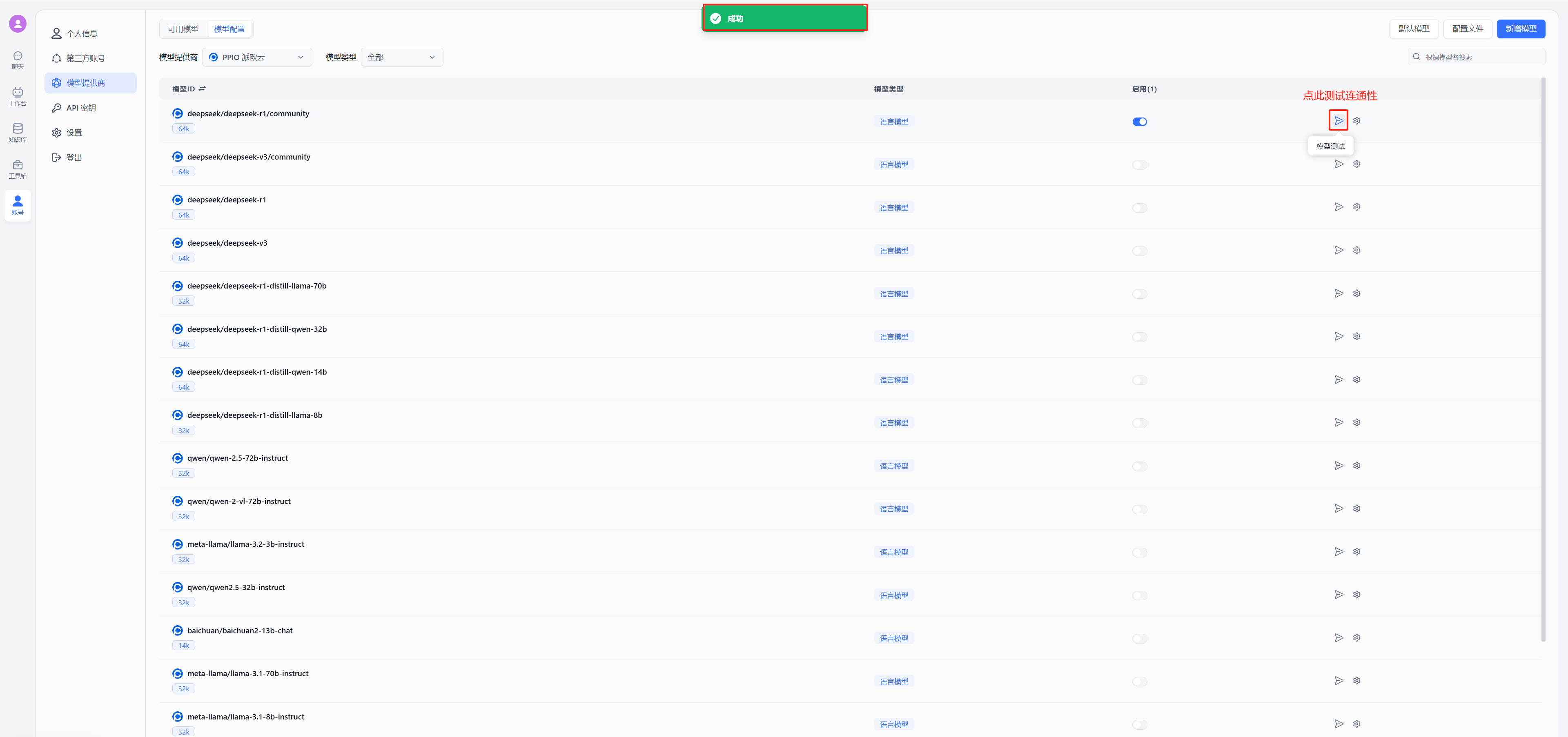

- 修改后重启 FastGPT,按下图在模型提供商中选择派欧云

|

||||

|

||||